CipherBank: Exploring the Boundary of LLM Reasoning Capabilities through Cryptography Challenges

Yu Li, Qizhi Pei, Mengyuan Sun, Honglin Lin, Chenlin Ming, Xin Gao, Jiang Wu, Conghui He, Lijun Wu

2025-04-29

Summary

This paper introduces CipherBank, a benchmark that tests how well large language models (LLMs) can decrypt messages encoded with ciphers, a core task in cryptography.

What's the problem?

Language models perform well on many tasks, but it is unclear how well they handle demanding reasoning challenges such as recovering messages that have been encrypted in different ways. These problems require rigorous logic and the ability to spot patterns, both of which remain difficult for current models.

What's the solution?

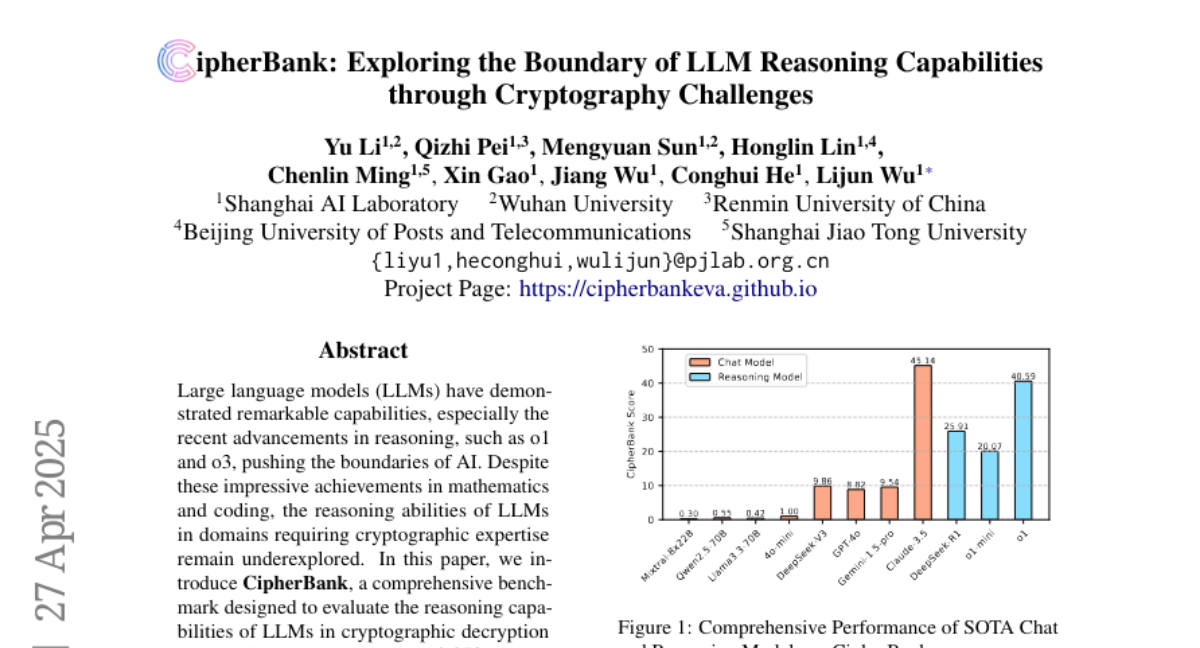

The researchers created CipherBank, a set of tests covering both classical and custom encryption methods, and used it to measure how well LLMs can decrypt the messages. They found that the models still struggle with these tasks and make many mistakes, especially on more complicated or unusual ciphers.
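
To make the decryption task concrete, here is a minimal Python sketch of one classical cipher and a strict scoring check. It is an illustration only: the Caesar shift is chosen as a familiar example and is not confirmed here as one of CipherBank's ciphers, and the helper names `caesar_shift` and `exact_match`, the sample sentence, and the exact-match scoring rule are all assumptions rather than the paper's actual setup.

```python
import string


def caesar_shift(text: str, shift: int) -> str:
    """Shift each ASCII letter by `shift` positions; leave other characters unchanged."""
    out = []
    for ch in text:
        if ch in string.ascii_lowercase:
            out.append(chr((ord(ch) - ord("a") + shift) % 26 + ord("a")))
        elif ch in string.ascii_uppercase:
            out.append(chr((ord(ch) - ord("A") + shift) % 26 + ord("A")))
        else:
            out.append(ch)
    return "".join(out)


def exact_match(prediction: str, reference: str) -> bool:
    """Hypothetical scoring rule: a decryption counts only if it matches exactly."""
    return prediction.strip() == reference.strip()


# Hypothetical test item: encrypt a sentence, then check a candidate decryption.
plaintext = "Meet at noon"
ciphertext = caesar_shift(plaintext, 3)   # "Phhw dw qrrq"
recovered = caesar_shift(ciphertext, -3)  # undoing the shift restores the plaintext

print(ciphertext)
print(exact_match(recovered, plaintext))
```

In a setup like this, a model would be shown only the ciphertext (possibly with a few worked examples) and its output would be compared against the plaintext. A single wrong character fails the check, which is one reason decryption is a demanding test of step-by-step reasoning.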

Why does it matter?

This matters because it shows the limits of current AI when it comes to advanced reasoning and problem-solving, especially in areas like security and cryptography. Understanding these weaknesses helps researchers know what needs to be improved for future AI systems.

Abstract

CipherBank evaluates the reasoning capabilities of large language models in cryptographic decryption tasks, revealing significant gaps in their performance across classical and custom encryption methods.