CLaSp: In-Context Layer Skip for Self-Speculative Decoding

Longze Chen, Renke Shan, Huiming Wang, Lu Wang, Ziqiang Liu, Run Luo, Jiawei Wang, Hamid Alinejad-Rokny, Min Yang

2025-06-02

Summary

This paper talks about CLaSp, a new technique that makes large language models generate text faster by skipping over some of the steps inside the model, without needing extra training or extra parts.

What's the problem?

The problem is that while speculative decoding can speed up how quickly language models produce text, most methods require building or training extra modules, which makes things more complicated and less flexible for different models and tasks.

What's the solution?

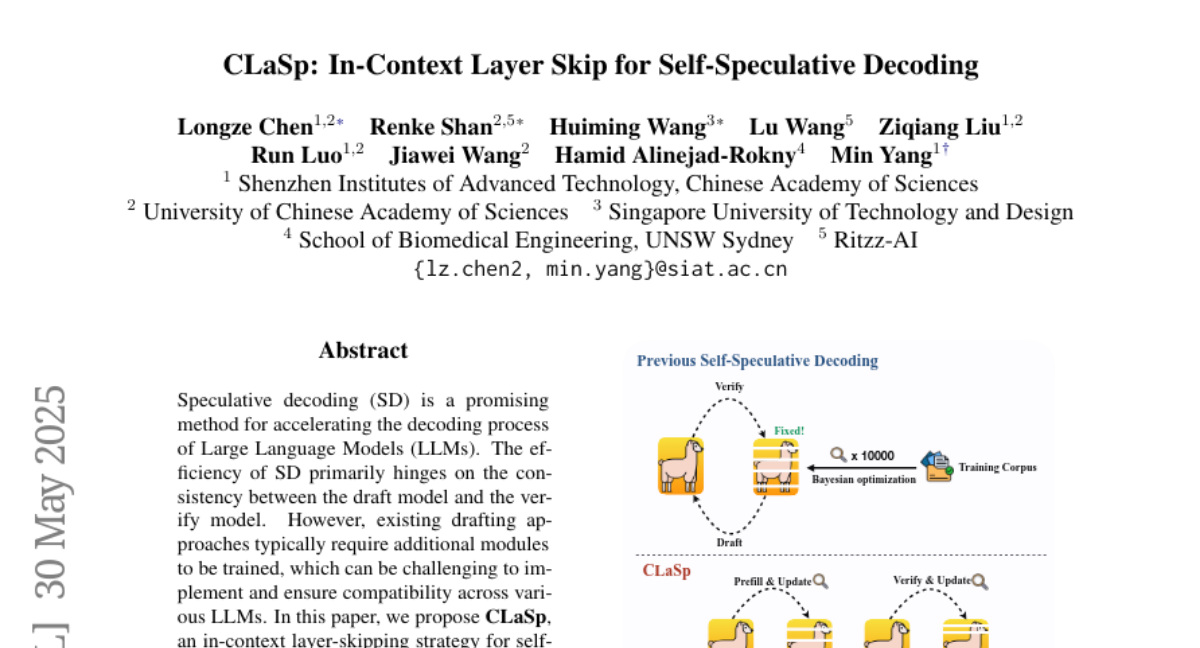

The researchers created CLaSp, which skips certain layers inside the model as it generates text, using a smart algorithm to decide which steps to skip based on the current context. This method doesn't need any new modules or extra training and can adjust itself as it goes, making the process much faster while keeping the quality of the text the same.

Why it matters?

This is important because it means AI models can answer questions and generate text more quickly, saving time and computer power, which is useful for everything from chatbots to large-scale research.

Abstract

CLaSp, an in-context layer-skipping strategy for self-speculative decoding, accelerates Large Language Model decoding without additional modules or training, achieving a 1.3x to 1.7x speedup on LLaMA3 models.