Cockatiel: Ensembling Synthetic and Human Preferenced Training for Detailed Video Caption

Luozheng Qin, Zhiyu Tan, Mengping Yang, Xiaomeng Yang, Hao Li

2025-03-17

Summary

This paper introduces Cockatiel, a method to improve AI's ability to create detailed video captions that align with what humans prefer.

What's the problem?

Current AI models that create captions for videos often have two main issues: their ability is skewed towards certain aspects of captioning rather than balanced across all of them, and their captions don't always match what humans prefer.

What's the solution?

Cockatiel uses a three-stage training process. First, it uses a scorer, derived from a human-annotated dataset, to select synthetic captions that both describe the video accurately and match human preferences. Then, it trains a large AI model (Cockatiel-13B) on this curated dataset. Finally, it distills a smaller, more efficient model (Cockatiel-8B) from the larger model.
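The first stage (scorer-based caption selection) can be illustrated with a minimal sketch. Everything here is an assumption made for illustration and is not taken from the paper: the `Candidate` type, the `select_captions` function, the threshold, the rule of keeping the single best caption per video, and the toy word-count scorer that stands in for the learned scorer.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Candidate:
    """One synthetic caption produced by some captioning model (hypothetical type)."""
    video_id: str
    source_model: str
    caption: str


def select_captions(
    candidates: List[Candidate],
    scorer: Callable[[str, str], float],
    threshold: float,
) -> Dict[str, Candidate]:
    """Keep, for each video, the highest-scoring caption that meets the threshold.

    `scorer(video_id, caption)` stands in for a learned, human-aligned scorer.
    The selection rule itself is an assumption of this sketch.
    """
    best: Dict[str, tuple] = {}
    for cand in candidates:
        score = scorer(cand.video_id, cand.caption)
        if score < threshold:
            continue  # disregard captions the scorer rates too low
        if cand.video_id not in best or score > best[cand.video_id][0]:
            best[cand.video_id] = (score, cand)
    return {vid: cand for vid, (_, cand) in best.items()}


def toy_scorer(video_id: str, caption: str) -> float:
    """Placeholder scorer: rewards longer captions. Not a real quality measure."""
    return len(caption.split()) / 10.0


candidates = [
    Candidate("v1", "model_a", "A dog runs."),
    Candidate("v1", "model_b", "A brown dog runs across a sunny park chasing a red ball."),
    Candidate("v2", "model_a", "A car."),
]
curated = select_captions(candidates, toy_scorer, threshold=0.25)
for video_id, cand in curated.items():
    print(video_id, cand.source_model, cand.caption)
```

In this sketch the curated mapping would then serve as training data for the large model. The captions that fall below the threshold are simply dropped, which mirrors the idea of keeping some synthetic captions while disregarding others.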

Why it matters?

This work matters because it improves both the quality of AI-generated video captions and how well they match human preferences, making them more useful in practice.

Abstract

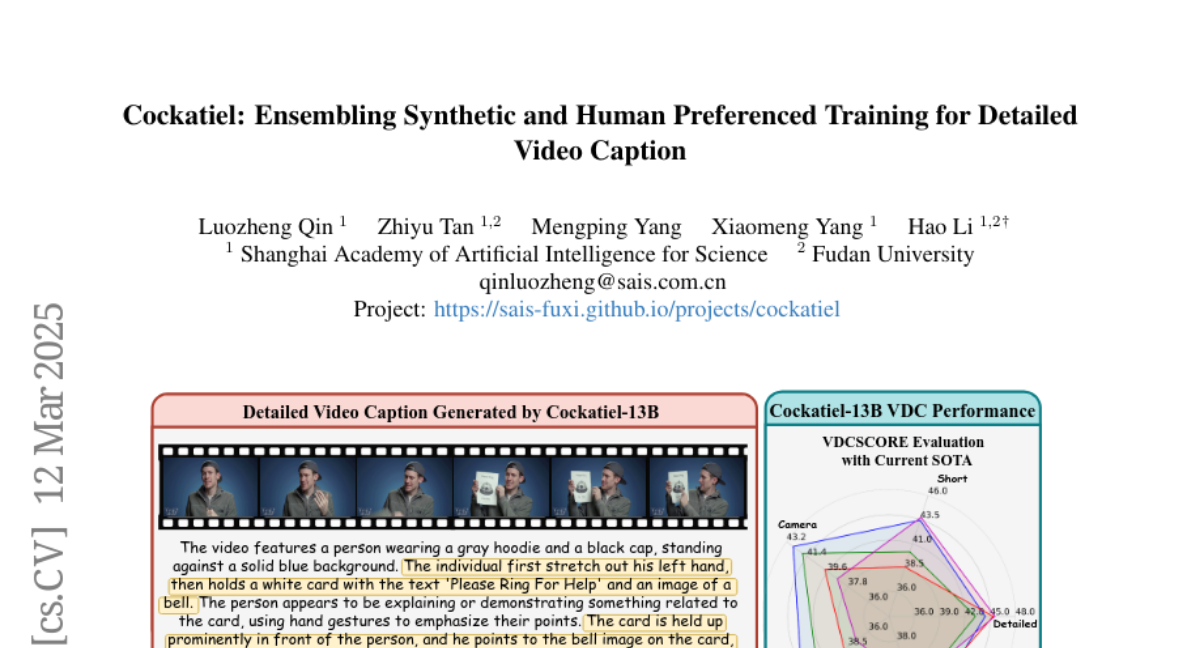

Video Detailed Captioning (VDC) is a crucial task for vision-language bridging, enabling fine-grained descriptions of complex video content. In this paper, we first comprehensively benchmark current state-of-the-art approaches and systematically identify two critical limitations: capability biased towards specific captioning aspects and misalignment with human preferences. To address these deficiencies, we propose Cockatiel, a novel three-stage training pipeline that ensembles synthetic and human-aligned training to improve VDC performance. In the first stage, we derive a scorer from a meticulously annotated dataset and use it to select synthetic captions that perform well on certain fine-grained video-caption alignment and are human-preferred, while disregarding the others. Then, we train Cockatiel-13B on this curated dataset to infuse it with the assembled model strengths and human preferences. Finally, we distill Cockatiel-8B from Cockatiel-13B for ease of use. Extensive quantitative and qualitative experiments demonstrate the effectiveness of our method: we not only set new state-of-the-art performance on VDCSCORE in a dimension-balanced way but also surpass leading alternatives on human preference by a large margin, as shown by the human evaluation results.