CODA: Repurposing Continuous VAEs for Discrete Tokenization

Zeyu Liu, Zanlin Ni, Yeguo Hua, Xin Deng, Xiao Ma, Cheng Zhong, Gao Huang

2025-03-25

Summary

This paper is about making AI better at understanding and generating images by improving how it breaks down images into smaller, manageable pieces.

What's the problem?

AI models often struggle to effectively compress images into a compact form while also making them easily usable for tasks like image generation.

What's the solution?

The researchers developed a new method called CODA that separates the process of compressing images from the process of making them usable for AI. This allows the AI to focus on each task separately, leading to better results.

Why it matters?

This work matters because it can lead to AI models that are better at generating high-quality images and understanding visual information, which has applications in areas like image editing, computer vision, and content creation.

Abstract

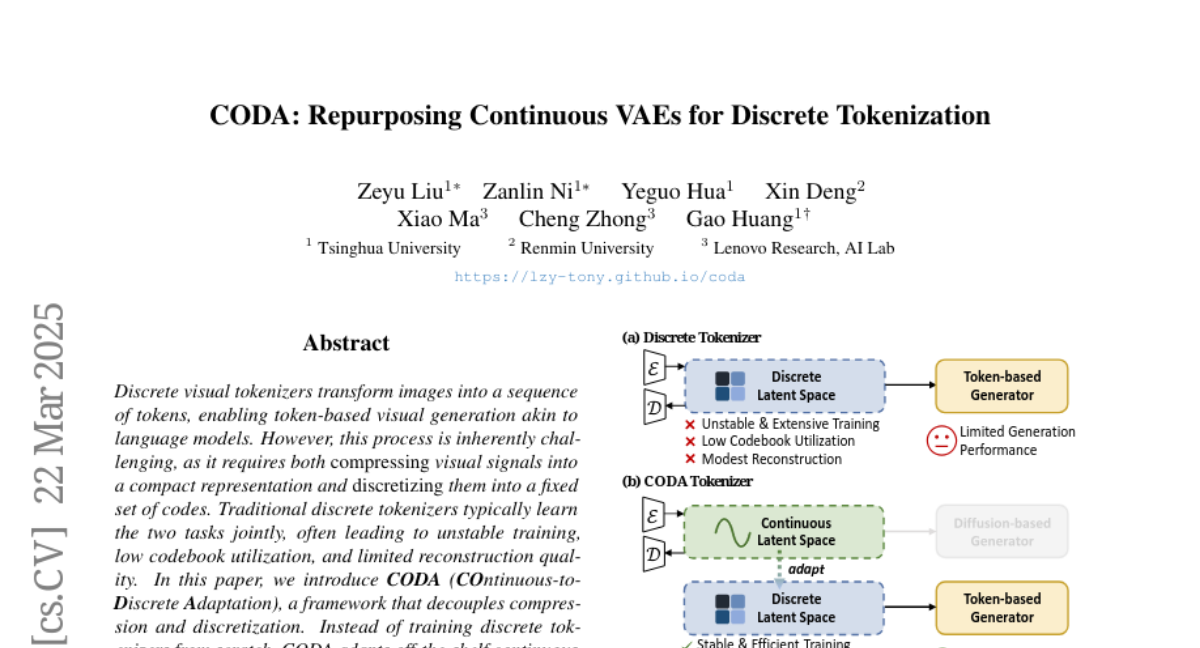

Discrete visual tokenizers transform images into a sequence of tokens, enabling token-based visual generation akin to language models. However, this process is inherently challenging, as it requires both compressing visual signals into a compact representation and discretizing them into a fixed set of codes. Traditional discrete tokenizers typically learn the two tasks jointly, often leading to unstable training, low codebook utilization, and limited reconstruction quality. In this paper, we introduce CODA(COntinuous-to-Discrete Adaptation), a framework that decouples compression and discretization. Instead of training discrete tokenizers from scratch, CODA adapts off-the-shelf continuous VAEs -- already optimized for perceptual compression -- into discrete tokenizers via a carefully designed discretization process. By primarily focusing on discretization, CODA ensures stable and efficient training while retaining the strong visual fidelity of continuous VAEs. Empirically, with 6 times less training budget than standard VQGAN, our approach achieves a remarkable codebook utilization of 100% and notable reconstruction FID (rFID) of 0.43 and 1.34 for 8 times and 16 times compression on ImageNet 256times 256 benchmark.