CODE: Confident Ordinary Differential Editing

Bastien van Delft, Tommaso Martorella, Alexandre Alahi

2024-08-26

Summary

This paper introduces CODE, a method for editing and enhancing images that handles noisy or out-of-distribution (OoD) inputs without any task-specific training.

What's the problem?

When the image used to guide editing is noisy or atypical, existing methods struggle to balance fidelity to the input against realism of the output. With degraded inputs, the result tends either to keep the corruption or to drift away from the original content.

What's the solution?

The authors present Confident Ordinary Differential Editing (CODE), which uses a pre-trained diffusion model as a generative prior and increases the likelihood of the input image under that prior, rather than relying on assumptions about how the image was corrupted. CODE requires no task-specific training and no handcrafted modules for particular corruption types, and it is compatible with any diffusion model. It moves the input toward the most probable in-distribution image nearby while staying faithful to it, and a confidence-interval-based clipping step lets it disregard certain pixels or information that would otherwise hurt the restoration.

Why does it matter?

This research offers a fully blind way to restore and edit images: it relies only on a pre-trained generative model and still handles severe degradation and OoD inputs. That could be useful in fields such as photography and film, where inputs are often imperfect and the type of corruption is not known in advance.

Abstract

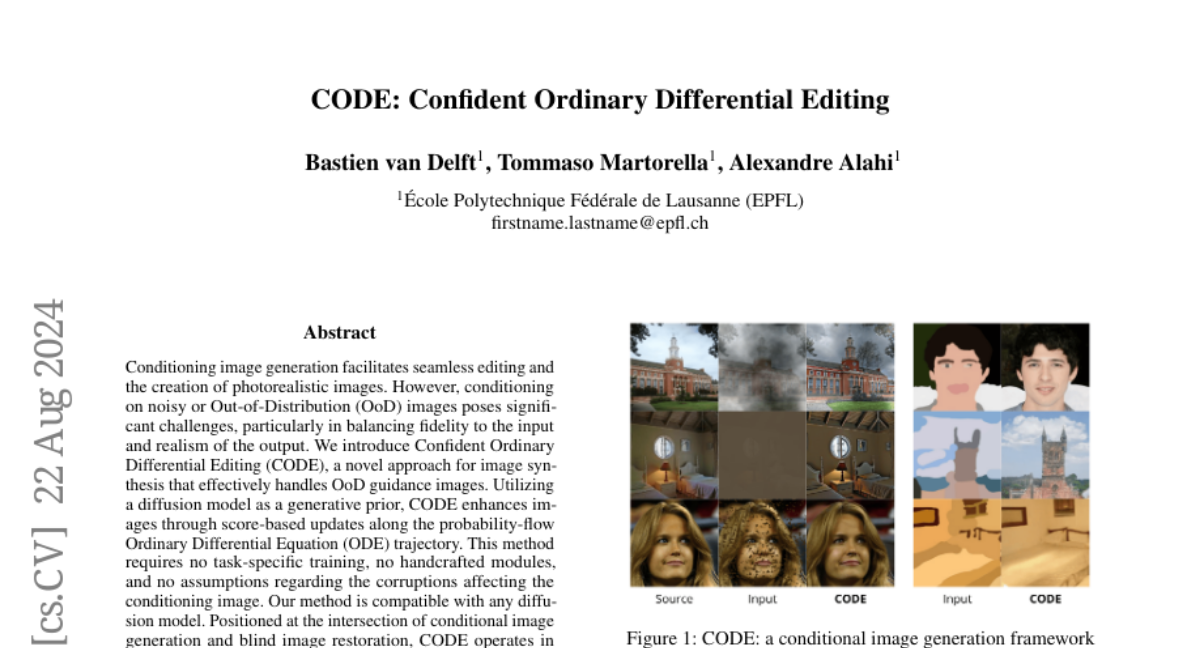

Conditioning image generation facilitates seamless editing and the creation of photorealistic images. However, conditioning on noisy or Out-of-Distribution (OoD) images poses significant challenges, particularly in balancing fidelity to the input and realism of the output. We introduce Confident Ordinary Differential Editing (CODE), a novel approach for image synthesis that effectively handles OoD guidance images. Utilizing a diffusion model as a generative prior, CODE enhances images through score-based updates along the probability-flow Ordinary Differential Equation (ODE) trajectory. This method requires no task-specific training, no handcrafted modules, and no assumptions regarding the corruptions affecting the conditioning image. Our method is compatible with any diffusion model. Positioned at the intersection of conditional image generation and blind image restoration, CODE operates in a fully blind manner, relying solely on a pre-trained generative model. Our method introduces an alternative approach to blind restoration: instead of targeting a specific ground truth image based on assumptions about the underlying corruption, CODE aims to increase the likelihood of the input image while maintaining fidelity. This results in the most probable in-distribution image around the input. Our contributions are twofold. First, CODE introduces a novel editing method based on ODE, providing enhanced control, realism, and fidelity compared to its SDE-based counterpart. Second, we introduce a confidence interval-based clipping method, which improves CODE's effectiveness by allowing it to disregard certain pixels or information, thus enhancing the restoration process in a blind manner. Experimental results demonstrate CODE's effectiveness over existing methods, particularly in scenarios involving severe degradation or OoD inputs.
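
The pipeline described in the abstract (encode along the probability-flow ODE, apply score-based updates, clip to a confidence interval, decode) can be illustrated with a toy sketch. This is not the authors' implementation. It assumes a Gaussian prior with an analytic score in place of a trained diffusion model, a variance-exploding noise schedule, a Langevin-style rule as the "score-based update", and clipping of the latent to `k` standard deviations of its marginal. The names `score`, `pf_ode`, `clip_to_confidence`, and `edit`, and all parameter values, are hypothetical.

```python
import numpy as np

# Toy prior N(MU, S^2); it stands in for a pre-trained diffusion model (assumption).
MU, S = 0.0, 1.0


def score(x, sigma):
    """Analytic score of the noised marginal N(MU, S^2 + sigma^2)."""
    return -(x - MU) / (S**2 + sigma**2)


def pf_ode(x, sigma_from, sigma_to, steps=200):
    """Euler integration of the variance-exploding probability-flow ODE,
    dx/dsigma = -sigma * score(x, sigma), from sigma_from to sigma_to."""
    sigmas = np.linspace(sigma_from, sigma_to, steps + 1)
    x = np.array(x, dtype=float)
    for s0, s1 in zip(sigmas[:-1], sigmas[1:]):
        x = x + (s1 - s0) * (-s0 * score(x, s0))
    return x


def clip_to_confidence(z, sigma, k=2.0):
    """Clip latent values to MU +/- k standard deviations of the marginal
    at noise level sigma (an assumed form of confidence-interval clipping)."""
    half_width = k * np.sqrt(S**2 + sigma**2)
    return np.clip(z, MU - half_width, MU + half_width)


def edit(x0, sigma=1.0, n_updates=20, step=0.05, k=2.0, seed=0):
    """Encode, clip, run Langevin-style score updates, then decode."""
    rng = np.random.default_rng(seed)
    z = pf_ode(x0, 0.0, sigma)              # deterministic encoding
    z = clip_to_confidence(z, sigma, k)     # discard implausible latent values
    for _ in range(n_updates):
        noise = rng.standard_normal(z.shape)
        z = z + step * score(z, sigma) + np.sqrt(2.0 * step) * noise
    return pf_ode(z, sigma, 0.0)            # deterministic decoding


if __name__ == "__main__":
    corrupted = np.array([10.0, -8.0, 0.3])  # first two values are far out of distribution
    print(edit(corrupted))
```

Because encoding and decoding are deterministic, an input that skips the update and clipping steps is reconstructed almost exactly (up to Euler discretisation error), which is where fidelity comes from in this sketch. The update and clipping steps are what pull out-of-distribution values back toward regions the prior considers likely.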