ColorBench: Can VLMs See and Understand the Colorful World? A Comprehensive Benchmark for Color Perception, Reasoning, and Robustness

Yijun Liang, Ming Li, Chenrui Fan, Ziyue Li, Dang Nguyen, Kwesi Cobbina, Shweta Bhardwaj, Jiuhai Chen, Fuxiao Liu, Tianyi Zhou

2025-04-17

Summary

This paper introduces ColorBench, a benchmark that tests how well vision-language models (AI models that process both images and text) can perceive and reason about color in images.

What's the problem?

Color is central to how humans see and understand the world, yet it is unclear whether current AI models notice, understand, and use color information the way people do. Most models are trained to recognize objects or answer questions, so they may miss or misread color details, which can lead to mistakes.

What's the solution?

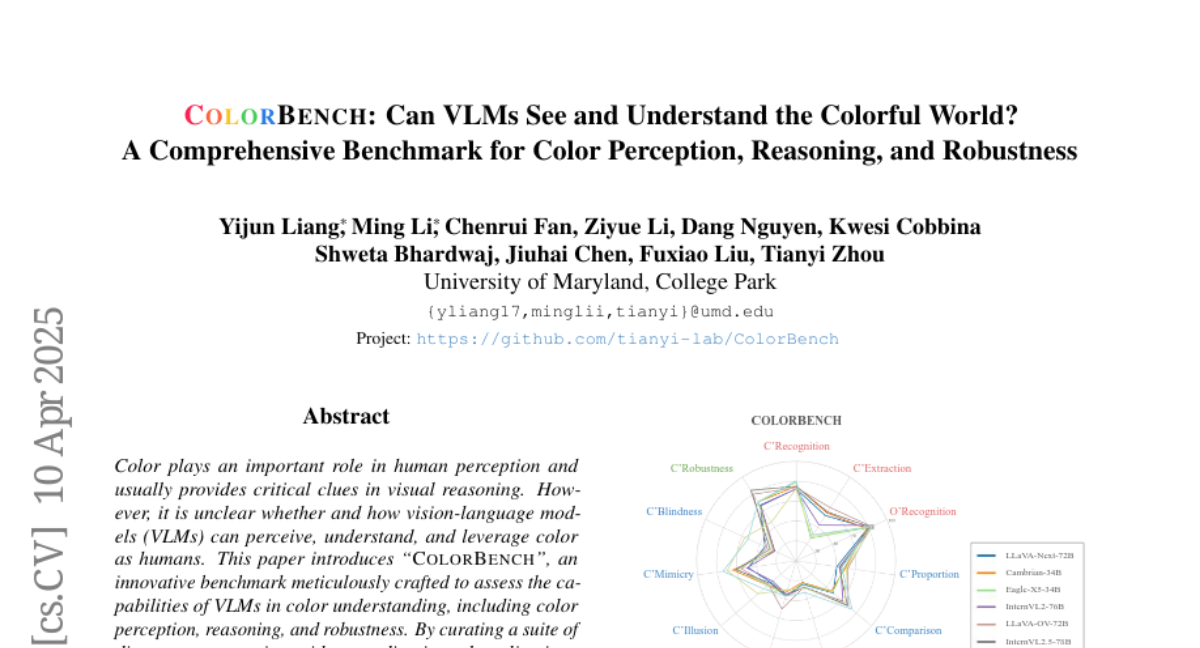

The researchers created ColorBench, a benchmark with a variety of tasks that check if these AI models can do things like identify colors, count objects of a certain color, and stay accurate even when colors are changed or used in tricky ways. They tested 32 different models and found that even the biggest and most advanced ones often struggle with color-related tasks, especially when it comes to counting colors or dealing with color illusions. They also found that having the AI explain its reasoning step by step helped it do better, but there are still big gaps in performance.
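To make the idea of scoring such a benchmark concrete, here is a minimal sketch of how per-task accuracy and a simple robustness score could be computed. It is an illustration under stated assumptions, not the paper's evaluation code: the record fields (`task`, `prediction`, `answer`), the task names, and the "correct on every recolored variant" definition of robustness are all hypothetical choices made for this example.

```python
from collections import defaultdict


def accuracy_by_task(records):
    """Return {task: fraction of correct answers}.

    `records` is an iterable of dicts with the hypothetical keys
    'task', 'prediction', and 'answer'.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for r in records:
        total[r["task"]] += 1
        if r["prediction"] == r["answer"]:
            correct[r["task"]] += 1
    return {task: correct[task] / total[task] for task in total}


def robustness(groups):
    """Fraction of groups answered correctly on every variant.

    Each group is a list of (prediction, answer) pairs covering one
    image and its recolored versions. This definition is an assumption
    made for illustration.
    """
    if not groups:
        return 0.0
    stable = sum(all(p == a for p, a in g) for g in groups)
    return stable / len(groups)


# Toy data, invented for this example only.
records = [
    {"task": "color_recognition", "prediction": "A", "answer": "A"},
    {"task": "color_recognition", "prediction": "B", "answer": "C"},
    {"task": "color_counting", "prediction": "D", "answer": "D"},
]
groups = [
    [("A", "A"), ("A", "A")],  # correct on the original and the variant
    [("B", "B"), ("C", "B")],  # breaks once the colors are changed
]

print(accuracy_by_task(records))
print(robustness(groups))
```

Reporting accuracy per task rather than as one overall number is what lets a benchmark like this show that a model is strong at, say, naming colors but weak at counting them.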

Why does it matter?

This matters because color is a huge part of how we understand images in real life, from medical scans to online shopping. If AI models can't handle color well, they might make mistakes in important situations. ColorBench helps researchers see where the problems are so they can build smarter, more reliable AI that understands the colorful world as humans do.

Abstract

ColorBench evaluates vision-language models' color perception, reasoning, and robustness, revealing limitations and emphasizing the need for enhanced color comprehension in multimodal AI.