CoMP: Continual Multimodal Pre-training for Vision Foundation Models

Yitong Chen, Lingchen Meng, Wujian Peng, Zuxuan Wu, Yu-Gang Jiang

2025-03-26

Summary

This paper is about improving AI models that understand both images and text by training them continuously on new information.

What's the problem?

AI models that work with images often have a hard time understanding them in the same way that they understand text. They also might not be able to handle images of different sizes.

What's the solution?

The researchers developed a new method called CoMP that trains these AI models in a way that helps them better connect images and text, and also allows them to work with images of any size.

Why it matters?

This work matters because it can lead to AI models that are better at understanding the world around us, which could be useful for things like image search, self-driving cars, and virtual assistants.

Abstract

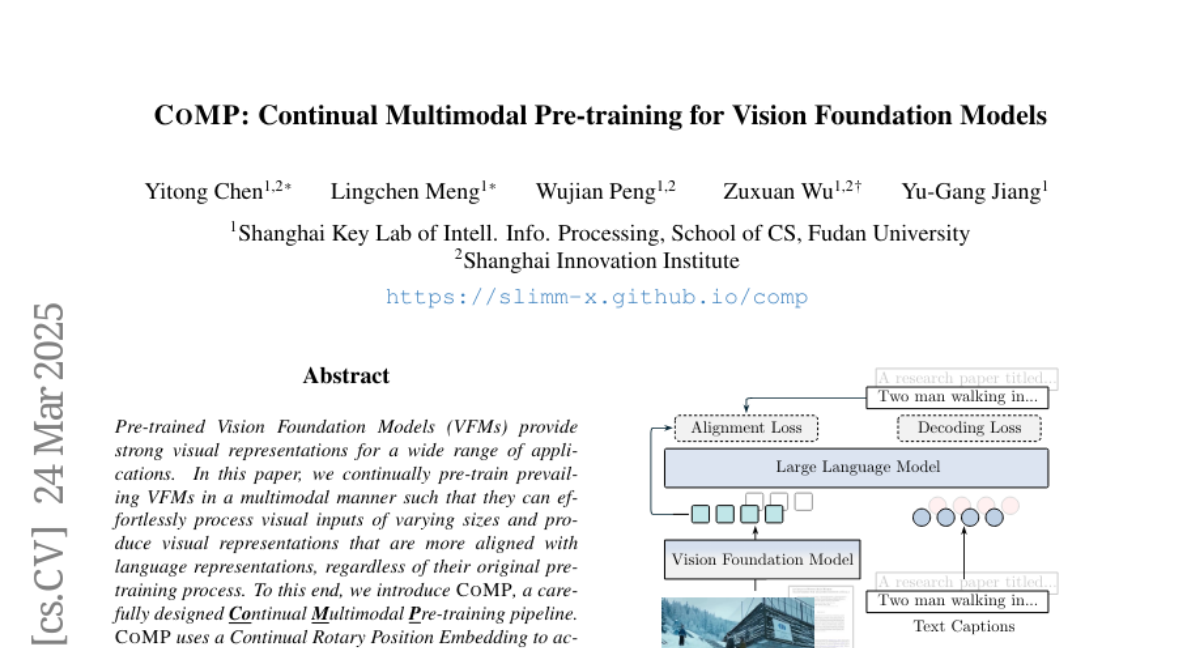

Pre-trained Vision Foundation Models (VFMs) provide strong visual representations for a wide range of applications. In this paper, we continually pre-train prevailing VFMs in a multimodal manner such that they can effortlessly process visual inputs of varying sizes and produce visual representations that are more aligned with language representations, regardless of their original pre-training process. To this end, we introduce CoMP, a carefully designed multimodal pre-training pipeline. CoMP uses a Continual Rotary Position Embedding to support native resolution continual pre-training, and an Alignment Loss between visual and textual features through language prototypes to align multimodal representations. By three-stage training, our VFMs achieve remarkable improvements not only in multimodal understanding but also in other downstream tasks such as classification and segmentation. Remarkably, CoMP-SigLIP achieves scores of 66.7 on ChartQA and 75.9 on DocVQA with a 0.5B LLM, while maintaining an 87.4% accuracy on ImageNet-1K and a 49.5 mIoU on ADE20K under frozen chunk evaluation.