CompeteSMoE -- Statistically Guaranteed Mixture of Experts Training via Competition

Nam V. Nguyen, Huy Nguyen, Quang Pham, Van Nguyen, Savitha Ramasamy, Nhat Ho

2025-05-21

Summary

This paper introduces CompeteSMoE, a method for training large language models in which the model's 'experts' compete with one another, with the aim of making the model both more accurate and more efficient.

What's the problem?

Traditional AI models often process every input with the same full set of computations, which becomes slow and inefficient as models grow larger and tasks become more complicated.

What's the solution?

The researchers extended a method called sparse mixture of experts (SMoE) with a competition mechanism, so that only the most suitable 'experts' in the model are chosen to handle each part of a task. The aim is to make processing faster and the results more accurate.
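
To make the idea of experts competing for an input more concrete, here is a minimal NumPy sketch. It is not the authors' implementation: the summary does not say how the competition is scored, so the sketch assumes each expert is a plain linear map and that an expert's score is the L2 norm of its output. The function name `competition_route`, the parameter `k`, and the softmax weighting of the winners are all hypothetical choices made for illustration.

```python
import numpy as np

def competition_route(x, expert_weights, k=2):
    """Select the k experts whose outputs respond most strongly to x.

    Assumptions (not taken from the paper summary): experts are linear
    maps, and the competition score is the L2 norm of each expert's output.
    """
    # Run every expert on the input: shape (num_experts, d_out).
    outputs = np.stack([W @ x for W in expert_weights])
    # Assumed scoring rule: strength of each expert's response.
    scores = np.linalg.norm(outputs, axis=1)
    # Indices of the k highest-scoring experts, best first.
    winners = np.argsort(scores)[::-1][:k]
    # Softmax over the winners' scores gives the mixing weights.
    s = scores[winners]
    mix = np.exp(s - s.max())
    mix /= mix.sum()
    # Weighted combination of the winning experts' outputs.
    combined = (mix[:, None] * outputs[winners]).sum(axis=0)
    return winners, mix, combined

# Toy usage: three hypothetical experts that scale the input differently.
experts = [0.1 * np.eye(3), 2.0 * np.eye(3), 1.0 * np.eye(3)]
winners, mix, combined = competition_route(np.ones(3), experts, k=2)
```

Note that this sketch evaluates every expert before picking the winners, so by itself it does not save any computation; it only illustrates how a competition could decide which experts handle an input.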

Why does it matter?

This matters because it helps build smarter and more efficient AI systems, allowing them to tackle complex problems without needing as much computing power, which is important as AI gets used for more and more real-world applications.

Abstract

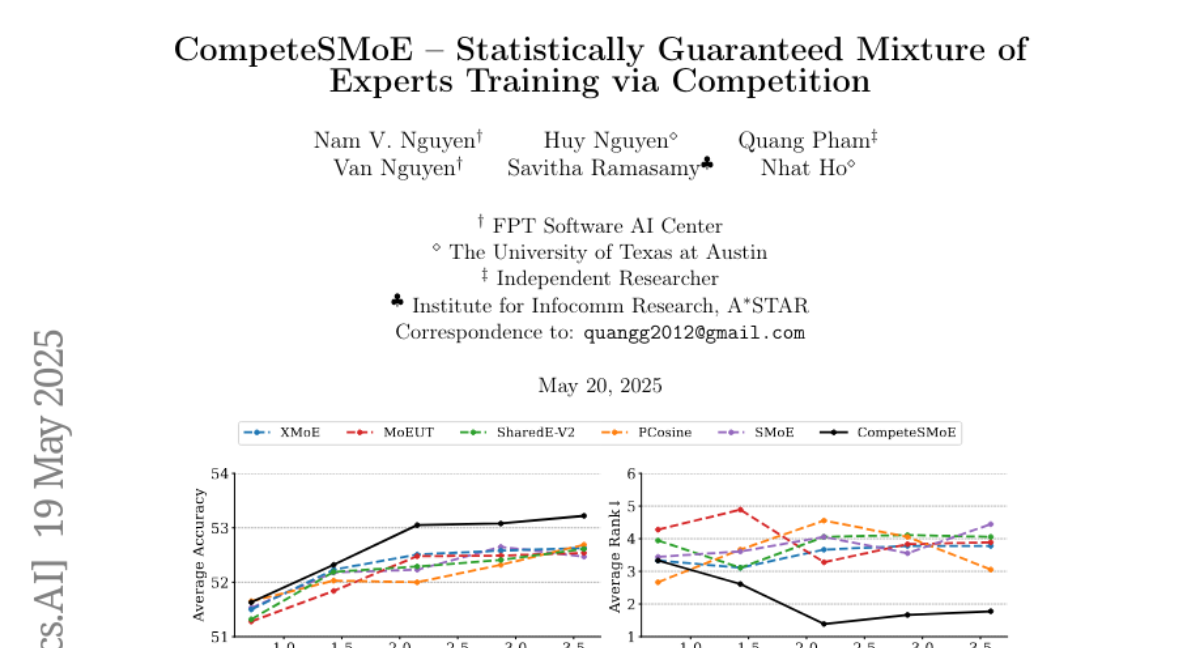

CompeteSMoE enhances sparse mixture of experts (SMoE) by introducing a competition mechanism to improve routing efficiency and model performance in large language models.