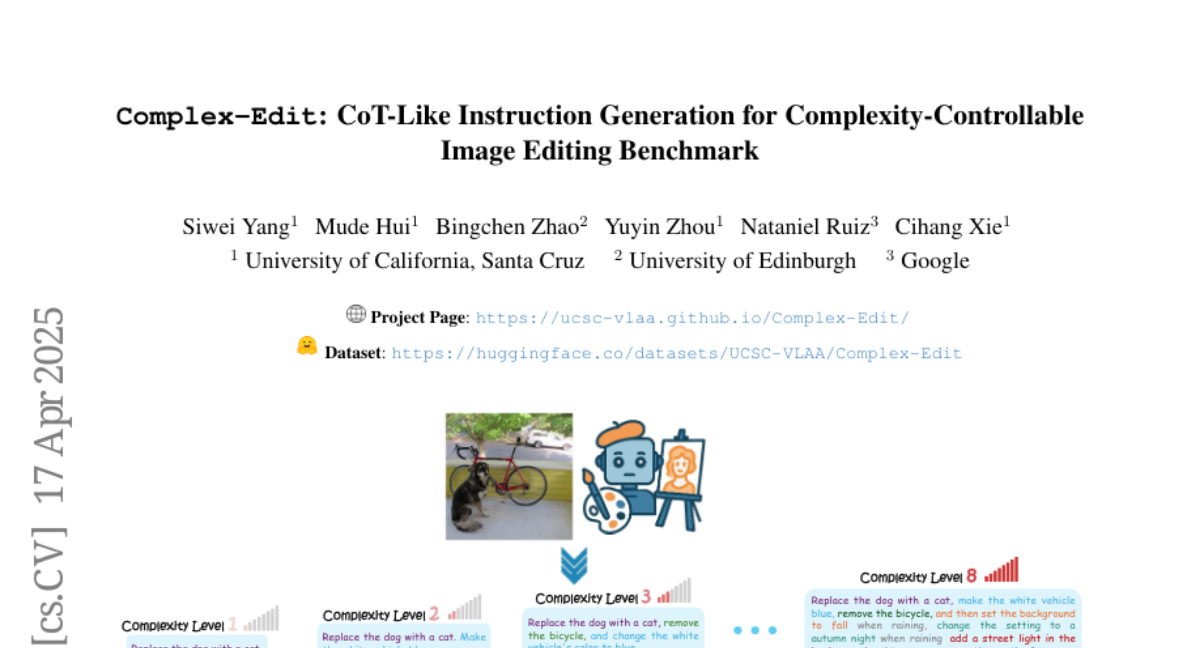

Complex-Edit: CoT-Like Instruction Generation for Complexity-Controllable Image Editing Benchmark

Siwei Yang, Mude Hui, Bingchen Zhao, Yuyin Zhou, Nataniel Ruiz, Cihang Xie

2025-04-18

Summary

This paper introduces Complex-Edit, a benchmark for testing how well AI models follow instructions to edit images, especially as those instructions range from simple to highly complicated.

What's the problem?

The problem is that current benchmarks for image-editing AI don't systematically measure how models cope with different levels of instruction complexity, so it's hard to tell whether a model can keep track of and carry out every step when an edit gets complicated. There are also concerns about relying on synthetic (AI-generated) data for training and testing, which can make the results less reliable.

What's the solution?

The researchers built a benchmark that uses GPT-4o to generate a wide variety of editing instructions, from easy to difficult, in a step-by-step, chain-of-thought-like way, and then used these instructions to evaluate how well different image-editing models perform. This makes it easier to pinpoint where models struggle, whether by forgetting parts of an instruction or by mishandling more complex edits.

Why it matters?

This matters because it helps AI developers understand the real strengths and weaknesses of their image editing models, so they can make them better at following detailed instructions and handling more challenging editing tasks. This is important for creative work, design, and any situation where people want to use AI to edit images in specific ways.

Abstract

A benchmark that uses GPT-4o to generate instructions evaluates instruction-based image-editing models across varying levels of complexity, revealing performance gaps, retention issues, and challenges linked to synthetic data.