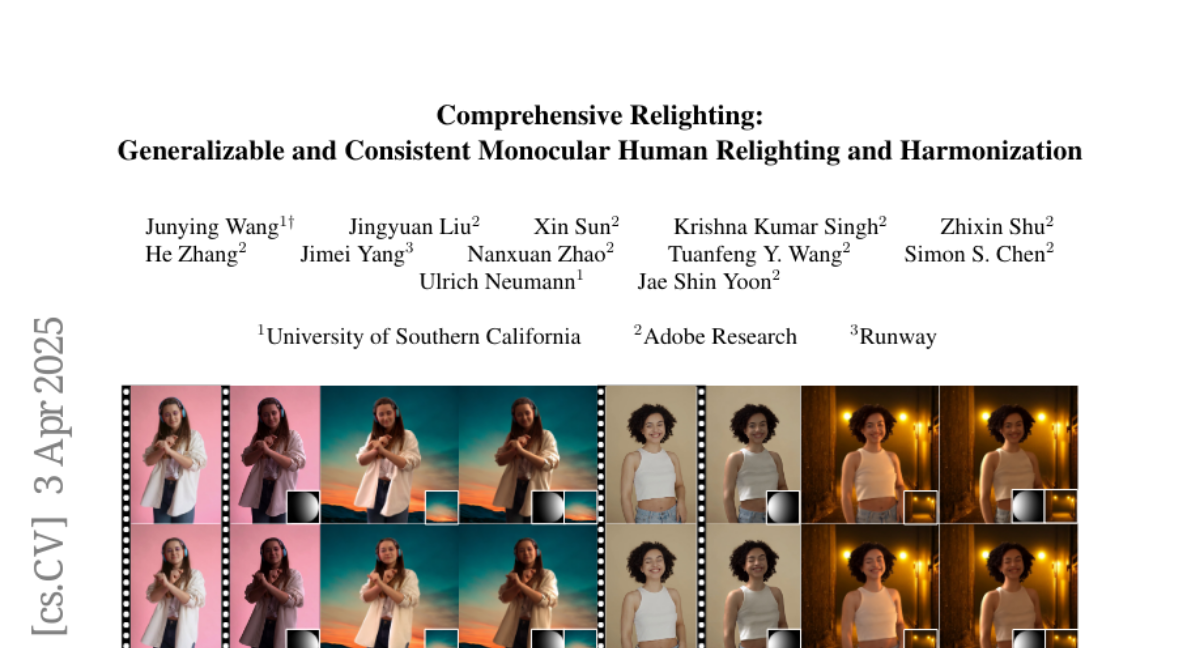

Comprehensive Relighting: Generalizable and Consistent Monocular Human Relighting and Harmonization

Junying Wang, Jingyuan Liu, Xin Sun, Krishna Kumar Singh, Zhixin Shu, He Zhang, Jimei Yang, Nanxuan Zhao, Tuanfeng Y. Wang, Simon S. Chen, Ulrich Neumann, Jae Shin Yoon

2025-04-07

Summary

This paper presents Comprehensive Relighting, an AI method that changes how people are lit in photos or videos while keeping them consistent with their surroundings, even when the scene or lighting changes.

What's the problem?

Existing tools only handle specific cases, such as faces or static people, largely because training data is scarce. They also struggle to keep lighting consistent across different scenes or across video frames without looking fake.

What's the solution?

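The method reuses a pre-trained diffusion model (an AI image generator) as a general image prior, relighting the person and harmonizing them with the background in a coarse-to-fine way. It adds a temporal lighting module that learns from real-world videos, without ground-truth labels, to keep lighting smooth over time. A final guided refinement step preserves sharp details from the input image.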

Why does it matter?

This could help filmmakers, game developers, and VR creators adjust lighting realistically without expensive reshoots or manual editing, saving time and money.

Abstract

This paper introduces Comprehensive Relighting, the first all-in-one approach that can both control and harmonize the lighting from an image or video of humans with arbitrary body parts from any scene. Building such a generalizable model is extremely challenging due to the lack of datasets, which restricts existing image-based relighting models to a specific scenario (e.g., face or static human). To address this challenge, we repurpose a pre-trained diffusion model as a general image prior and jointly model the human relighting and background harmonization in a coarse-to-fine framework. To further enhance the temporal coherence of the relighting, we introduce an unsupervised temporal lighting model that learns lighting cycle consistency from many real-world videos without any ground truth. At inference time, our temporal lighting module is combined with the diffusion models through spatio-temporal feature blending algorithms without extra training, and we apply a new guided refinement as a post-processing step to preserve the high-frequency details from the input image. In the experiments, Comprehensive Relighting shows strong generalizability and temporal coherence of lighting, outperforming existing image-based human relighting and harmonization methods.
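
The abstract does not specify how the guided refinement works, so the following is only a generic illustration of what "preserving high-frequency details from the input image" can mean, not the authors' algorithm. It assumes grayscale images stored as lists of rows with values in [0, 1], uses a simple box blur as the low-pass filter, and adds the input's high-frequency residual onto the low-frequency part of the relit image. The names `box_blur` and `transfer_detail` are hypothetical.

```python
def box_blur(img, radius=1):
    """Mean filter over a (2*radius+1)^2 window, clamping at the borders."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy = min(max(y + dy, 0), h - 1)  # clamp row index
                    xx = min(max(x + dx, 0), w - 1)  # clamp column index
                    total += img[yy][xx]
                    count += 1
            out[y][x] = total / count
    return out


def transfer_detail(source, relit, radius=1):
    """Hypothetical detail transfer (an assumption, not the paper's method).

    Keeps the low frequencies (overall shading) of `relit` and adds back
    the high-frequency residual (fine texture) of `source`.
    """
    src_low = box_blur(source, radius)
    relit_low = box_blur(relit, radius)
    h, w = len(source), len(source[0])
    return [
        [
            # clamp the result to the valid intensity range [0, 1]
            min(max(relit_low[y][x] + (source[y][x] - src_low[y][x]), 0.0), 1.0)
            for x in range(w)
        ]
        for y in range(h)
    ]
```

In this sketch, a relit image that lost its texture (for example, a flat gray output) regains the input's fine pattern, while the overall brightness still follows the relit result. A real system would more likely use an edge-aware filter and operate per color channel.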