Concat-ID: Towards Universal Identity-Preserving Video Synthesis

Yong Zhong, Zhuoyi Yang, Jiayan Teng, Xiaotao Gu, Chongxuan Li

2025-03-19

Summary

This paper is about a new AI system called Concat-ID that can create videos of people while making sure they look like the same person throughout the video, even if you change things like their expression or background.

What's the problem?

It's hard to create AI-generated videos where the person's identity stays consistent, especially when you want to edit the video or put the person in different situations.

What's the solution?

Concat-ID uses a special technique to extract key features from images of the person and then combines those features with the video creation process. This helps the AI maintain the person's identity while still allowing for changes and edits.

Why it matters?

This work is important because it can be used to create realistic and editable videos of people, which could have applications in areas like virtual try-on, video games, and special effects.

Abstract

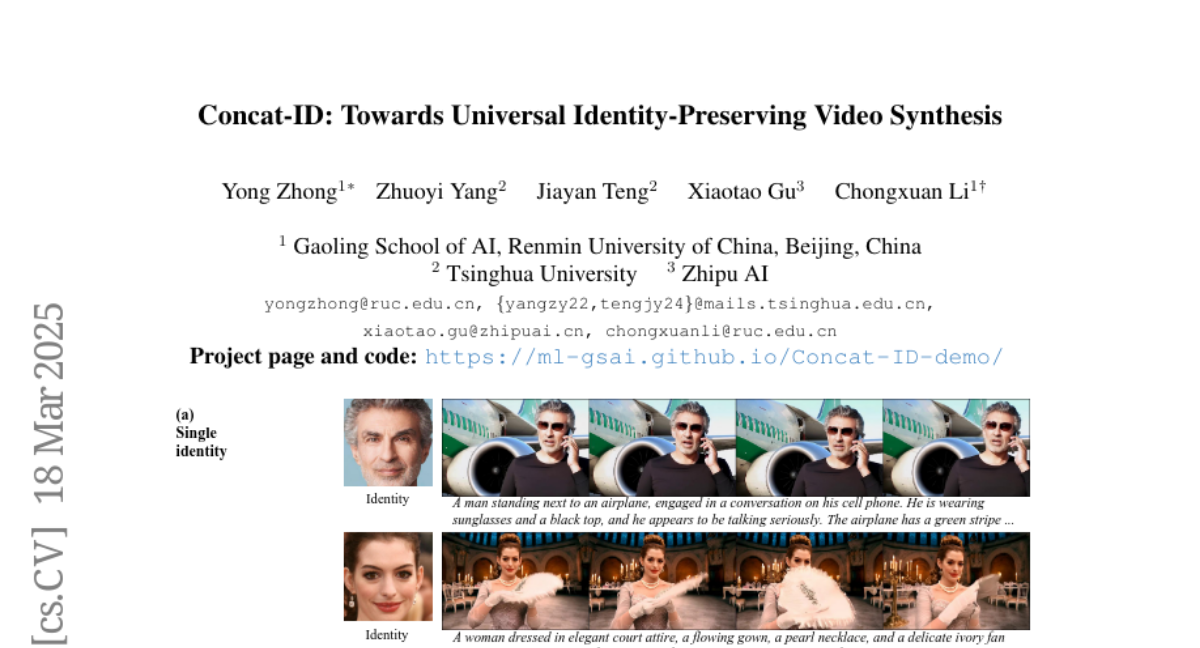

We present Concat-ID, a unified framework for identity-preserving video generation. Concat-ID employs Variational Autoencoders to extract image features, which are concatenated with video latents along the sequence dimension, leveraging solely 3D self-attention mechanisms without the need for additional modules. A novel cross-video pairing strategy and a multi-stage training regimen are introduced to balance identity consistency and facial editability while enhancing video naturalness. Extensive experiments demonstrate Concat-ID's superiority over existing methods in both single and multi-identity generation, as well as its seamless scalability to multi-subject scenarios, including virtual try-on and background-controllable generation. Concat-ID establishes a new benchmark for identity-preserving video synthesis, providing a versatile and scalable solution for a wide range of applications.