Concept Lancet: Image Editing with Compositional Representation Transplant

Jinqi Luo, Tianjiao Ding, Kwan Ho Ryan Chan, Hancheng Min, Chris Callison-Burch, René Vidal

2025-04-08

Summary

This paper introduces Concept Lancet (CoLan), a framework for diffusion-based image editing that works like a surgeon: it swaps, adds, or removes specific visual concepts (such as a hairstyle or an object) while leaving the rest of the picture intact.

What's the problem?

Current AI image editors struggle to pick the right edit strength. Too strong an edit changes parts of the image that should stay the same, while too weak an edit fails to make the requested change. The right strength differs from image to image, so finding it usually takes costly trial and error.

What's the solution?

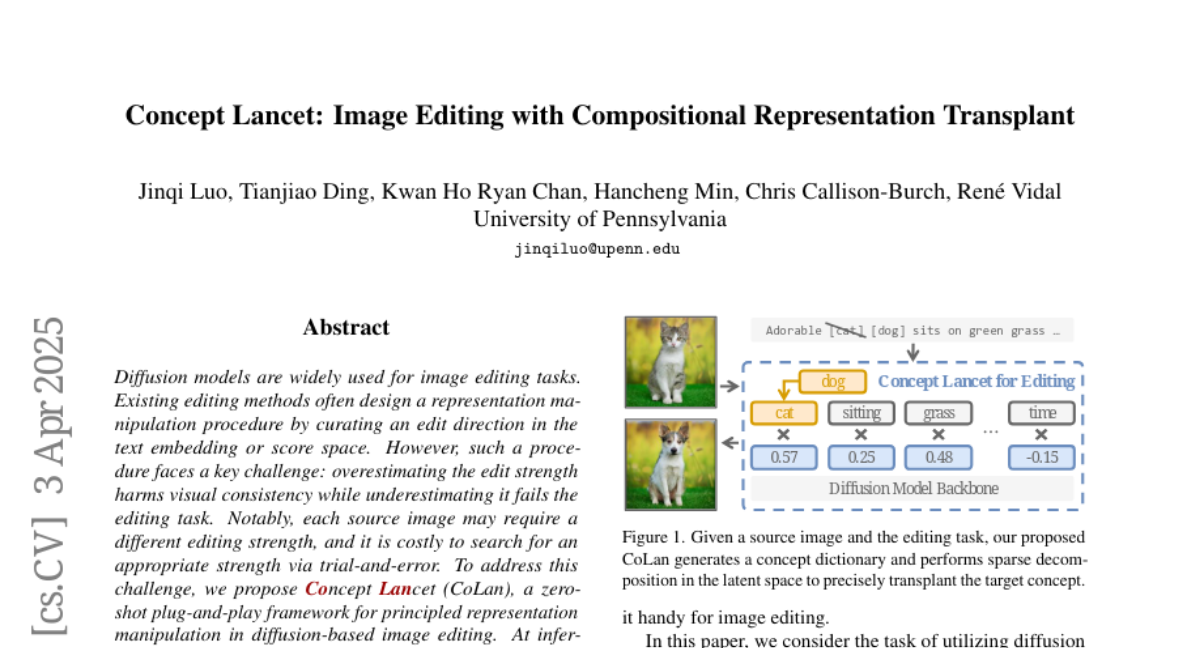

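Concept Lancet represents each source image as a sparse combination of visual concepts drawn from a large concept dictionary (built from the CoLan-150K dataset). This tells it how strongly each concept is actually present in the photo, so it can swap in, add, or remove only the needed concept at a matching strength, like replacing a single puzzle piece.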

Why does it matter?

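Because CoLan is zero-shot and plug-and-play, it can be attached to existing diffusion-based editors. This could help artists and designers make precise edits with less trial and error while keeping photos consistent, for example in product photos or social media content.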

Abstract

Diffusion models are widely used for image editing tasks. Existing editing methods often design a representation manipulation procedure by curating an edit direction in the text embedding or score space. However, such a procedure faces a key challenge: overestimating the edit strength harms visual consistency while underestimating it fails the editing task. Notably, each source image may require a different editing strength, and it is costly to search for an appropriate strength via trial-and-error. To address this challenge, we propose Concept Lancet (CoLan), a zero-shot plug-and-play framework for principled representation manipulation in diffusion-based image editing. At inference time, we decompose the source input in the latent (text embedding or diffusion score) space as a sparse linear combination of the representations of the collected visual concepts. This allows us to accurately estimate the presence of concepts in each image, which informs the edit. Based on the editing task (replace/add/remove), we perform a customized concept transplant process to impose the corresponding editing direction. To sufficiently model the concept space, we curate a conceptual representation dataset, CoLan-150K, which contains diverse descriptions and scenarios of visual terms and phrases for the latent dictionary. Experiments on multiple diffusion-based image editing baselines show that methods equipped with CoLan achieve state-of-the-art performance in editing effectiveness and consistency preservation.
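The decompose-then-transplant idea in the abstract can be illustrated with a small NumPy sketch. This is not the authors' implementation: the random dictionary, the ISTA solver, the penalty `lam`, and the helper names `sparse_decompose` and `transplant_replace` are all assumptions made for illustration. It only shows how a sparse coefficient estimated for a source concept could set the strength of a "replace" edit in a latent vector space.

```python
import numpy as np

def sparse_decompose(x, D, lam=0.05, n_iter=500):
    """Estimate sparse coefficients w with x ~ D @ w (lasso solved by ISTA).

    Minimizes 0.5 * ||x - D w||^2 + lam * ||w||_1. Hypothetical helper.
    """
    step = 1.0 / np.linalg.norm(D, 2) ** 2  # 1 / Lipschitz constant of the gradient
    w = np.zeros(D.shape[1])
    for _ in range(n_iter):
        z = w - step * (D.T @ (D @ w - x))  # gradient step on the quadratic term
        w = np.sign(z) * np.maximum(np.abs(z) - step * lam, 0.0)  # soft threshold
    return w

def transplant_replace(x, D, w, src_idx, tgt_vec):
    """Swap the source concept atom for a target vector.

    The estimated coefficient w[src_idx] is reused as the edit strength.
    """
    return x + w[src_idx] * (tgt_vec - D[:, src_idx])

# Toy data (assumption): 20 random unit-norm "concept" atoms in 64 dimensions.
rng = np.random.default_rng(0)
d, n = 64, 20
D = rng.standard_normal((d, n))
D /= np.linalg.norm(D, axis=0)
tgt_vec = rng.standard_normal(d)
tgt_vec /= np.linalg.norm(tgt_vec)

# Source representation built from two concepts (indices 3 and 7).
w_true = np.zeros(n)
w_true[3], w_true[7] = 1.5, -0.8
x = D @ w_true

w = sparse_decompose(x, D)
x_edit = transplant_replace(x, D, w, src_idx=3, tgt_vec=tgt_vec)
```

In this toy setting the edit strength is read off from the decomposition of each source vector rather than tuned by hand, which mirrors the motivation stated in the abstract. A real system would use text-embedding or score-space representations in place of the random vectors.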