Concept Steerers: Leveraging K-Sparse Autoencoders for Controllable Generations

Dahye Kim, Deepti Ghadiyaram

2025-02-05

Summary

This paper talks about Concept Steerers, a method that uses k-sparse autoencoders (k-SAEs) to control what text-to-image AI models generate. It allows the models to avoid creating unsafe or unethical content, like nudity, and also lets users introduce or adjust specific styles or features in generated images.

What's the problem?

Text-to-image AI models sometimes create harmful or inappropriate content, and fixing this usually requires retraining the models, which is slow and expensive. Current methods can also reduce the quality of the generated images and are not very flexible.

What's the solution?

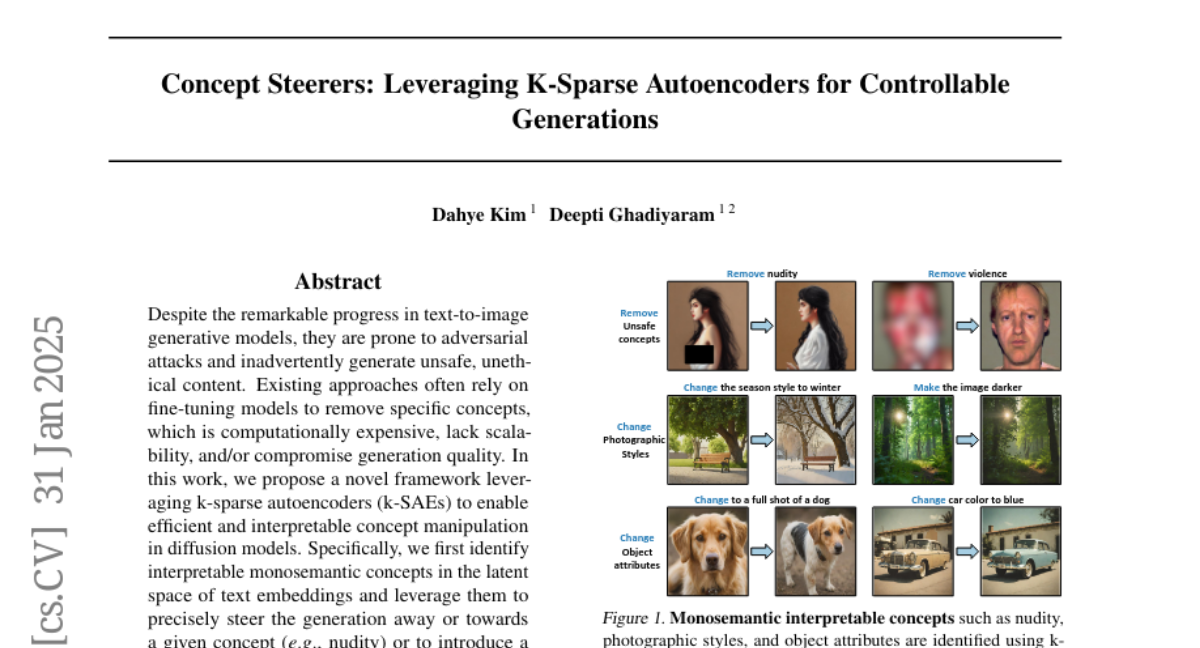

The researchers developed Concept Steerers, which use k-sparse autoencoders to identify specific concepts in the model's memory and steer the image generation process. This approach can block unwanted concepts or add new ones without retraining the base model. It is faster, keeps image quality high, and works well even against tricky prompts designed to bypass safety measures.

Why it matters?

This method is important because it makes text-to-image AI safer and more reliable while still being efficient and creative. It allows for better control over what these models produce, making them more suitable for real-world applications where safety and precision are critical.

Abstract

Despite the remarkable progress in text-to-image generative models, they are prone to adversarial attacks and inadvertently generate unsafe, unethical content. Existing approaches often rely on fine-tuning models to remove specific concepts, which is computationally expensive, lack scalability, and/or compromise generation quality. In this work, we propose a novel framework leveraging k-sparse autoencoders (k-SAEs) to enable efficient and interpretable concept manipulation in diffusion models. Specifically, we first identify interpretable monosemantic concepts in the latent space of text embeddings and leverage them to precisely steer the generation away or towards a given concept (e.g., nudity) or to introduce a new concept (e.g., photographic style). Through extensive experiments, we demonstrate that our approach is very simple, requires no retraining of the base model nor LoRA adapters, does not compromise the generation quality, and is robust to adversarial prompt manipulations. Our method yields an improvement of 20.01% in unsafe concept removal, is effective in style manipulation, and is sim5x faster than current state-of-the-art.