ConsisLoRA: Enhancing Content and Style Consistency for LoRA-based Style Transfer

Bolin Chen, Baoquan Zhao, Haoran Xie, Yi Cai, Qing Li, Xudong Mao

2025-03-14

Summary

This paper introduces ConsisLoRA, a LoRA-based (Low-Rank Adaptation) style transfer method that trains the LoRA weights to predict the original image rather than noise, with the goal of improving content and style consistency and reducing content leakage.

What's the problem?

LoRA-based methods can capture the style of a single reference image, but they still suffer from content inconsistency, style misalignment, and content leakage. The paper analyzes the limitations of the standard diffusion parameterization, which learns to predict noise, in the context of style transfer.

What's the solution?

ConsisLoRA optimizes the LoRA weights to predict the original image instead of noise. It adds a two-step training strategy that decouples the learning of content and style from the reference image, a stepwise loss transition strategy that captures both the global structure and the local details of the content image, and an inference guidance method that gives continuous control over content and style strengths.

Why it matters?

In the authors' qualitative and quantitative evaluations, the method significantly improves content and style consistency while reducing content leakage. The inference guidance also lets users adjust content and style strengths continuously at inference time rather than retraining.

Abstract

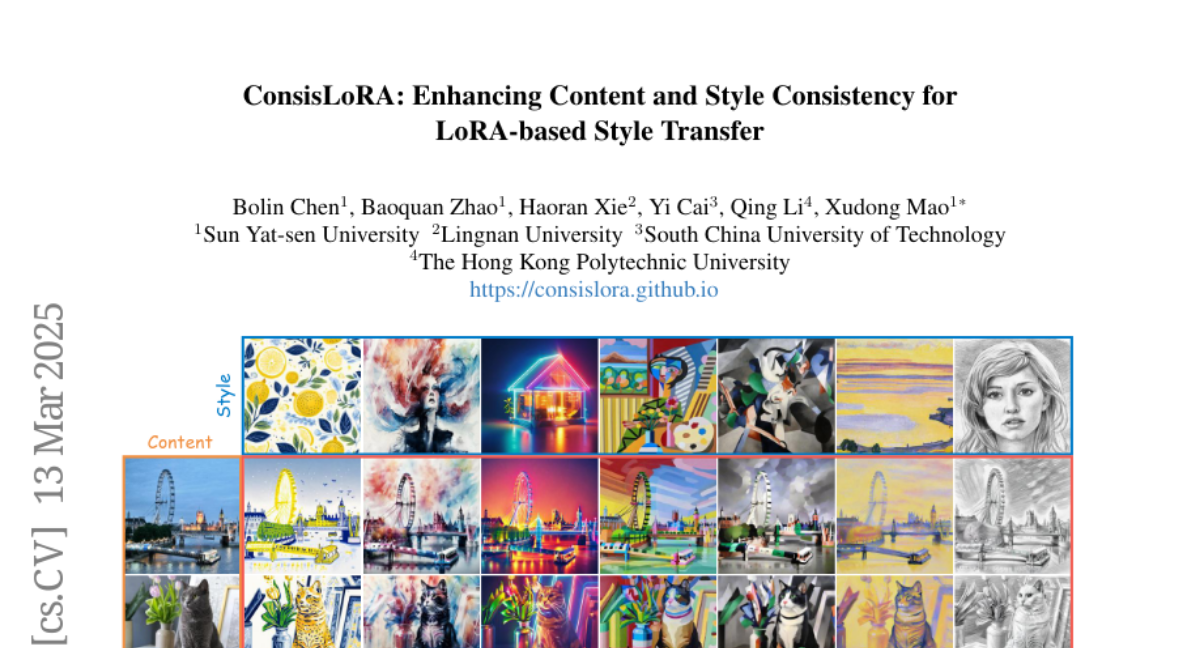

Style transfer involves transferring the style from a reference image to the content of a target image. Recent advancements in LoRA-based (Low-Rank Adaptation) methods have shown promise in effectively capturing the style of a single image. However, these approaches still face significant challenges such as content inconsistency, style misalignment, and content leakage. In this paper, we comprehensively analyze the limitations of the standard diffusion parameterization, which learns to predict noise, in the context of style transfer. To address these issues, we introduce ConsisLoRA, a LoRA-based method that enhances both content and style consistency by optimizing the LoRA weights to predict the original image rather than noise. We also propose a two-step training strategy that decouples the learning of content and style from the reference image. To effectively capture both the global structure and local details of the content image, we introduce a stepwise loss transition strategy. Additionally, we present an inference guidance method that enables continuous control over content and style strengths during inference. Through both qualitative and quantitative evaluations, our method demonstrates significant improvements in content and style consistency while effectively reducing content leakage.
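To make the difference between the two prediction targets concrete, the following is a minimal sketch, not the authors' implementation. It assumes the standard DDPM forward process, x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε, and compares a plain mean-squared error on the noise with the same error measured on the implied clean image. The function names, the toy 16-element "image", and the chosen ᾱ values are all illustrative assumptions; the abstract does not specify the paper's loss, noise schedule, or model.

```python
import math
import random

def add_noise(x0, eps, alpha_bar):
    """Forward diffusion (assumed DDPM form): x_t = sqrt(a)*x0 + sqrt(1-a)*eps."""
    a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [a * x + b * e for x, e in zip(x0, eps)]

def predict_x0(x_t, eps_hat, alpha_bar):
    """Clean-image estimate implied by a noise prediction eps_hat."""
    a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [(xt - b * e) / a for xt, e in zip(x_t, eps_hat)]

def mse(u, v):
    """Mean squared error between two equal-length vectors."""
    return sum((p - q) ** 2 for p, q in zip(u, v)) / len(u)

def losses(x0, eps, eps_hat, alpha_bar):
    """Return (noise-space loss, image-space loss) for one noise level."""
    x_t = add_noise(x0, eps, alpha_bar)
    x0_hat = predict_x0(x_t, eps_hat, alpha_bar)
    return mse(eps, eps_hat), mse(x0, x0_hat)

# Toy data: a hypothetical 16-value "image" and an imperfect noise prediction.
rng = random.Random(0)
x0 = [rng.uniform(-1, 1) for _ in range(16)]
eps = [rng.gauss(0, 1) for _ in range(16)]
eps_hat = [e + 0.1 * rng.gauss(0, 1) for e in eps]

# alpha_bar near 1 means little noise; near 0 means almost pure noise.
for alpha_bar in (0.99, 0.5, 0.01):
    eps_loss, x0_loss = losses(x0, eps, eps_hat, alpha_bar)
    print(f"alpha_bar={alpha_bar}: eps_loss={eps_loss:.5f} "
          f"x0_loss={x0_loss:.5f} ratio={x0_loss / eps_loss:.4f}")
```

Under this assumed parameterization, the image-space error equals the noise-space error scaled by (1 − ᾱ_t)/ᾱ_t, so the same mistake in predicted noise costs far more in image space at high noise levels than at low ones. This only illustrates how the two targets weight timesteps differently; it does not reproduce the paper's analysis or its two-step training and loss transition strategies.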