Could Thinking Multilingually Empower LLM Reasoning?

Changjiang Gao, Xu Huang, Wenhao Zhu, Shujian Huang, Lei Li, Fei Yuan

2025-04-21

Summary

This paper asks whether letting large language models think and reason in multiple languages, instead of just English, can make them better at solving complicated problems.

What's the problem?

Most language models are trained and tested mainly in English, so it is unclear whether reasoning in other languages could help them think more clearly or reach better answers, especially on tricky reasoning tasks.

What's the solution?

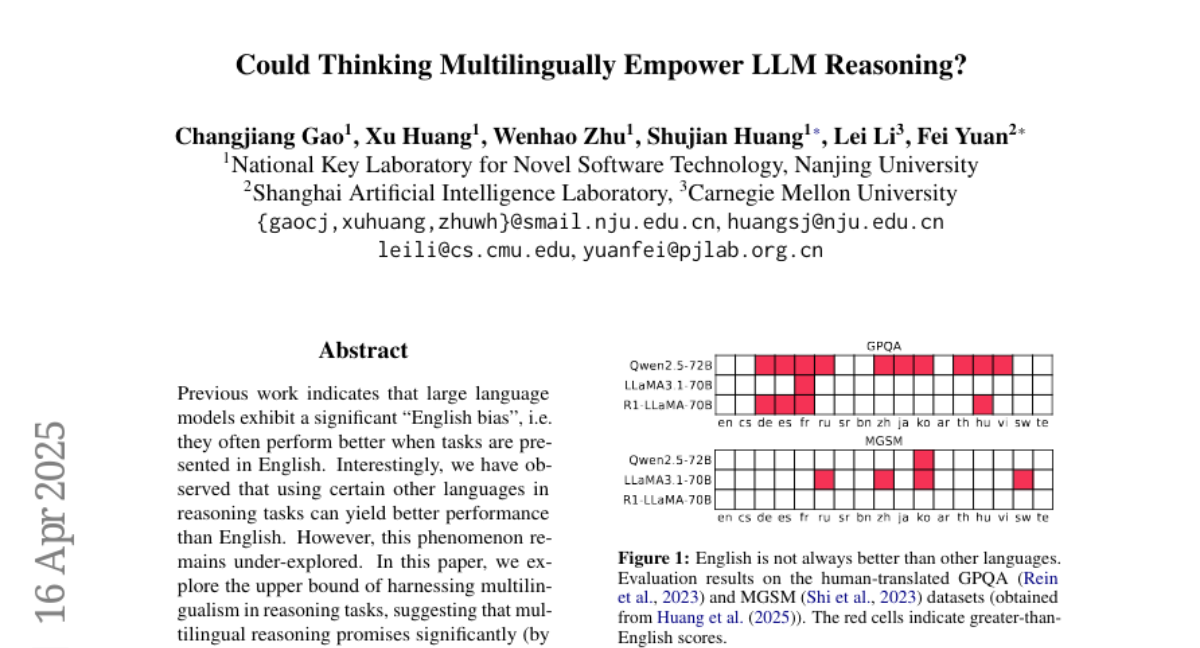

The researchers tested large language models by having them reason in different languages, not just English. They found that when the models drew on their multilingual skills, they often solved complex problems better than when they reasoned only in English.

Why does it matter?

This matters because it suggests that letting AI reason in multiple languages could make it more capable and flexible, helping it solve a wider range of problems and making it more useful for people around the world.

Abstract

Multilingual reasoning in large language models shows significant potential for improved performance on reasoning tasks compared to English-only reasoning.