CS-Sum: A Benchmark for Code-Switching Dialogue Summarization and the Limits of Large Language Models

Sathya Krishnan Suresh, Tanmay Surana, Lim Zhi Hao, Eng Siong Chng

2025-05-21

Summary

This paper talks about CS-Sum, a new test to see how well AI models can summarize conversations where people switch between different languages, which is called code-switching.

What's the problem?

The problem is that while large language models seem to do well on regular tests, they often make small but important mistakes when trying to summarize conversations that mix languages, because they haven't been trained enough on this kind of data.

What's the solution?

To address this, the researchers created a special benchmark focused on code-switching dialogues, which helps show where the AI models are struggling and why they need more specific training with mixed-language conversations.

Why it matters?

This matters because lots of people around the world switch between languages when they talk, so making AI better at understanding and summarizing these conversations will help technology work better for everyone.

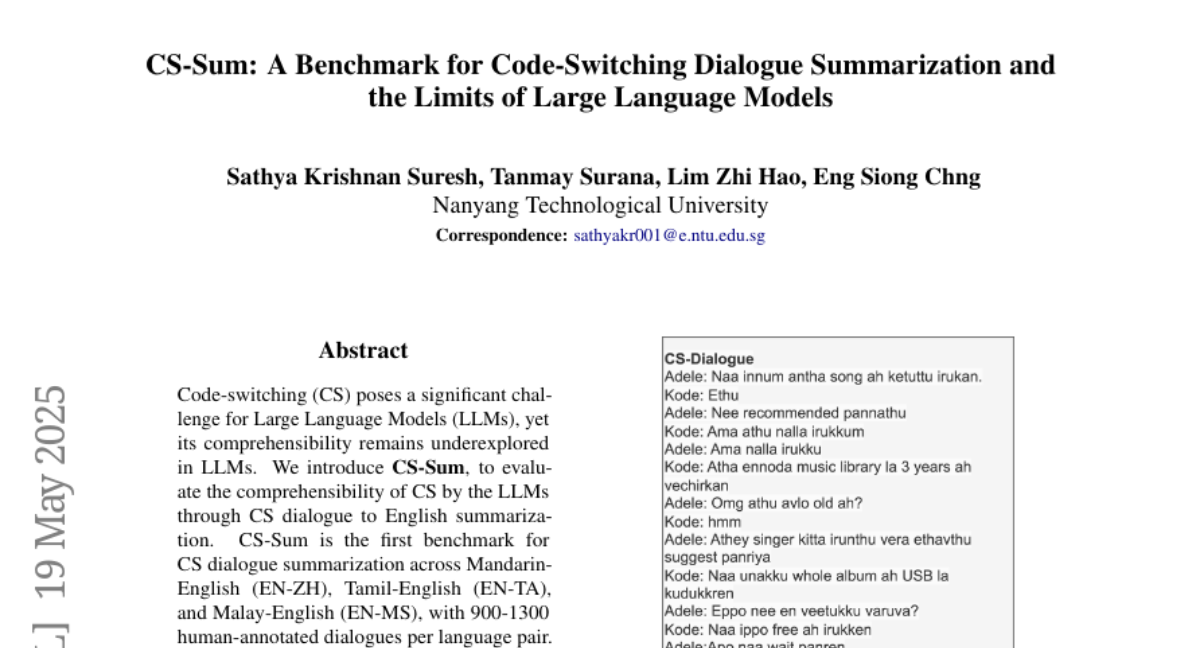

Abstract

LLMs exhibit high automated metric scores but make subtle errors in code-switching dialogue summarization, highlighting the need for specialized training on CS data.