DASH: Detection and Assessment of Systematic Hallucinations of VLMs

Maximilian Augustin, Yannic Neuhaus, Matthias Hein

2025-04-03

Summary

This paper is about finding and fixing a common problem in AI models that use both images and text: they sometimes "hallucinate" objects that aren't really there.

What's the problem?

These models sometimes claim to see objects that are not actually in an image. Existing benchmarks measure this with relatively small, labeled datasets, which makes it hard to test at scale in real-world situations or to uncover errors that occur systematically.

What's the solution?

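The researchers created a system called DASH that automatically searches real-world images for cases where a model reports an object that is not there, and groups similar failure cases into clusters. One component, DASH-OPT, generates images designed to mislead the model and uses them to retrieve similar real images. The researchers then show that fine-tuning a model on the images found this way reduces its hallucinations.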

Why does it matter?

This work matters because it can help make AI models that use images and text more reliable and accurate, which is important for applications like self-driving cars and medical diagnosis.

Abstract

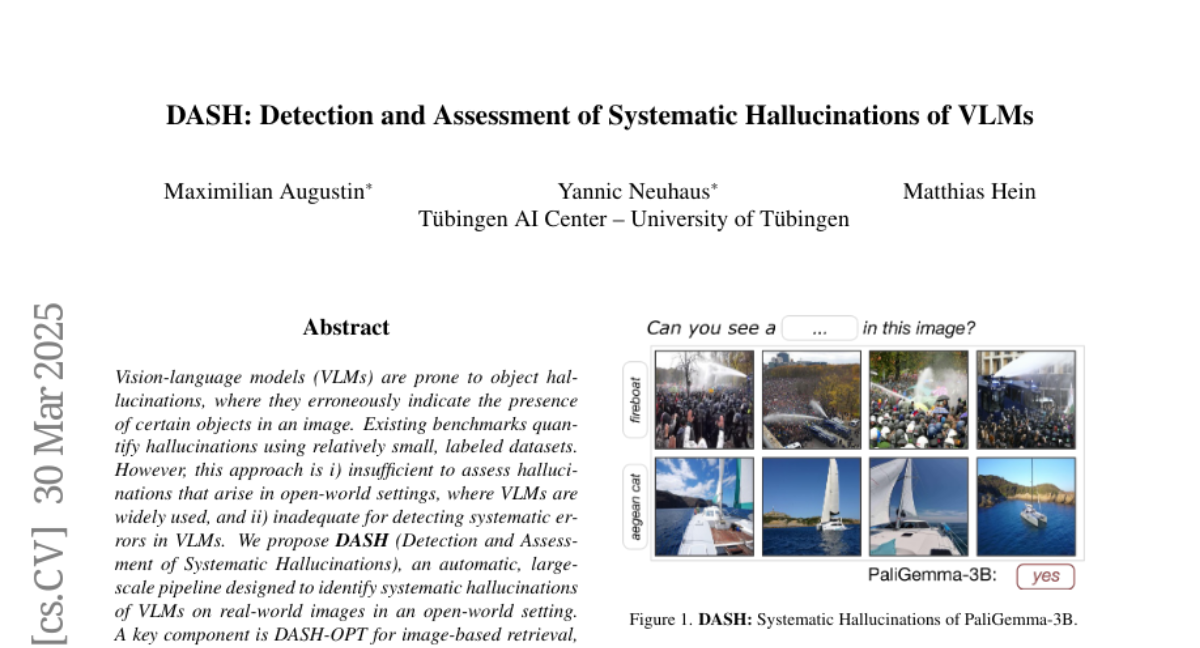

Vision-language models (VLMs) are prone to object hallucinations, where they erroneously indicate the presence of certain objects in an image. Existing benchmarks quantify hallucinations using relatively small, labeled datasets. However, this approach is i) insufficient to assess hallucinations that arise in open-world settings, where VLMs are widely used, and ii) inadequate for detecting systematic errors in VLMs. We propose DASH (Detection and Assessment of Systematic Hallucinations), an automatic, large-scale pipeline designed to identify systematic hallucinations of VLMs on real-world images in an open-world setting. A key component is DASH-OPT for image-based retrieval, where we optimize over the "natural image manifold" to generate images that mislead the VLM. The output of DASH consists of clusters of real and semantically similar images for which the VLM hallucinates an object. We apply DASH to PaliGemma and two LLaVA-NeXT models across 380 object classes and, in total, find more than 19k clusters with 950k images. We study the transfer of the identified systematic hallucinations to other VLMs and show that fine-tuning PaliGemma with the model-specific images obtained with DASH mitigates object hallucinations. Code and data are available at https://YanNeu.github.io/DASH.
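
To make the described output more concrete, the following is a minimal sketch of the final step only: keeping images on which the VLM reports an object that is absent, and grouping them by semantic similarity. It is not the authors' implementation. The `Candidate` record, its fields, the greedy grouping rule, and the `threshold` value are all hypothetical, and the sketch assumes the VLM answer, an independent check of whether the object is present, and an image embedding have already been computed elsewhere.

```python
from __future__ import annotations

from dataclasses import dataclass
from math import sqrt


@dataclass
class Candidate:
    """Hypothetical record for one retrieved real image and one object class."""
    image_id: str
    embedding: list[float]    # assumed precomputed image embedding
    vlm_says_present: bool    # assumed VLM answer to "is the object in the image?"
    object_present: bool      # assumed independent check that the object is there


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def hallucination_clusters(
    candidates: list[Candidate], threshold: float = 0.9
) -> list[list[Candidate]]:
    """Keep hallucinated images and greedily group them by similarity.

    An image counts as a hallucination when the VLM reports the object
    but the independent check says it is absent. Each kept image joins
    the first cluster whose first member is similar enough, otherwise it
    starts a new cluster. This greedy rule is an illustrative stand-in,
    not the clustering method used in the paper.
    """
    hallucinated = [
        c for c in candidates if c.vlm_says_present and not c.object_present
    ]
    clusters: list[list[Candidate]] = []
    for cand in hallucinated:
        for cluster in clusters:
            if cosine(cand.embedding, cluster[0].embedding) >= threshold:
                cluster.append(cand)
                break
        else:
            clusters.append([cand])
    return clusters
```

A cluster with many members then points to a systematic failure (many similar real images trigger the same false object) rather than a one-off mistake, which is the distinction the abstract draws against small labeled benchmarks.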