Date Fragments: A Hidden Bottleneck of Tokenization for Temporal Reasoning

Gagan Bhatia, Maxime Peyrard, Wei Zhao

2025-05-23

Summary

This paper examines how tokenization, the step in which a language model breaks text into smaller pieces, can fragment dates and make it harder for the model to understand and reason about time.

What's the problem?

When language models read dates, their tokenizers often split them into smaller fragments, which can confuse the model and make it less accurate when answering questions that involve time or dates.
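
To make the splitting concrete, here is a minimal sketch of a toy greedy longest-match tokenizer. It is not the tokenizer of any real model: the `greedy_tokenize` function and the `TOY_VOCAB` vocabulary are both invented for illustration, and production tokenizers typically use learned schemes such as byte-pair encoding. The sketch only shows how a date string can end up in pieces that cut across the year, month, and day.

```python
def greedy_tokenize(text, vocab):
    """Split text by repeatedly taking the longest vocabulary entry that matches.

    Characters with no matching entry are emitted as single-character tokens.
    """
    max_len = max(len(piece) for piece in vocab)
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, then fall back to shorter ones.
        for size in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + size]
            if piece in vocab or size == 1:
                tokens.append(piece)
                i += size
                break
    return tokens


# Hypothetical vocabulary, made up for this example only.
TOY_VOCAB = {"202", "505", "23", "20", "25", "05", "-"}

compact = greedy_tokenize("20250523", TOY_VOCAB)
dashed = greedy_tokenize("2025-05-23", TOY_VOCAB)

print(compact)  # ['202', '505', '23']
print(dashed)   # ['202', '5', '-', '05', '-', '23']
```

In both outputs the year 2025 never appears as a single token, and in the compact form the fragment "505" mixes the last digit of the year with the month. A model reading these tokens has to reassemble the date before it can reason about it.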

What's the solution?

The researchers created a new set of benchmarks called DateAugBench to measure how this splitting of dates affects a model's performance. They found that larger models compensate for the fragmentation more effectively, but it remains a challenge for accurate temporal reasoning.

Why does it matter?

Understanding dates and time is important for many tasks, such as planning, scheduling, and answering questions about history, so addressing this issue could make AI models more reliable in real-world situations.

Abstract

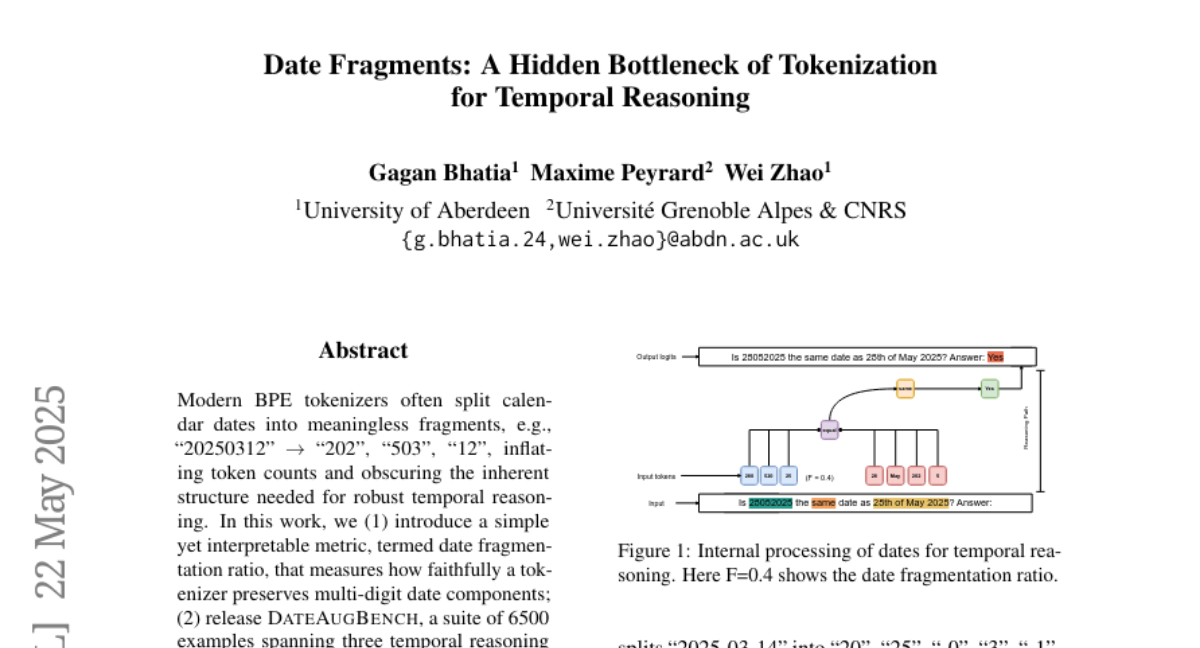

New DateAugBench benchmarks reveal how modern tokenizers fragment dates and how this fragmentation affects the accuracy of temporal reasoning in large language models, which compensate for it more effectively as they grow larger.