DateLogicQA: Benchmarking Temporal Biases in Large Language Models

Gagan Bhatia, MingZe Tang, Cristina Mahanta, Madiha Kazi

2024-12-20

Summary

This paper talks about DateLogicQA, a new benchmark designed to test how well large language models (LLMs) can understand and reason about dates in different formats and contexts. It includes 190 questions that challenge these models in various ways.

What's the problem?

Understanding and reasoning about dates can be complicated for AI models. Existing methods often struggle with biases that affect how they interpret and respond to questions about time, which can lead to incorrect or inconsistent answers. There is a need for a structured way to evaluate these capabilities in LLMs.

What's the solution?

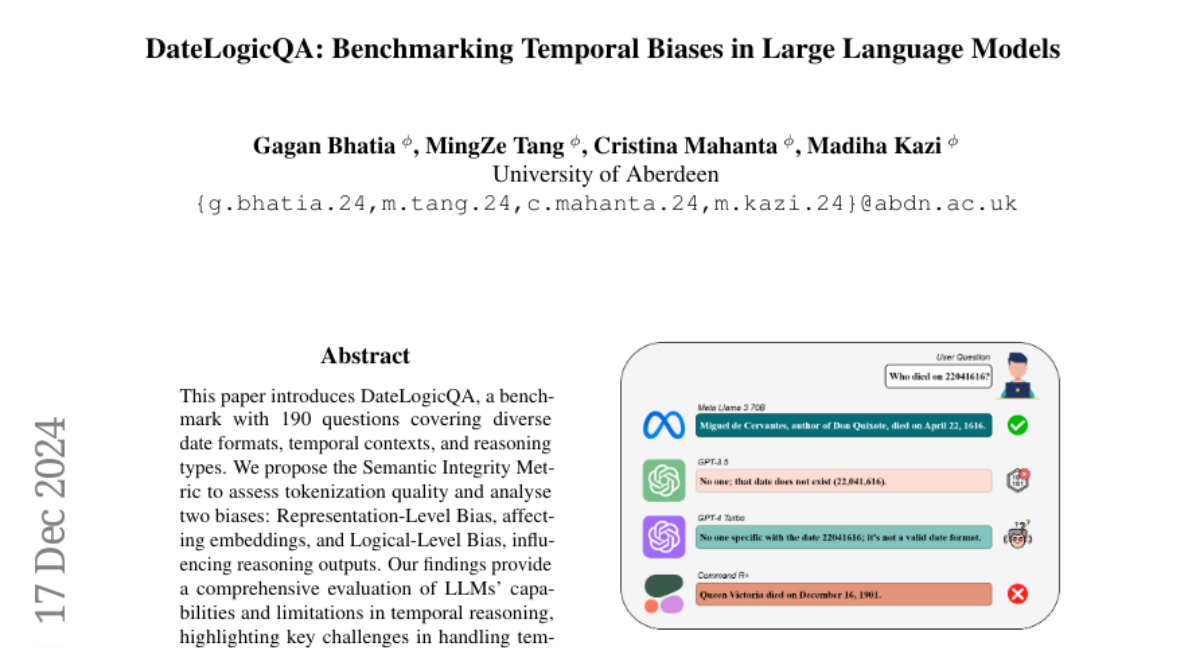

DateLogicQA addresses this problem by providing a dataset of questions that cover different date formats (like 'January 1, 2020' vs. '01/01/20') and temporal contexts (past, present, future). It introduces the Semantic Integrity Metric to assess how well the models tokenize and understand dates. The research also analyzes two specific biases: Representation-Level Bias, which affects how dates are represented in the model, and Logical-Level Bias, which influences how the model reasons about those dates.

Why it matters?

This research is important because it helps identify the strengths and weaknesses of AI models when dealing with temporal reasoning. By understanding these biases, developers can work on improving AI systems, making them more reliable for tasks that involve understanding time-related information, which is crucial in many real-world applications like scheduling, historical analysis, and event planning.

Abstract

This paper introduces DateLogicQA, a benchmark with 190 questions covering diverse date formats, temporal contexts, and reasoning types. We propose the Semantic Integrity Metric to assess tokenization quality and analyse two biases: Representation-Level Bias, affecting embeddings, and Logical-Level Bias, influencing reasoning outputs. Our findings provide a comprehensive evaluation of LLMs' capabilities and limitations in temporal reasoning, highlighting key challenges in handling temporal data accurately. The GitHub repository for our work is available at https://github.com/gagan3012/EAIS-Temporal-Bias