DeCLIP: Decoupled Learning for Open-Vocabulary Dense Perception

Junjie Wang, Bin Chen, Yulin Li, Bin Kang, Yichi Chen, Zhuotao Tian

2025-05-15

Summary

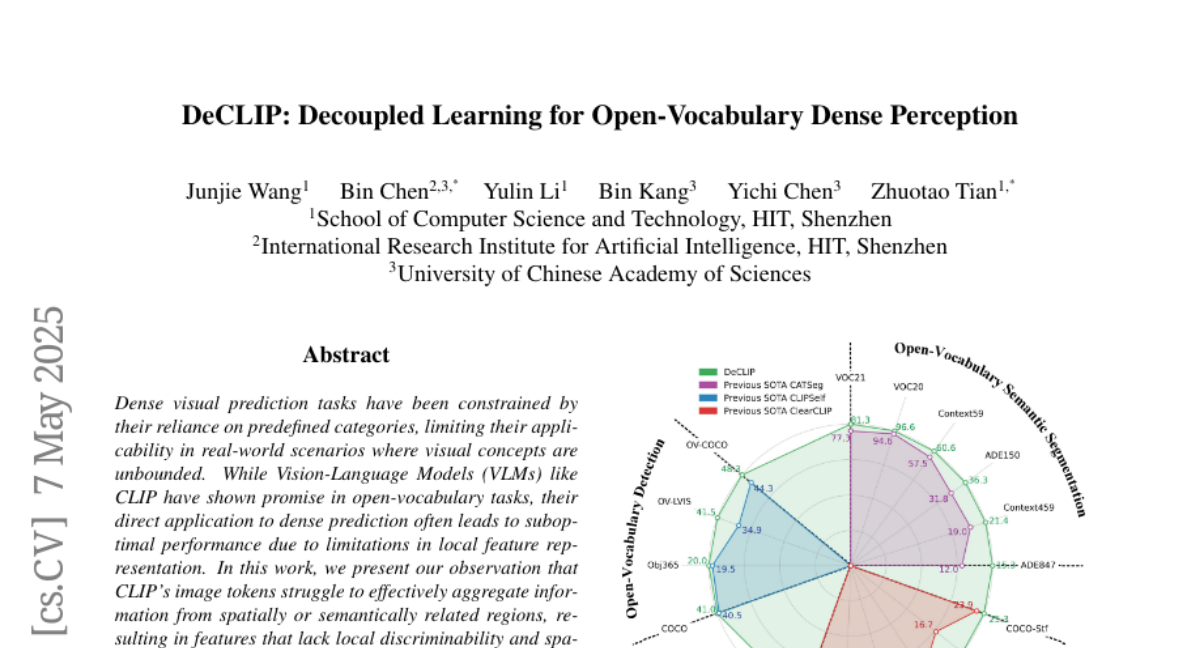

This paper talks about DeCLIP, a new approach that improves how AI can recognize and describe many different objects in images, even if it hasn't seen them before, by teaching the model to separate what something is from where it is or how it appears in the picture.

What's the problem?

The problem is that current AI models, like CLIP, sometimes get confused when trying to identify and label lots of different things in detailed images, especially when the objects are new or appear in unusual places or situations.

What's the solution?

The researchers improved the model by splitting the learning process into two parts: one part focuses on understanding the actual content (what the object is), and the other part focuses on the context (where it is or what's around it). This makes the model much better at tasks like finding and naming objects or understanding the meaning of different areas in an image, even with objects it hasn't seen before.

Why it matters?

This matters because it helps AI systems become more accurate and flexible when analyzing real-world images, which is useful for things like self-driving cars, medical imaging, and any technology that needs to understand complex scenes.

Abstract

DeCLIP enhances CLIP by separating content and context features, improving performance on open-vocabulary dense prediction tasks like object detection and semantic segmentation.