Decomposing MLP Activations into Interpretable Features via Semi-Nonnegative Matrix Factorization

Or Shafran, Atticus Geiger, Mor Geva

2025-06-15

Summary

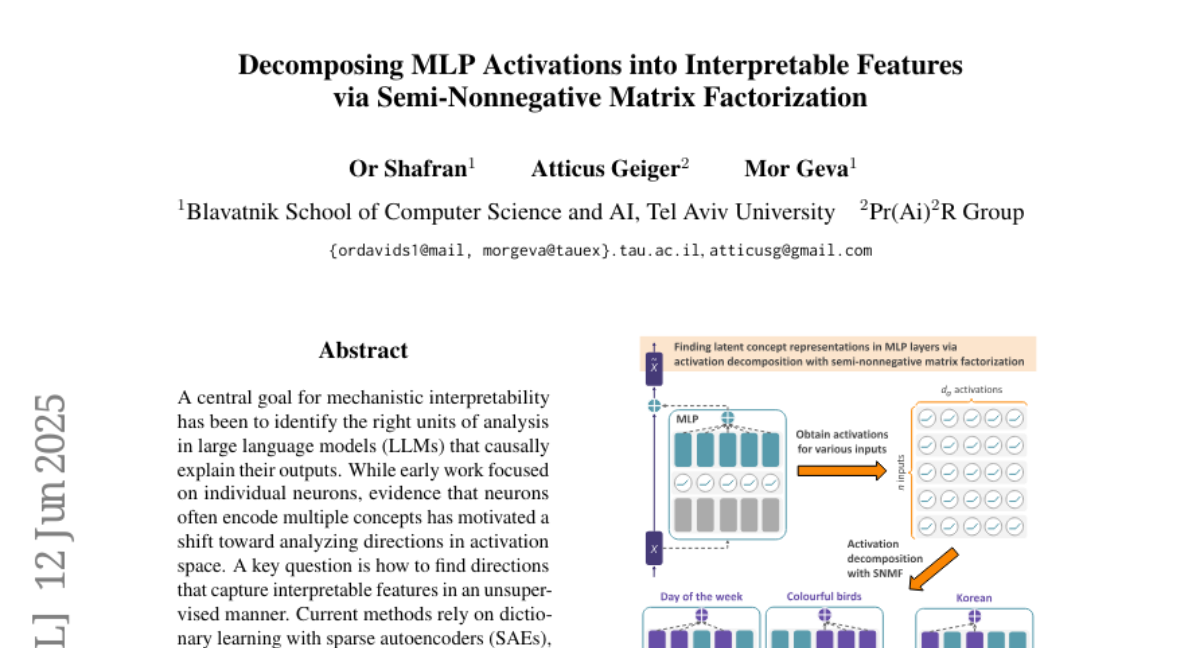

This paper talks about using Semi-Nonnegative Matrix Factorization, or SNMF, to break down the activations inside big language models, specifically in their multilayer perceptrons (MLPs), into understandable features. Instead of using complicated or less clear methods, SNMF helps find parts of the model’s internal activity that line up with concepts humans can interpret, making it easier to understand what the model is really focusing on when it works.

What's the problem?

The problem is that when AI models like large language models make decisions, their internal processes are very complex and hard to interpret. Existing methods for uncovering meaningful features from the model’s activations often don’t align well with concepts humans understand or need lots of extra supervision, making it tough to explain how the model thinks.

What's the solution?

The solution was to apply SNMF directly to the model’s MLP activations, which allows the method to extract clear and interpretable features without requiring extensive supervision. This approach works better than previous methods like sparse autoencoders or supervised methods by focusing on nonnegative components, which makes the features more meaningful and closely connected to human-understandable concepts. It also performs well in tests measuring how well these features causally explain the model’s behaviour.

Why it matters?

This matters because understanding what parts of an AI model correspond to meaningful concepts helps researchers and developers make AI systems more transparent and trustworthy. By using SNMF to get interpretable features, it becomes easier to explain, debug, and improve AI models, which is important for safely deploying AI in real-world applications.

Abstract

SNMF is used to identify interpretable features in LLMs by directly decomposing MLP activations, outperforming SAEs and supervised methods in causal evaluations and aligning with human-interpretable concepts.