Decompositional Neural Scene Reconstruction with Generative Diffusion Prior

Junfeng Ni, Yu Liu, Ruijie Lu, Zirui Zhou, Song-Chun Zhu, Yixin Chen, Siyuan Huang

2025-03-20

Summary

This paper describes how to build 3D models of scenes, such as rooms, by breaking them down into individual objects and using a generative AI model (a diffusion model) to fill in details that the input photos do not show.

What's the problem?

Creating 3D models of scenes is hard, especially when you only have a few pictures to work with, because some parts of the scene are hidden behind other objects or seen from too few angles to pin down their shape and appearance.

What's the solution?

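The researchers propose DP-Recon, which reconstructs each object in the scene separately and uses a pretrained diffusion model to guess what the missing parts look like. A visibility-based weighting lets the input pictures dominate wherever they are informative, so the AI's guesses fill in mainly the regions the pictures say little about.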

Why does it matter?

This work matters because it makes it easier to create realistic, editable 3D models of scenes from just a few photos, which can be used in video games, movie visual effects, and other applications.

Abstract

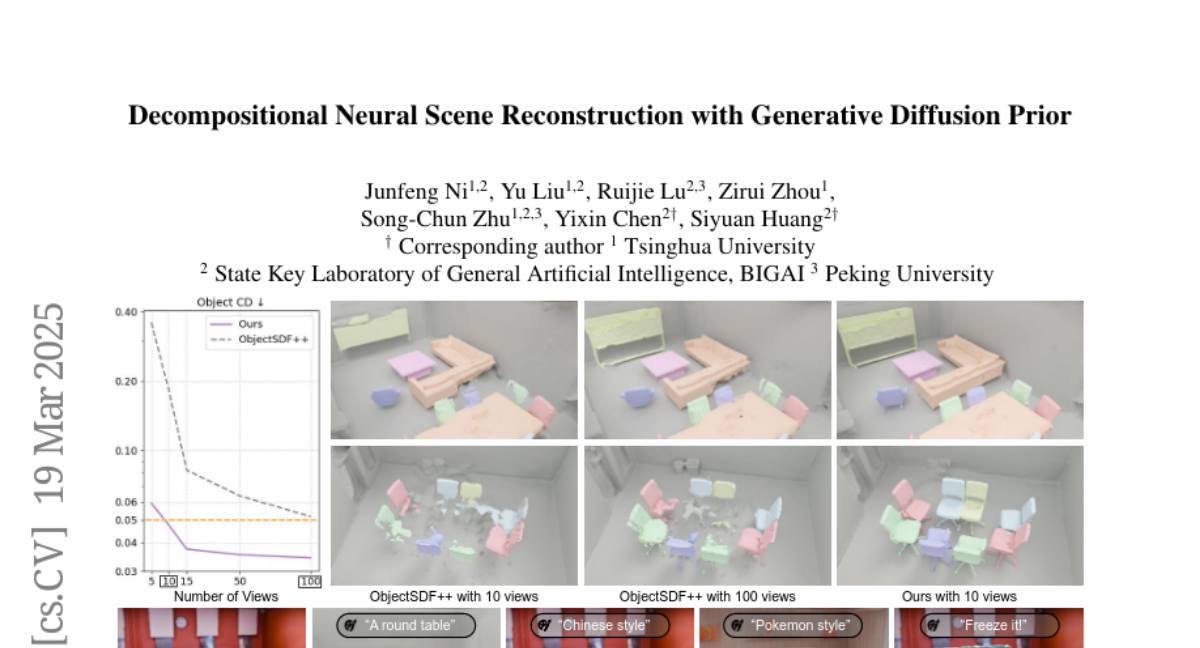

Decompositional reconstruction of 3D scenes, with complete shapes and detailed texture of all objects within, is intriguing for downstream applications but remains challenging, particularly with sparse views as input. Recent approaches incorporate semantic or geometric regularization to address this issue, but they suffer significant degradation in underconstrained areas and fail to recover occluded regions. We argue that the key to solving this problem lies in supplementing missing information for these areas. To this end, we propose DP-Recon, which employs diffusion priors in the form of Score Distillation Sampling (SDS) to optimize the neural representation of each individual object under novel views. This provides additional information for the underconstrained areas, but directly incorporating the diffusion prior raises potential conflicts between the reconstruction and generative guidance. Therefore, we further introduce a visibility-guided approach to dynamically adjust the per-pixel SDS loss weights. Together, these components enhance both geometry and appearance recovery while remaining faithful to the input images. Extensive experiments on Replica and ScanNet++ demonstrate that our method significantly outperforms state-of-the-art (SOTA) methods. Notably, it achieves better object reconstruction with 10 input views than the baselines achieve with 100 views. Our method enables seamless text-based editing of geometry and appearance through SDS optimization and produces decomposed object meshes with detailed UV maps that support photorealistic visual effects (VFX) editing. The project page is available at https://dp-recon.github.io/.
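The abstract combines two mechanisms: SDS, which turns a frozen diffusion model's noise prediction into a gradient on a rendered novel view, and visibility-guided per-pixel weights on that SDS loss. The following is a minimal NumPy sketch of how per-pixel weighting could be applied to the standard SDS gradient from DreamFusion, w(t)·(ε̂ − ε)·∂x/∂θ, stopping at the gradient with respect to the rendered view x. The mapping weight = w_min + (1 − w_min)·(1 − visibility), the parameter w_min, and all function names are hypothetical illustrations, not DP-Recon's actual formulation. The random arrays stand in for a real diffusion model's outputs.

```python
import numpy as np


def sds_residual(eps_pred: np.ndarray, eps: np.ndarray, w_t: float) -> np.ndarray:
    """SDS residual w(t) * (eps_hat - eps), used directly as dL/dx.

    SDS skips the diffusion model's Jacobian, so this residual is treated as
    the gradient of the loss with respect to the rendered image (or latent) x.
    """
    return w_t * (eps_pred - eps)


def visibility_weights(visibility: np.ndarray, w_min: float = 0.0) -> np.ndarray:
    """Hypothetical map from per-pixel visibility in [0, 1] to SDS weights.

    Pixels well observed in the input views (visibility near 1) get a weight
    near w_min; unobserved pixels (visibility near 0) get the full weight 1.
    """
    v = np.clip(visibility, 0.0, 1.0)
    return w_min + (1.0 - w_min) * (1.0 - v)


def weighted_sds_grad(
    eps_pred: np.ndarray,    # (H, W, C) frozen model's noise prediction
    eps: np.ndarray,         # (H, W, C) noise that was added to x
    visibility: np.ndarray,  # (H, W) per-pixel visibility for this novel view
    w_t: float = 1.0,
    w_min: float = 0.0,
) -> np.ndarray:
    """Per-pixel weighted SDS gradient with respect to x, shape (H, W, C)."""
    # (H, W, 1) so the same weight applies to every channel of a pixel
    weights = visibility_weights(visibility, w_min)[..., None]
    return weights * sds_residual(eps_pred, eps, w_t)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    eps = rng.standard_normal((4, 4, 3))
    eps_pred = rng.standard_normal((4, 4, 3))  # stand-in for a diffusion model output
    vis = np.zeros((4, 4))
    vis[:, :2] = 1.0  # left half fully observed in the input views
    grad = weighted_sds_grad(eps_pred, eps, vis, w_t=0.5)
    # Observed pixels receive no generative gradient; unobserved ones do.
    print(np.abs(grad[:, :2]).max(), np.abs(grad[:, 2:]).max())
```

In a full pipeline, this gradient would be backpropagated through the differentiable renderer into each object's neural representation (for example, via a surrogate loss whose gradient equals the weighted residual). Observed pixels would then stay governed mainly by the reconstruction losses, while unobserved ones receive generative guidance. If the diffusion model works in a latent space, the visibility map would also need resizing to the latent resolution, which this sketch does not handle.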