Decoupled Global-Local Alignment for Improving Compositional Understanding

Xiaoxing Hu, Kaicheng Yang, Jun Wang, Haoran Xu, Ziyong Feng, Yupei Wang

2025-04-24

Summary

This paper introduces DeGLA, a framework that helps vision-language models better understand complex images and their descriptions by teaching them to connect both the big picture and the small details between what they see and what they read.

What's the problem?

Current vision-language models often struggle with 'compositional understanding': they have trouble recognizing how the different parts of an image and its description fit together, especially when the scene is complex or unusual.

What's the solution?

The researchers created DeGLA, which separates matching the overall image with the overall text (global alignment) from matching smaller parts of the image with specific words or phrases (local alignment). They also used self-distillation and image-text grounded contrastive losses so that the model learns these connections more deeply. This approach improved the model's results on benchmarks and on zero-shot classification, where it must classify images from categories it was never explicitly trained on.
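To make these ingredients concrete, here is a minimal sketch of a generic contrastive alignment loss combined with a self-distillation term. It is not the paper's actual formulation: the function names, the temperature of 0.07, the squared-distance distillation term, and the `distill_weight` parameter are all assumptions chosen for illustration, and the local (grounded) losses are not modelled here.

```python
import math

def normalize(v):
    # Scale a vector to unit length so dot products are cosine similarities.
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross_entropy_row(logits, target):
    # Numerically stable -log softmax(logits)[target].
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum - logits[target]

def contrastive_loss(image_embs, text_embs, temperature=0.07):
    # Symmetric image-to-text and text-to-image contrastive loss:
    # the i-th image should match the i-th text and no other.
    imgs = [normalize(v) for v in image_embs]
    txts = [normalize(v) for v in text_embs]
    n = len(imgs)
    sim = [[dot(i, t) / temperature for t in txts] for i in imgs]
    i2t = sum(cross_entropy_row(sim[k], k) for k in range(n)) / n
    t2i = sum(
        cross_entropy_row([sim[r][k] for r in range(n)], k) for k in range(n)
    ) / n
    return 0.5 * (i2t + t2i)

def distillation_loss(student_embs, teacher_embs):
    # Assumed form: mean squared distance between normalized student
    # embeddings and those of a frozen copy of the original model.
    total = 0.0
    for s, t in zip(student_embs, teacher_embs):
        s, t = normalize(s), normalize(t)
        total += sum((a - b) ** 2 for a, b in zip(s, t))
    return total / len(student_embs)

def total_loss(image_embs, text_embs, teacher_image_embs, teacher_text_embs,
               distill_weight=1.0):
    # Hypothetical combination: alignment term plus weighted distillation.
    align = contrastive_loss(image_embs, text_embs)
    distill = (distillation_loss(image_embs, teacher_image_embs)
               + distillation_loss(text_embs, teacher_text_embs))
    return align + distill_weight * distill
```

In this sketch the contrastive term pulls matching image-text pairs together, while the distillation term penalizes the fine-tuned model for drifting away from a frozen teacher, which is one common way to preserve previously learned general capabilities.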

Why does it matter?

This matters because better compositional understanding lets AI models interpret and describe real-world images more reliably, even in situations they have not been trained on directly. That can help with applications like image search, automatic descriptions for the visually impaired, and smarter digital assistants.

Abstract

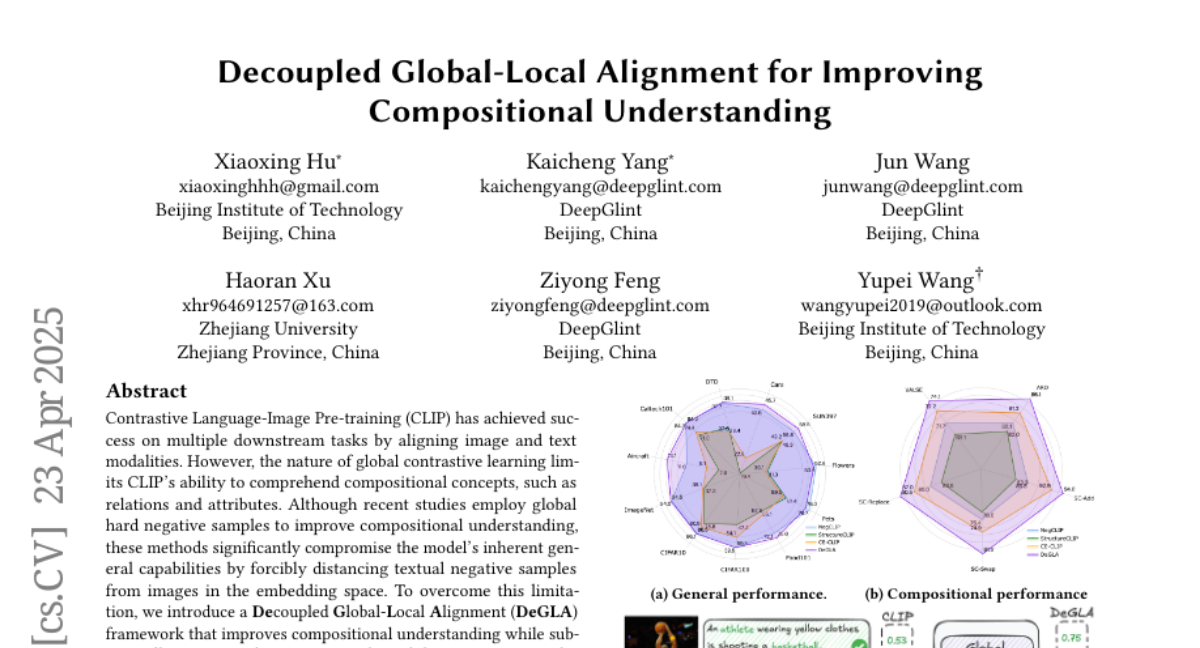

The DeGLA framework enhances compositional understanding in vision-language models through decoupled global-local alignment, self-distillation, and image-text grounded contrastive losses, improving performance on benchmarks and zero-shot classification tasks.