DeepSeek vs. o3-mini: How Well can Reasoning LLMs Evaluate MT and Summarization?

Daniil Larionov, Sotaro Takeshita, Ran Zhang, Yanran Chen, Christoph Leiter, Zhipin Wang, Christian Greisinger, Steffen Eger

2025-04-15

Summary

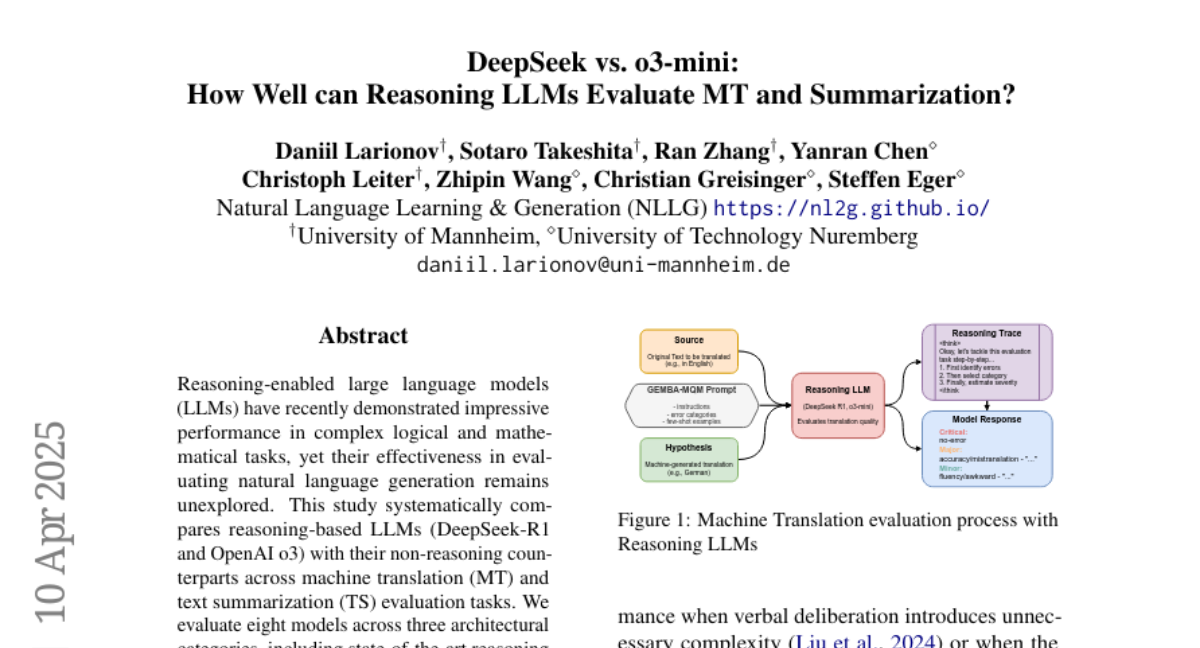

This paper compares two advanced AI models, DeepSeek and o3-mini, to see how well they can evaluate machine translation (MT) and summarization tasks, focusing on their reasoning abilities.

What's the problem?

The problem is that even though reasoning-enabled large language models (LLMs) are supposed to be better at understanding and evaluating complex language tasks, their actual performance varies a lot depending on the specific model and the type of task. It's unclear if these improvements are consistent or significant when it comes to evaluating things like translations or summaries.

What's the solution?

The paper tests and analyzes the performance of DeepSeek and o3-mini on MT and summarization tasks, measuring how well each model reasons through these challenges. It looks at technical aspects like speed, memory use, and how efficiently they handle large amounts of information. DeepSeek is found to be better at complex reasoning tasks, while o3-mini is faster and more consistent for routine tasks. The study also discusses their different architectures and features, like DeepSeek’s ability to handle complex debugging and o3-mini’s quick response times and lower resource needs.

Why it matters?

Understanding the strengths and weaknesses of these reasoning-enabled LLMs matters because it helps researchers and developers choose the right tool for specific language tasks. It also shows that just adding reasoning abilities to LLMs doesn’t guarantee better performance in every situation, so careful evaluation is needed before deciding which model to use for evaluating translations or summaries.

Abstract

Reasoning-enabled LLMs show mixed performance improvements over non-reasoning models in natural language generation tasks, with performance varying by model and task.