Delineate Anything: Resolution-Agnostic Field Boundary Delineation on Satellite Imagery

Mykola Lavreniuk, Nataliia Kussul, Andrii Shelestov, Bohdan Yailymov, Yevhenii Salii, Volodymyr Kuzin, Zoltan Szantoi

2025-04-07

Summary

This paper introduces Delineate Anything, an AI model that automatically draws accurate farm field boundaries on satellite images, even when fields are small, irregular, or located in regions the model was not trained on.

What's the problem?

Current methods struggle to map farm fields accurately because they are trained on small datasets, cope poorly with images of differing resolution, and break down under varied environmental conditions.

What's the solution?

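The team treated the task as instance segmentation, where each field gets its own mask, and built FBIS-22M, a training dataset of 672,909 satellite image patches and about 22.9 million field masks from many countries, at resolutions from 0.25 m to 10 m. They then trained a model on it that learns to spot fields in satellite images regardless of resolution or location.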

Why does it matter?

This helps farmers and governments track crops better, manage land use, and plan farming policies more effectively using up-to-date field maps.

Abstract

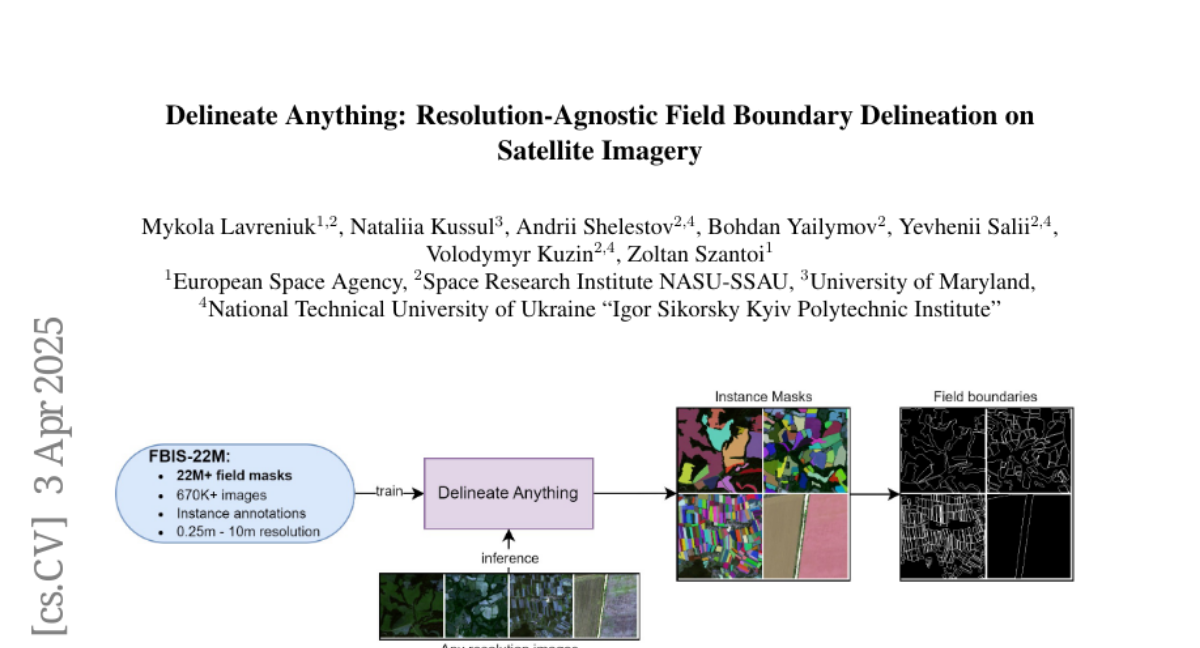

The accurate delineation of agricultural field boundaries from satellite imagery is vital for land management and crop monitoring. However, current methods face challenges due to limited dataset sizes, resolution discrepancies, and diverse environmental conditions. We address this by reformulating the task as instance segmentation and introducing the Field Boundary Instance Segmentation - 22M dataset (FBIS-22M), a large-scale, multi-resolution dataset comprising 672,909 high-resolution satellite image patches (ranging from 0.25 m to 10 m) and 22,926,427 instance masks of individual fields, significantly narrowing the gap between agricultural datasets and those in other computer vision domains. We further propose Delineate Anything, an instance segmentation model trained on our new FBIS-22M dataset. Our proposed model sets a new state-of-the-art, achieving a substantial improvement of 88.5% in mAP@0.5 and 103% in mAP@0.5:0.95 over existing methods, while also demonstrating significantly faster inference and strong zero-shot generalization across diverse image resolutions and unseen geographic regions. Code, pre-trained models, and the FBIS-22M dataset are available at https://lavreniuk.github.io/Delineate-Anything.
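To make the two reported metrics more concrete, the sketch below shows how a predicted field mask might be scored against a reference mask. It is an illustration only, not the authors' evaluation code: it assumes the common COCO-style reading of mAP@0.5:0.95 (IoU thresholds from 0.50 to 0.95 in steps of 0.05), and the 4x4 masks and the names `mask_iou`, `COCO_THRESHOLDS`, and `thresholds_passed` are hypothetical.

```python
# Illustrative sketch only; not taken from the Delineate Anything codebase.

def mask_iou(mask_a, mask_b):
    """Intersection over union of two equal-sized binary masks (2D lists of 0/1)."""
    intersection = 0
    union = 0
    for row_a, row_b in zip(mask_a, mask_b):
        for a, b in zip(row_a, row_b):
            if a and b:
                intersection += 1
            if a or b:
                union += 1
    return intersection / union if union else 0.0


# Assumed COCO-style thresholds behind mAP@0.5:0.95: 0.50, 0.55, ..., 0.95.
COCO_THRESHOLDS = [round(0.5 + 0.05 * i, 2) for i in range(10)]


def thresholds_passed(iou):
    """Thresholds at which a prediction with this IoU counts as a match."""
    return [t for t in COCO_THRESHOLDS if iou >= t]


# Hypothetical reference field (9 pixels) and a prediction missing one pixel.
reference = [
    [1, 1, 1, 0],
    [1, 1, 1, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]
predicted = [
    [1, 1, 1, 0],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [0, 0, 0, 0],
]

iou = mask_iou(reference, predicted)
matched_at = thresholds_passed(iou)
print(f"IoU = {iou:.3f}, matches at {len(matched_at)} of {len(COCO_THRESHOLDS)} thresholds")
```

Under this reading, mAP@0.5 only asks whether a predicted field overlaps its reference with an IoU of at least 0.5, while mAP@0.5:0.95 averages over progressively stricter thresholds and so rewards tightly fitting boundaries.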