DepthLab: From Partial to Complete

Zhiheng Liu, Ka Leong Cheng, Qiuyu Wang, Shuzhe Wang, Hao Ouyang, Bin Tan, Kai Zhu, Yujun Shen, Qifeng Chen, Ping Luo

2024-12-25

Summary

This paper talks about DepthLab, a new model designed to fill in missing depth information in images, which is important for creating accurate 3D representations from partial data.

What's the problem?

In many applications, depth data can be incomplete due to various reasons like poor data collection or changes in perspective. This missing information can make it hard for systems to accurately understand and recreate 3D scenes, leading to errors in applications like virtual reality or autonomous driving.

What's the solution?

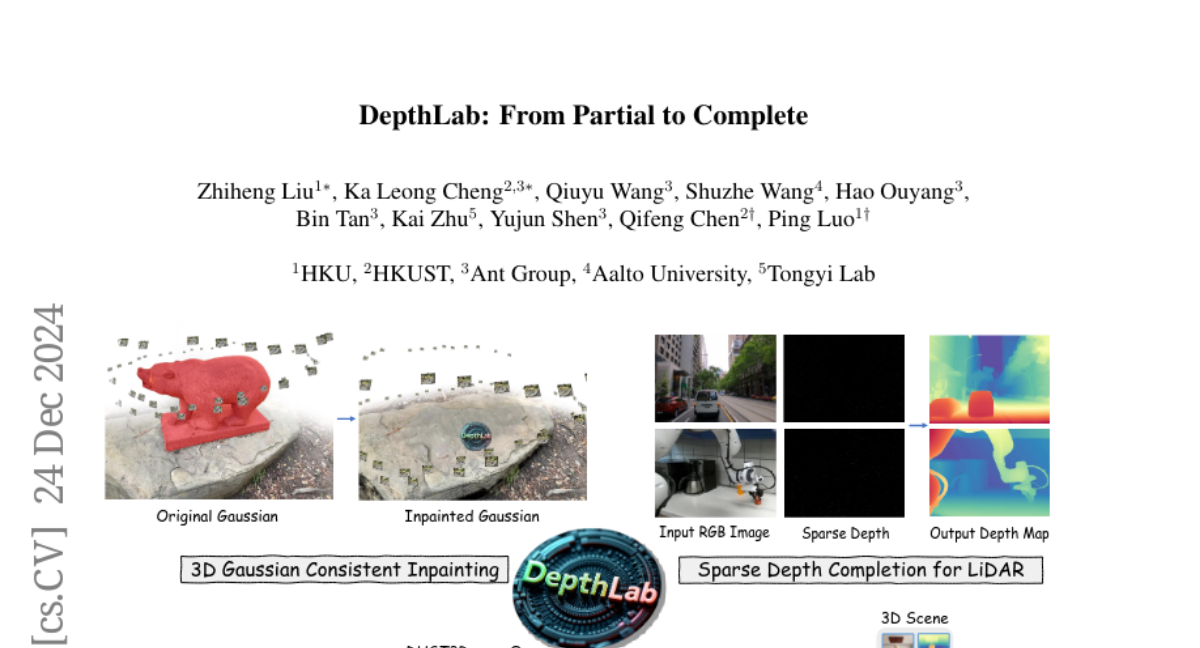

To solve this problem, the authors developed DepthLab, a model that uses advanced techniques to fill in missing depth values. It has two main strengths: it can effectively complete both large areas and small isolated points of missing depth, and it maintains consistent scale with the known depth data. DepthLab uses image diffusion priors to enhance its performance and has been tested in various tasks such as 3D scene reconstruction and generating scenes from text descriptions. The results show that DepthLab outperforms existing methods in both accuracy and visual quality.

Why it matters?

This research is important because it improves how machines understand and recreate 3D environments from incomplete data. By providing a reliable way to fill in missing depth information, DepthLab can enhance technologies that rely on accurate 3D modeling, making it useful for fields like gaming, robotics, and augmented reality.

Abstract

Missing values remain a common challenge for depth data across its wide range of applications, stemming from various causes like incomplete data acquisition and perspective alteration. This work bridges this gap with DepthLab, a foundation depth inpainting model powered by image diffusion priors. Our model features two notable strengths: (1) it demonstrates resilience to depth-deficient regions, providing reliable completion for both continuous areas and isolated points, and (2) it faithfully preserves scale consistency with the conditioned known depth when filling in missing values. Drawing on these advantages, our approach proves its worth in various downstream tasks, including 3D scene inpainting, text-to-3D scene generation, sparse-view reconstruction with DUST3R, and LiDAR depth completion, exceeding current solutions in both numerical performance and visual quality. Our project page with source code is available at https://johanan528.github.io/depthlab_web/.