Describe Anything: Detailed Localized Image and Video Captioning

Long Lian, Yifan Ding, Yunhao Ge, Sifei Liu, Hanzi Mao, Boyi Li, Marco Pavone, Ming-Yu Liu, Trevor Darrell, Adam Yala, Yin Cui

2025-04-23

Summary

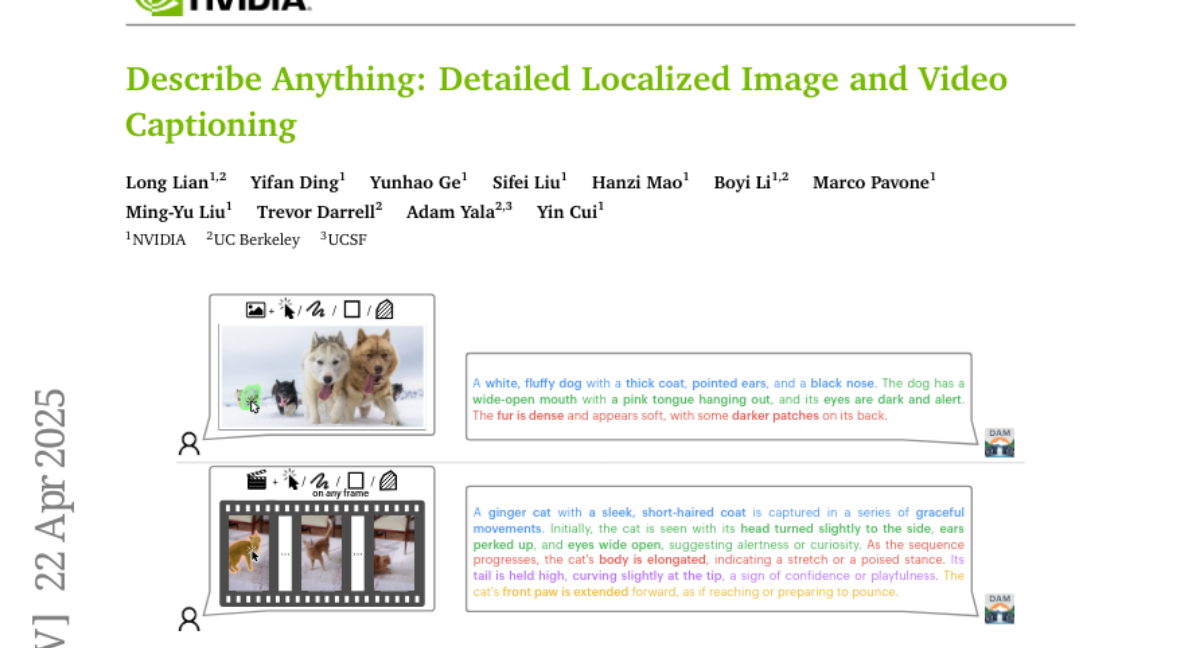

This paper talks about the Describe Anything Model, or DAM, which is a new AI system that can look at pictures and videos and give really detailed descriptions about specific parts of them.

What's the problem?

The problem is that most captioning models can only give general descriptions of what's in an image or video, and they struggle to talk about smaller or specific details, especially when there are lots of things happening at once.

What's the solution?

The researchers created DAM, which uses a special way of focusing on certain areas in images and videos, combined with a smart vision system, to generate captions that are much more detailed and precise. They also used a semi-supervised data process to help the model learn from lots of examples, even when not all of them are perfectly labeled.

Why it matters?

This matters because it helps people and computers understand images and videos on a much deeper level, which is useful for things like helping visually impaired people, organizing digital media, and improving search and security systems.

Abstract

The Describe Anything Model (DAM) leverages a focal prompt and localized vision backbone to achieve detailed localized captioning, outperforming existing models on various benchmarks through a semi-supervised data pipeline.