DiffPortrait360: Consistent Portrait Diffusion for 360 View Synthesis

Yuming Gu, Phong Tran, Yujian Zheng, Hongyi Xu, Heyuan Li, Adilbek Karmanov, Hao Li

2025-03-26

Summary

This paper presents an AI method that generates realistic, consistent 360-degree views of a person's head from a single photo. It also works for stylized or cartoon-like portraits and for subjects wearing accessories.

What's the problem?

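Existing methods that generate full 3D heads only work for realistic human heads and cannot handle stylized or non-human portraits. Other diffusion-based methods handle many styles, but they only produce frontal views and struggle to keep views consistent, so their output cannot be turned into a true 3D model.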

What's the solution?

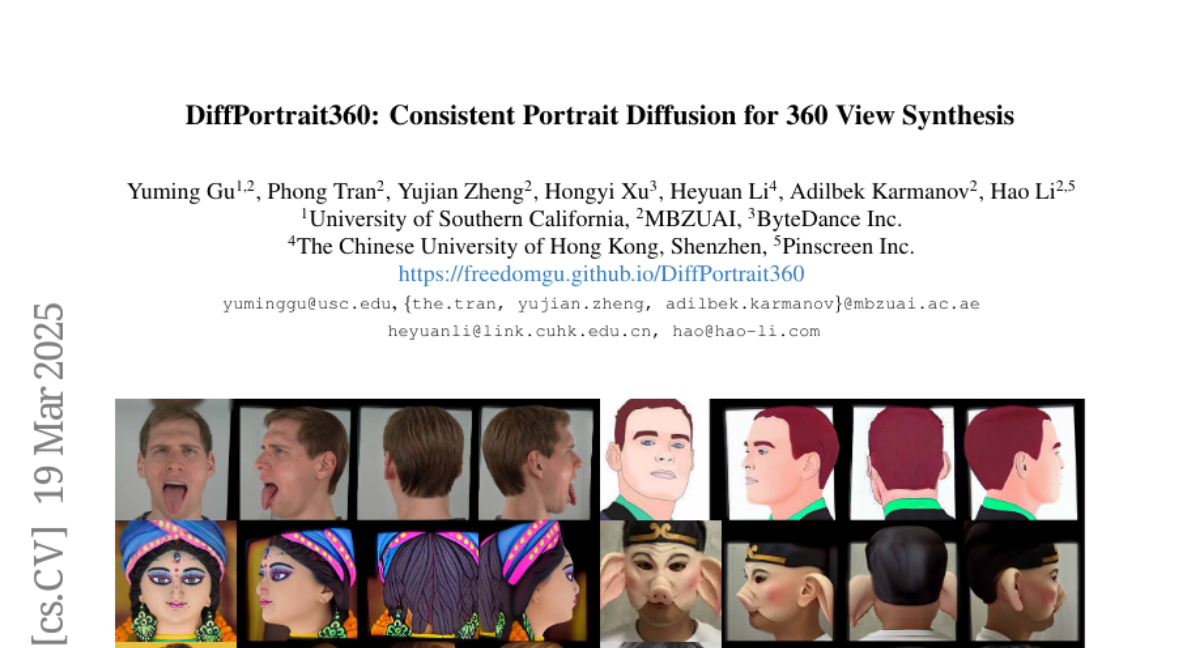

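The researchers built a new method on the DiffPortrait3D framework that combines the strengths of both approaches. It generates consistent 360-degree head views for realistic, stylized, and anthropomorphic subjects, including accessories like glasses or hats. A custom ControlNet generates back-of-head detail, and a dual appearance module keeps the front and back views consistent with each other.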

Why does it matter?

This work could make it easier to create personalized avatars and 3D head models from a single photo. These are useful for immersive telepresence, such as video conferencing and virtual reality, and for personalized content creation.

Abstract

Generating high-quality 360-degree views of human heads from single-view images is essential for enabling accessible immersive telepresence applications and scalable personalized content creation. While cutting-edge methods for full head generation are limited to modeling realistic human heads, the latest diffusion-based approaches for style-omniscient head synthesis can produce only frontal views and struggle with view consistency, preventing their conversion into true 3D models for rendering from arbitrary angles. We introduce a novel approach that generates fully consistent 360-degree head views, accommodating human, stylized, and anthropomorphic forms, including accessories like glasses and hats. Our method builds on the DiffPortrait3D framework, incorporating a custom ControlNet for back-of-head detail generation and a dual appearance module to ensure global front-back consistency. By training on continuous view sequences and integrating a back reference image, our approach achieves robust, locally continuous view synthesis. Our model can be used to produce high-quality neural radiance fields (NeRFs) for real-time, free-viewpoint rendering, outperforming state-of-the-art methods in object synthesis and 360-degree head generation for very challenging input portraits.
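"360-degree views" and "free-viewpoint rendering" both assume a set of camera poses arranged around the subject. The following sketch is not taken from the paper. It only illustrates one common way to sample evenly spaced camera-to-world matrices on a circular orbit around a head centered at the origin. Several details are illustrative assumptions: the function name `orbit_camera_poses`, the y-up world axis, the OpenGL-style convention (each camera looks along its local -z axis), and the default radius.

```python
import numpy as np


def orbit_camera_poses(num_views: int, radius: float = 2.7,
                       elevation_deg: float = 0.0) -> np.ndarray:
    """Hypothetical helper: evenly spaced camera-to-world poses on a circle
    around a head centered at the origin.

    Assumptions (not from the paper): y is the world up axis, and cameras
    follow the OpenGL convention of looking along their local -z axis.
    Returns an array of shape (num_views, 4, 4).
    """
    if num_views < 1:
        raise ValueError("num_views must be at least 1")
    if abs(elevation_deg) >= 90.0:
        raise ValueError("elevation must be strictly between -90 and 90 degrees")

    elev = np.deg2rad(elevation_deg)
    world_up = np.array([0.0, 1.0, 0.0])
    poses = []
    for i in range(num_views):
        azim = 2.0 * np.pi * i / num_views  # full 360-degree sweep, no duplicate endpoint
        # Camera center on a sphere of the given radius.
        eye = radius * np.array([np.cos(elev) * np.sin(azim),
                                 np.sin(elev),
                                 np.cos(elev) * np.cos(azim)])
        forward = -eye / np.linalg.norm(eye)  # unit vector from camera toward origin
        right = np.cross(forward, world_up)
        right /= np.linalg.norm(right)
        up = np.cross(right, forward)  # re-orthogonalized up vector

        pose = np.eye(4)
        pose[:3, 0] = right
        pose[:3, 1] = up
        pose[:3, 2] = -forward  # camera looks along its -z axis
        pose[:3, 3] = eye
        poses.append(pose)
    return np.stack(poses)


poses = orbit_camera_poses(36)  # one view every 10 degrees of azimuth
print(poses.shape)  # (36, 4, 4)
```

Neighboring poses in this orbit differ by only a small rotation, which loosely mirrors the idea of a continuous view sequence. Elevations of exactly ±90 degrees are rejected because the look-at construction degenerates when the viewing direction is parallel to the up vector.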