Diffusion vs. Autoregressive Language Models: A Text Embedding Perspective

Siyue Zhang, Yilun Zhao, Liyuan Geng, Arman Cohan, Anh Tuan Luu, Chen Zhao

2025-05-22

Summary

This paper compares two kinds of language models, diffusion models and autoregressive models, to see which one produces better text embeddings for finding relevant information in large collections of text.

What's the problem?

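Searching large collections of text depends on representing what each passage means as an embedding (a vector of numbers), so that passages relevant to a query can be found. Many recent embedding models are built on autoregressive large language models, which read text in only one direction, and not every model produces equally useful representations. Weaker representations make it harder to retrieve the right passages accurately.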
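In embedding-based retrieval, each text is mapped to a vector and documents are ranked by how similar their vectors are to the query vector. The minimal sketch below shows only that ranking step, assuming cosine similarity as the scoring function. The function `rank_by_cosine` and the toy vectors are hypothetical illustrations, not taken from the paper or produced by any real model.

```python
import math

def cosine(u, v):
    # Cosine similarity: dot product divided by the product of the vector norms
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def rank_by_cosine(query_vec, doc_vecs):
    # Hypothetical helper: return document indices from most to least similar
    scores = [cosine(query_vec, d) for d in doc_vecs]
    return sorted(range(len(doc_vecs)), key=lambda i: scores[i], reverse=True)

# Hypothetical toy embeddings (made up for illustration)
query = [1.0, 0.0, 1.0]
docs = [
    [0.0, 1.0, 0.0],  # orthogonal to the query
    [1.0, 0.1, 0.9],  # nearly parallel to the query
    [0.5, 0.5, 0.5],  # partly aligned with the query
]

print(rank_by_cosine(query, docs))  # prints [1, 2, 0]
```

The better an embedding model captures meaning, the more often the truly relevant document lands at the top of this ranking.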

What's the solution?

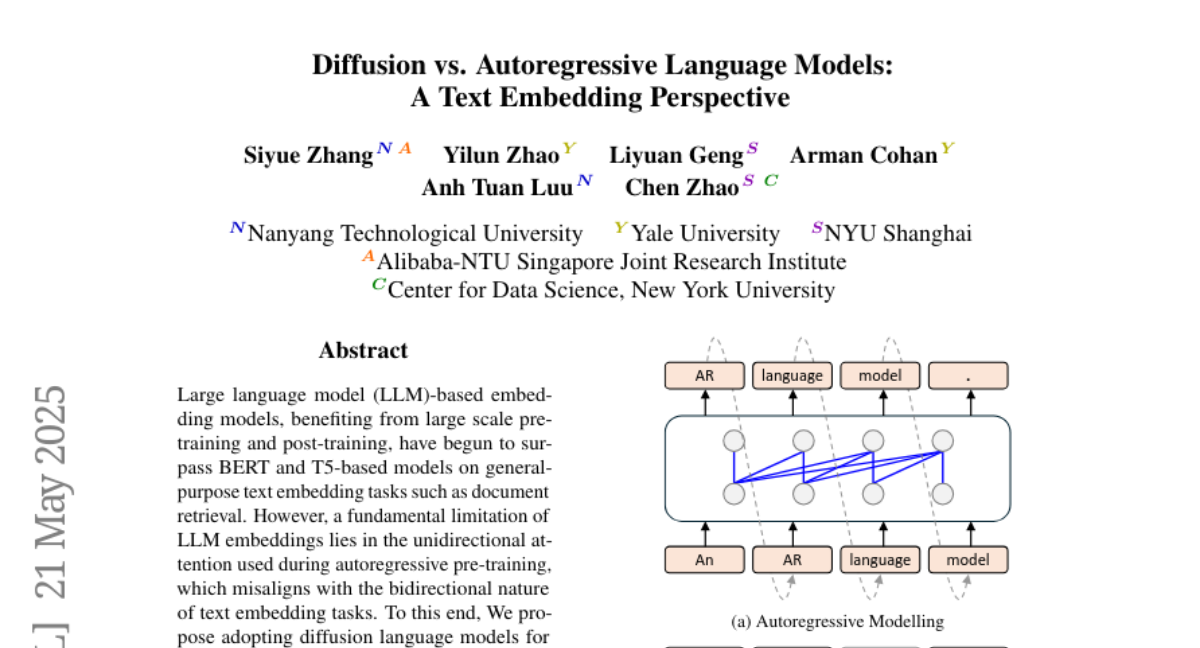

The researchers found that diffusion language models, which attend to text in both directions at once, produce more useful text embeddings than traditional autoregressive models, which read text in only one direction (left to right). This makes diffusion-based embeddings more effective for tasks such as text retrieval.
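The difference between one-directional and bidirectional reading comes down to the attention mask: a causal (autoregressive) mask lets token i see only tokens 0..i, while a bidirectional mask lets every token see the whole text. The toy sketch below illustrates this under loudly simplified assumptions: "attention" here is just a uniform average over visible tokens (no learned weights), the sentence embedding is taken by mean pooling (one common choice, assumed here rather than confirmed from the paper), and all function names and vectors are hypothetical.

```python
def attention_mask(n, bidirectional):
    # mask[i][j] is True if token i may attend to token j.
    # Causal (autoregressive): only j <= i. Bidirectional: every j.
    return [[bidirectional or j <= i for j in range(n)] for i in range(n)]

def contextualize(token_vecs, mask):
    # Toy stand-in for attention: each token becomes the uniform average
    # of the token vectors it is allowed to see.
    dim = len(token_vecs[0])
    out = []
    for row in mask:
        visible = [token_vecs[j] for j, ok in enumerate(row) if ok]
        out.append([sum(v[k] for v in visible) / len(visible) for k in range(dim)])
    return out

def mean_pool(vecs):
    # Assumed pooling: text embedding = mean of the contextualized token vectors
    dim = len(vecs[0])
    return [sum(v[k] for v in vecs) / len(vecs) for k in range(dim)]

# Hypothetical 2-dimensional token vectors for a three-token text
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

for name, bidir in [("causal", False), ("bidirectional", True)]:
    ctx = contextualize(tokens, attention_mask(len(tokens), bidir))
    print(name, "first token:", ctx[0], "embedding:", mean_pool(ctx))
```

Under the causal mask the first token's representation ignores everything after it, so early tokens carry no information about the rest of the text; under the bidirectional mask every token reflects the full context, which is the property the summary credits for better embeddings.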

Why does it matter?

This matters because better text embeddings can lead to search engines and information systems that find relevant material more accurately, which helps people with everything from homework to research.

Abstract

Embeddings from diffusion language models outperform those from autoregressive large language models on text retrieval tasks, an advantage the authors attribute to the diffusion models' bidirectional architecture.