DiffusionBlocks: Blockwise Training for Generative Models via Score-Based Diffusion

Makoto Shing, Takuya Akiba

2025-06-17

Summary

This paper introduces DiffusionBlocks, a way to train large AI models by splitting the neural network into smaller parts called blocks. Each block is trained separately to remove noise over one part of a step-by-step denoising process called diffusion. The method uses less memory during training while still performing competitively on tasks such as image generation and language tasks.

What's the problem?

Training a large neural network all at once uses a lot of memory, which puts it out of reach for many people and machines. Traditional methods train all parts of the network together, so the memory needed grows with the whole model rather than with any single part.

What's the solution?

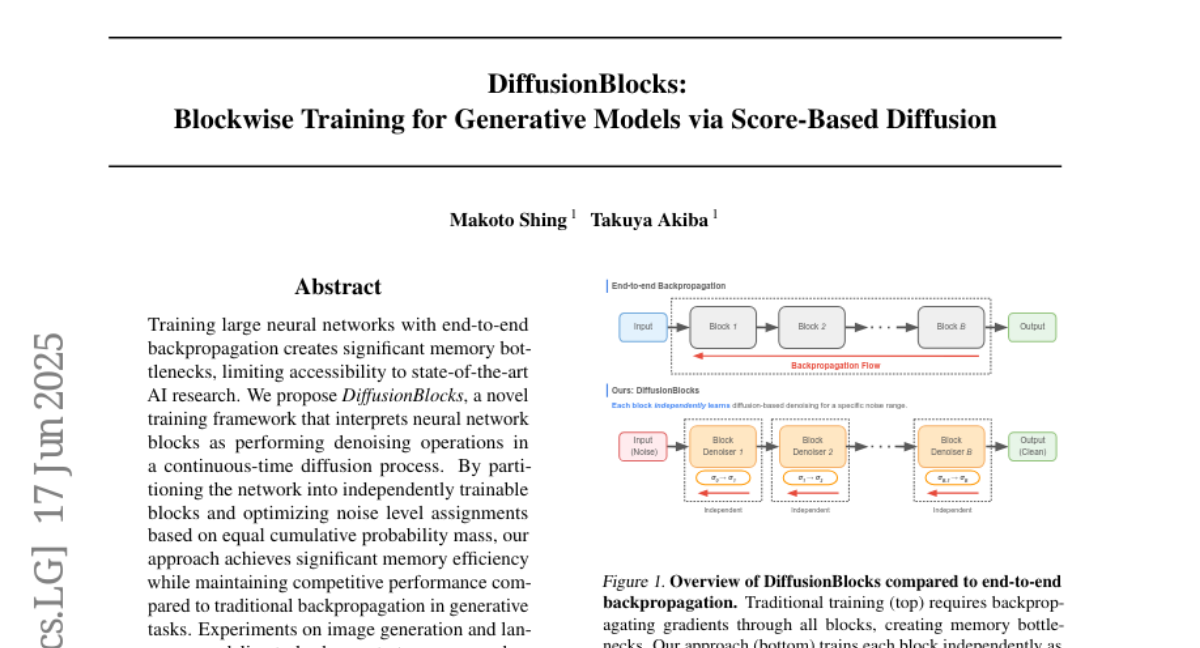

DiffusionBlocks splits the network into independent blocks, each responsible for removing noise within a certain range of noise levels during the generation process. Each block is trained on its own and does not need information from the other blocks at the same time, so only one block has to be held in memory during training. The paper also assigns noise levels to blocks in a way that gives each block a balanced learning task.
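The idea of giving each block its own noise range and training it in isolation can be illustrated with a small toy example. This is only a sketch under stated assumptions, not the paper's implementation: the log-normal noise distribution and its parameters, the scalar "denoiser" `x_hat = w * y`, the least-squares fit, and all function names (`equal_mass_cuts`, `sample_sigma`, `train_block`, `block_for_sigma`) are hypothetical choices made for illustration. Block 0 is taken to be the least noisy range, which may not match the ordering used in the paper.

```python
import bisect
import math
import random
from statistics import NormalDist

# Assumption of this sketch: log(sigma) ~ Normal(-1.2, 1.2); values are illustrative.
LOG_SIGMA = NormalDist(mu=-1.2, sigma=1.2)


def equal_mass_cuts(num_blocks):
    """Interior noise-level boundaries giving each block equal probability mass."""
    return [math.exp(LOG_SIGMA.inv_cdf(i / num_blocks)) for i in range(1, num_blocks)]


def sample_sigma(block, num_blocks, rng):
    """Draw a noise level from the range owned by `block` (0 = least noisy)."""
    u = (block + rng.random()) / num_blocks
    u = min(max(u, 1e-6), 1.0 - 1e-6)  # inv_cdf requires 0 < u < 1
    return math.exp(LOG_SIGMA.inv_cdf(u))


def train_block(block, num_blocks, num_samples=5000, seed=0):
    """Fit one toy denoiser x_hat = w * y using only this block's noise range.

    No other block's parameters, activations, or gradients are touched.
    """
    rng = random.Random(seed + block)
    sum_xy = 0.0
    sum_yy = 0.0
    for _ in range(num_samples):
        x = rng.gauss(0.0, 1.0)              # clean toy data point
        sigma = sample_sigma(block, num_blocks, rng)
        y = x + sigma * rng.gauss(0.0, 1.0)  # noised input seen by the block
        sum_xy += x * y
        sum_yy += y * y
    return sum_xy / sum_yy                   # closed-form least-squares weight


def block_for_sigma(sigma, cuts):
    """Index of the block responsible for noise level `sigma`."""
    return bisect.bisect_right(cuts, sigma)


num_blocks = 4
cuts = equal_mass_cuts(num_blocks)
# Blocks are fitted one at a time; each call is independent of the others.
weights = [train_block(b, num_blocks) for b in range(num_blocks)]
```

In this toy setting, the block that owns the lowest noise range should learn a weight close to 1 (keep most of the input), while the block that owns the highest noise range should learn a much smaller weight. At generation time, `block_for_sigma` would pick which block handles the current noise level.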

Why does it matter?

Reducing the memory needed for training makes large AI models more accessible and less expensive to build. Researchers and developers with limited resources can work on powerful generative models for images, language, and more, and these models could potentially be trained and used faster.

Abstract

A novel training framework called DiffusionBlocks optimizes neural network blocks as denoising operations in a diffusion process, achieving memory efficiency and competitive performance in generative tasks.