Disentangle Identity, Cooperate Emotion: Correlation-Aware Emotional Talking Portrait Generation

Weipeng Tan, Chuming Lin, Chengming Xu, FeiFan Xu, Xiaobin Hu, Xiaozhong Ji, Junwei Zhu, Chengjie Wang, Yanwei Fu

2025-04-30

Summary

This paper introduces DICE-Talk, a system that makes animated talking portraits look more realistic by showing emotions that match what is being said while preserving the person's unique appearance.

What's the problem?

Most animated talking heads either lose the person's identity when trying to show emotions, or the emotions look fake and don't match the speech, making the animation less believable.

What's the solution?

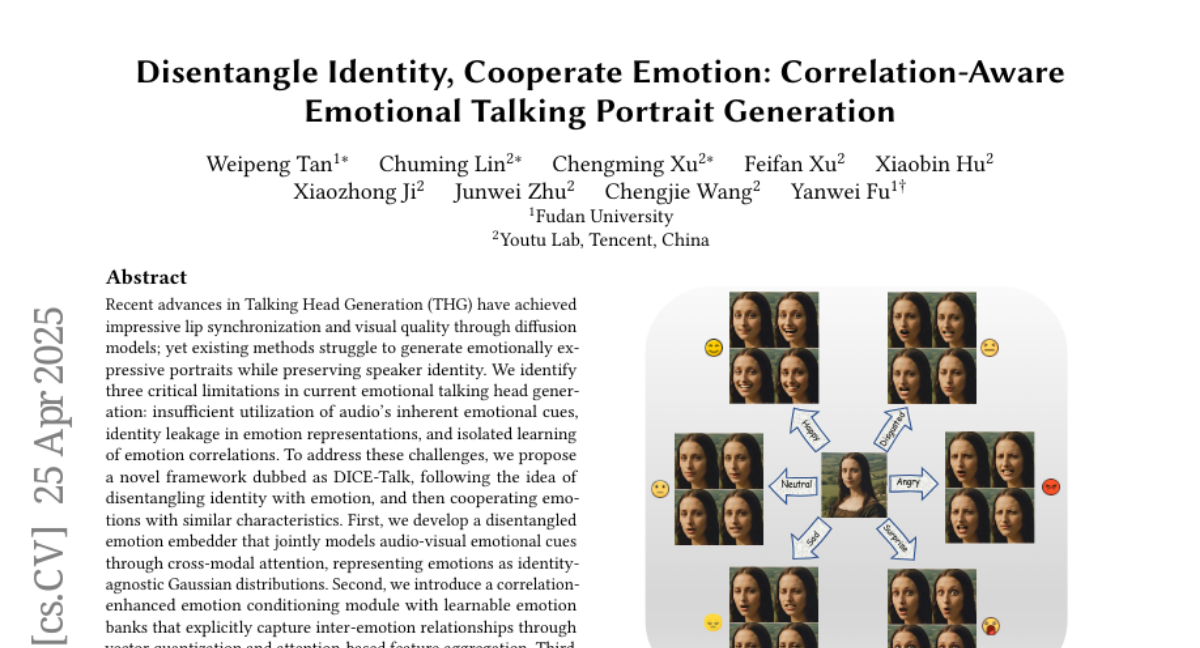

The researchers designed DICE-Talk to separate a person's identity from their emotions, so the system can add the right emotional expressions without changing how the person looks. It also models how different emotions relate to one another and uses diffusion models to keep the generated emotion consistent with the speech.

Why does it matter?

This matters because it can make digital avatars and virtual characters more lifelike and expressive, which is useful for applications such as video calls, movies, and online education.

Abstract

A novel framework, DICE-Talk, improves emotional expression in talking head generation by disentangling identity and emotion, capturing emotion correlations, and enforcing affective consistency through diffusion models.