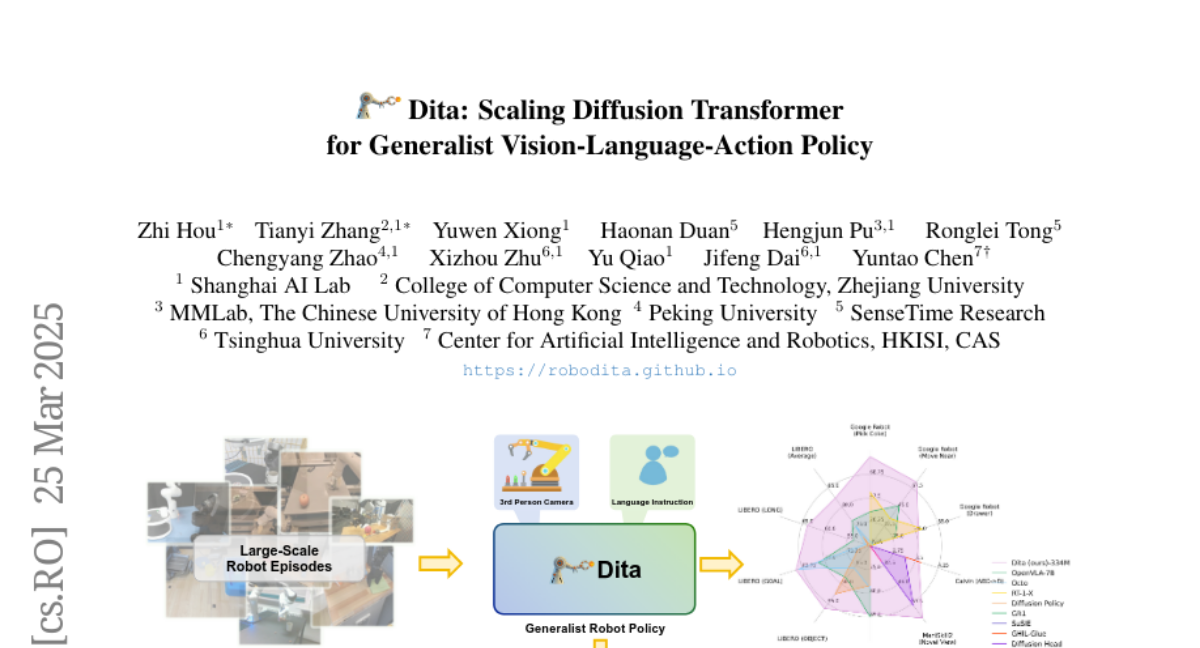

Dita: Scaling Diffusion Transformer for Generalist Vision-Language-Action Policy

Zhi Hou, Tianyi Zhang, Yuwen Xiong, Haonan Duan, Hengjun Pu, Ronglei Tong, Chengyang Zhao, Xizhou Zhu, Yu Qiao, Jifeng Dai, Yuntao Chen

2025-03-27

Summary

This paper introduces Dita, a generalist AI model that controls robots across different tasks by combining what the robot sees with the language instructions it is given.

What's the problem?

Existing AI models for controlling robots are often limited to specific tasks and struggle to adapt to new situations or different types of robots.

What's the solution?

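Dita uses a Transformer to denoise continuous action sequences directly through a diffusion process, conditioning on the raw visual tokens from past observations rather than on a single fused embedding. This lets it learn from a variety of robot datasets with different cameras, scenes, tasks, and action spaces, making it more adaptable and capable of performing complex, long-horizon tasks.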

Why does it matter?

This work matters because it can lead to more general-purpose robots that can be easily trained to perform a wide range of tasks in different environments.

Abstract

While recent vision-language-action models trained on diverse robot datasets exhibit promising generalization capabilities with limited in-domain data, their reliance on compact action heads to predict discretized or continuous actions constrains adaptability to heterogeneous action spaces. We present Dita, a scalable framework that leverages Transformer architectures to directly denoise continuous action sequences through a unified multimodal diffusion process. Departing from prior methods that condition denoising on fused embeddings via shallow networks, Dita employs in-context conditioning -- enabling fine-grained alignment between denoised actions and raw visual tokens from historical observations. This design explicitly models action deltas and environmental nuances. By scaling the diffusion action denoiser alongside the Transformer's scalability, Dita effectively integrates cross-embodiment datasets across diverse camera perspectives, observation scenes, tasks, and action spaces. Such synergy enhances robustness against various variances and facilitates the successful execution of long-horizon tasks. Evaluations across extensive benchmarks demonstrate state-of-the-art or comparative performance in simulation. Notably, Dita achieves robust real-world adaptation to environmental variances and complex long-horizon tasks through 10-shot finetuning, using only third-person camera inputs. The architecture establishes a versatile, lightweight and open-source baseline for generalist robot policy learning. Project Page: https://robodita.github.io.
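To make the idea of in-context conditioning more concrete, here is a minimal NumPy sketch, not the authors' implementation. It assumes that language tokens, visual tokens, a diffusion-timestep token, and noised action tokens are concatenated into one sequence, and that the noise prediction is read back from the action positions. All names, sizes, the single attention pass standing in for the Transformer, and the DDPM-style noising formula are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
D = 32          # token width
HORIZON = 8     # number of future actions denoised jointly
ACTION_DIM = 7  # e.g. a 6-DoF pose delta plus gripper


def timestep_embedding(t, dim):
    """Sinusoidal embedding of diffusion step t, shape (dim,)."""
    half = dim // 2
    freqs = np.exp(-np.log(10000.0) * np.arange(half) / half)
    return np.concatenate([np.sin(t * freqs), np.cos(t * freqs)])


def build_context(lang_tokens, image_tokens, noisy_actions, t, w_in):
    """Concatenate every modality into ONE token sequence.

    This is the assumed form of in-context conditioning: the noised
    actions sit in the same sequence as the raw visual tokens instead
    of being conditioned on a single fused embedding.
    """
    action_tokens = noisy_actions @ w_in           # (HORIZON, D)
    t_token = timestep_embedding(t, D)[None, :]    # (1, D)
    return np.concatenate(
        [lang_tokens, image_tokens, t_token, action_tokens], axis=0
    )


def toy_denoiser(seq, w_out):
    """Stand-in for the Transformer: one unmasked self-attention pass.

    Returns a noise prediction for the last HORIZON (action) positions.
    """
    scores = seq @ seq.T / np.sqrt(seq.shape[1])
    scores -= scores.max(axis=1, keepdims=True)    # numerical stability
    attn = np.exp(scores)
    attn /= attn.sum(axis=1, keepdims=True)
    mixed = attn @ seq
    return mixed[-HORIZON:] @ w_out                # (HORIZON, ACTION_DIM)


# Random placeholders for encoder outputs and projection weights.
lang_tokens = rng.normal(size=(4, D))
image_tokens = rng.normal(size=(2 * 16, D))  # 2 history frames x 16 patches
w_in = rng.normal(size=(ACTION_DIM, D)) * 0.1
w_out = rng.normal(size=(D, ACTION_DIM)) * 0.1

# Forward noising of a clean action chunk (DDPM-style, assumed).
clean_actions = rng.normal(size=(HORIZON, ACTION_DIM))
alpha_bar = 0.5
noise = rng.normal(size=clean_actions.shape)
noisy_actions = (
    np.sqrt(alpha_bar) * clean_actions + np.sqrt(1 - alpha_bar) * noise
)

seq = build_context(lang_tokens, image_tokens, noisy_actions, t=10, w_in=w_in)
pred_noise = toy_denoiser(seq, w_out)
print(seq.shape, pred_noise.shape)
```

In this sketch every action token can attend to every visual token, which is one plausible reading of the "fine-grained alignment" the abstract describes. A real model would stack many Transformer layers and train the noise prediction against the sampled noise.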