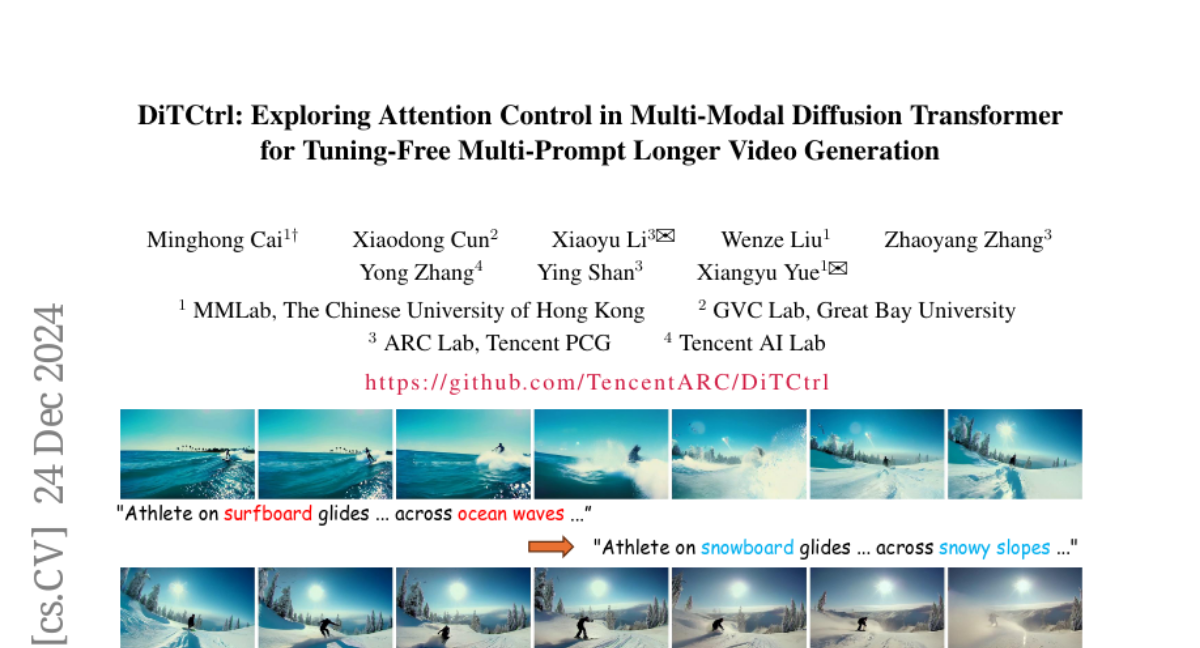

DiTCtrl: Exploring Attention Control in Multi-Modal Diffusion Transformer for Tuning-Free Multi-Prompt Longer Video Generation

Minghong Cai, Xiaodong Cun, Xiaoyu Li, Wenze Liu, Zhaoyang Zhang, Yong Zhang, Ying Shan, Xiangyu Yue

2024-12-25

Summary

This paper introduces DiTCtrl, a method for generating a single video from several prompts given in sequence, producing coherent and dynamic scenes without any extra training.

What's the problem?

Most current video generation models focus on single prompts and struggle to create smooth scenes when given multiple prompts in sequence. This limitation can lead to unnatural transitions and poor continuity in the generated videos, making them less realistic and harder to follow.

What's the solution?

To solve this issue, the authors developed DiTCtrl, which treats multi-prompt video generation as editing a video over time. They analyzed how the attention mechanism in MM-DiT (Multi-Modal Diffusion Transformer) models works and found ways to use it to guide the generation process. By letting the model share attention information across the different prompts, DiTCtrl generates videos with smooth transitions and consistent object motion without additional training. They also created a new benchmark, MPVBench, to evaluate multi-prompt video generation.
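The idea of sharing attention information across prompts can be illustrated with a small sketch. This is not the authors' implementation: it assumes that "sharing" means the queries of the current prompt's segment also attend to keys and values taken from the previous prompt's segment, optionally restricted by a mask. The function names (`attention`, `shared_kv_attention`) and the choice to concatenate the two key/value sets are hypothetical, chosen only for illustration.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q, k, v):
    """Scaled dot-product attention over lists of token vectors."""
    scale = 1.0 / math.sqrt(len(q[0]))
    out = []
    for qi in q:
        scores = [scale * sum(a * b for a, b in zip(qi, kj)) for kj in k]
        weights = softmax(scores)
        out.append([sum(w * vj[d] for w, vj in zip(weights, v))
                    for d in range(len(v[0]))])
    return out


def shared_kv_attention(q_cur, k_cur, v_cur, k_prev, v_prev, mask=None):
    """Hypothetical sharing step: current-prompt queries attend to the
    current keys/values plus the previous prompt's keys/values.

    mask holds one boolean per previous-prompt token; False drops that
    token, which is how a mask could limit sharing to selected regions.
    """
    if mask is None:
        mask = [True] * len(k_prev)
    k_keep = [k for k, keep in zip(k_prev, mask) if keep]
    v_keep = [v for v, keep in zip(v_prev, mask) if keep]
    return attention(q_cur, k_cur + k_keep, v_cur + v_keep)


# Toy example: one current token whose query matches a previous-prompt key.
q_cur, k_cur, v_cur = [[1.0, 0.0]], [[0.0, 1.0]], [[0.0, 0.0]]
k_prev, v_prev = [[10.0, 0.0]], [[1.0, 1.0]]
shared = shared_kv_attention(q_cur, k_cur, v_cur, k_prev, v_prev)
masked = shared_kv_attention(q_cur, k_cur, v_cur, k_prev, v_prev, mask=[False])
```

In the toy example, `shared` is pulled almost entirely toward the previous prompt's value because its key matches the query, while `masked` falls back to ordinary attention over the current segment only. A real model would apply this per attention head and per layer on tensors rather than Python lists.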

Why does it matter?

This research matters because it extends video generation from a single prompt to a sequence of prompts without retraining the model. By enabling models to follow multiple prompts with smooth transitions, DiTCtrl can benefit applications such as filmmaking, gaming, and virtual reality, where realistic and engaging video is crucial.

Abstract

Sora-like video generation models have achieved remarkable progress with the Multi-Modal Diffusion Transformer (MM-DiT) architecture. However, current video generation models predominantly focus on single-prompt generation and struggle to generate coherent scenes from multiple sequential prompts, which better reflect real-world dynamic scenarios. While some pioneering works have explored multi-prompt video generation, they face significant challenges, including strict training data requirements, weak prompt following, and unnatural transitions. To address these problems, we propose DiTCtrl, the first training-free multi-prompt video generation method under MM-DiT architectures. Our key idea is to treat multi-prompt video generation as temporal video editing with smooth transitions. To achieve this goal, we first analyze MM-DiT's attention mechanism, finding that the 3D full attention behaves similarly to the cross/self-attention blocks in UNet-like diffusion models, which enables mask-guided, precise semantic control across different prompts with attention sharing for multi-prompt video generation. Based on our careful design, the videos generated by DiTCtrl achieve smooth transitions and consistent object motion given multiple sequential prompts, without additional training. We also present MPVBench, a new benchmark specially designed to evaluate multi-prompt video generation. Extensive experiments demonstrate that our method achieves state-of-the-art performance without additional training.
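As a reading aid for the attention analysis, the comparison with cross/self-attention can be written out as follows. This is a sketch under the assumption that MM-DiT concatenates text tokens and video tokens and applies one joint attention over them; the subscripts $t$ (text) and $v$ (video) and the dimension $d$ are notation introduced here, not taken from the abstract.

$$
Q = \begin{bmatrix} Q_t \\ Q_v \end{bmatrix}, \quad
K = \begin{bmatrix} K_t \\ K_v \end{bmatrix}, \quad
A = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d}}\right), \quad
Q K^{\top} =
\begin{bmatrix}
Q_t K_t^{\top} & Q_t K_v^{\top} \\
Q_v K_t^{\top} & Q_v K_v^{\top}
\end{bmatrix}
$$

Under this reading, the off-diagonal blocks $Q_t K_v^{\top}$ and $Q_v K_t^{\top}$ relate text to video, much like cross-attention in a UNet, while the $Q_v K_v^{\top}$ block relates video tokens to each other across space and time, much like self-attention.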