Do PhD-level LLMs Truly Grasp Elementary Addition? Probing Rule Learning vs. Memorization in Large Language Models

Yang Yan, Yu Lu, Renjun Xu, Zhenzhong Lan

2025-04-14

Summary

This paper asks whether advanced large language models, including those described as performing at a "PhD level," actually understand basic arithmetic such as addition or are just memorizing answers. The researchers tested whether these models apply the rules of addition, or only do well on problems that resemble what they have already seen.

What's the problem?

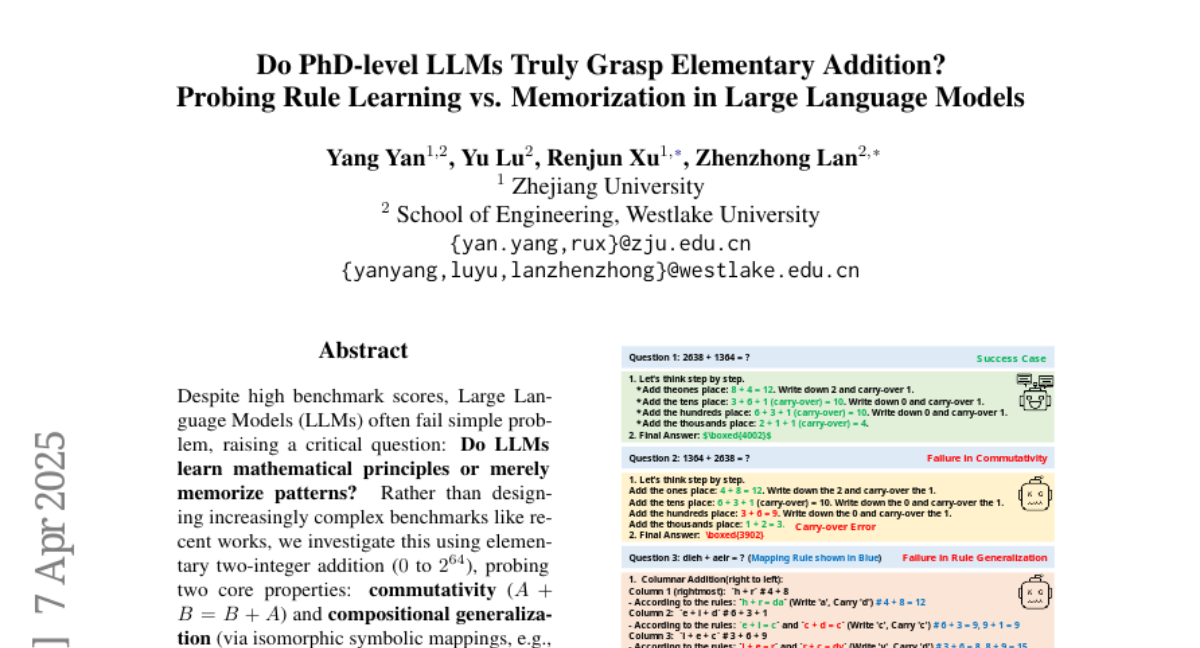

Large language models give correct answers to many addition problems, but it is not clear whether they have learned the underlying rules or are repeating patterns memorized from their training data. The difference shows up when a model faces an unfamiliar form of the same problem or has to respect a property such as commutativity, which says that the order of the numbers in an addition does not change the result (a + b = b + a).

What's the solution?

The researchers designed tests to check whether the models could handle addition written in symbols instead of ordinary digits, and whether they respected rules like commutativity, rather than just recalling answers. They found that the models were accurate on standard numerical addition but struggled with the symbolic versions and often gave inconsistent answers when the order of the numbers was switched. This suggests that the models rely more on memorization than on an understanding of how addition works.
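The two kinds of probe described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the paper's evaluation code: the prompt format (`"a+b="`), the helper names (`commutativity_rate`, `make_symbol_map`, `symbolic_accuracy`), and the toy `lookup_model` stand-in are all assumptions made here for illustration. A real test would call an actual model and would also need to explain the symbol mapping to it in the prompt.

```python
import random
import string
from typing import Callable, Dict, List, Tuple

# Hypothetical interface: a model is any function from prompt text to answer text.
Model = Callable[[str], str]
Pairs = List[Tuple[int, int]]


def commutativity_rate(model: Model, pairs: Pairs) -> float:
    """Fraction of pairs where the answers to a+b and b+a are identical.

    This measures consistency only; two identical wrong answers still count.
    """
    consistent = 0
    for a, b in pairs:
        if model(f"{a}+{b}=").strip() == model(f"{b}+{a}=").strip():
            consistent += 1
    return consistent / len(pairs)


def make_symbol_map(seed: int = 0) -> Dict[str, str]:
    """Build a one-to-one mapping from the digits 0-9 to ten distinct letters."""
    rng = random.Random(seed)
    symbols = rng.sample(string.ascii_uppercase, 10)
    return {str(digit): symbol for digit, symbol in enumerate(symbols)}


def encode(n: int, mapping: Dict[str, str]) -> str:
    """Rewrite a non-negative integer digit by digit using the symbol mapping."""
    return "".join(mapping[ch] for ch in str(n))


def symbolic_accuracy(model: Model, pairs: Pairs, mapping: Dict[str, str]) -> float:
    """Fraction of symbol-encoded problems answered with the correctly encoded sum."""
    correct = 0
    for a, b in pairs:
        prompt = f"{encode(a, mapping)}+{encode(b, mapping)}="
        if model(prompt).strip() == encode(a + b, mapping):
            correct += 1
    return correct / len(pairs)


def lookup_model(prompt: str) -> str:
    """Toy stand-in for pure memorization: it only knows two exact prompts."""
    memorized = {"12+34=": "46", "7+8=": "15"}
    return memorized.get(prompt, "?")


def rule_model(prompt: str) -> str:
    """Toy stand-in for rule following on digit prompts: it parses and adds."""
    left, right = prompt.rstrip("=").split("+")
    return str(int(left) + int(right))


if __name__ == "__main__":
    pairs = [(12, 34), (7, 8)]
    mapping = make_symbol_map()
    print("memorizer, commutativity:", commutativity_rate(lookup_model, pairs))
    print("memorizer, symbolic:", symbolic_accuracy(lookup_model, pairs, mapping))
    print("rule follower, commutativity:", commutativity_rate(rule_model, pairs))
```

The toy memorizer answers `12+34=` correctly but has no entry for `34+12=` or for any symbol-encoded prompt, so it scores zero on both probes, while the rule-following stand-in is consistent under reordering. That contrast is the pattern the probes are meant to expose; the stand-ins say nothing about how any particular real model behaves.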

Why does it matter?

This work matters because it shows that even the most advanced language models may not have learned basic mathematical rules, which is important if we want to trust them for tasks that involve real reasoning. Knowing these limits helps researchers improve AI and is a reminder to be careful about relying on AI for things that require true understanding.

Abstract

LLMs achieve high accuracy in numerical addition but fail to generalize to symbolic mappings and exhibit poor performance in commutativity, suggesting they rely on memorization rather than understanding mathematical principles.