DreamActor-H1: High-Fidelity Human-Product Demonstration Video Generation via Motion-designed Diffusion Transformers

Lizhen Wang, Zhurong Xia, Tianshu Hu, Pengrui Wang, Pengfei Wang, Zerong Zheng, Ming Zhou

2025-06-15

Summary

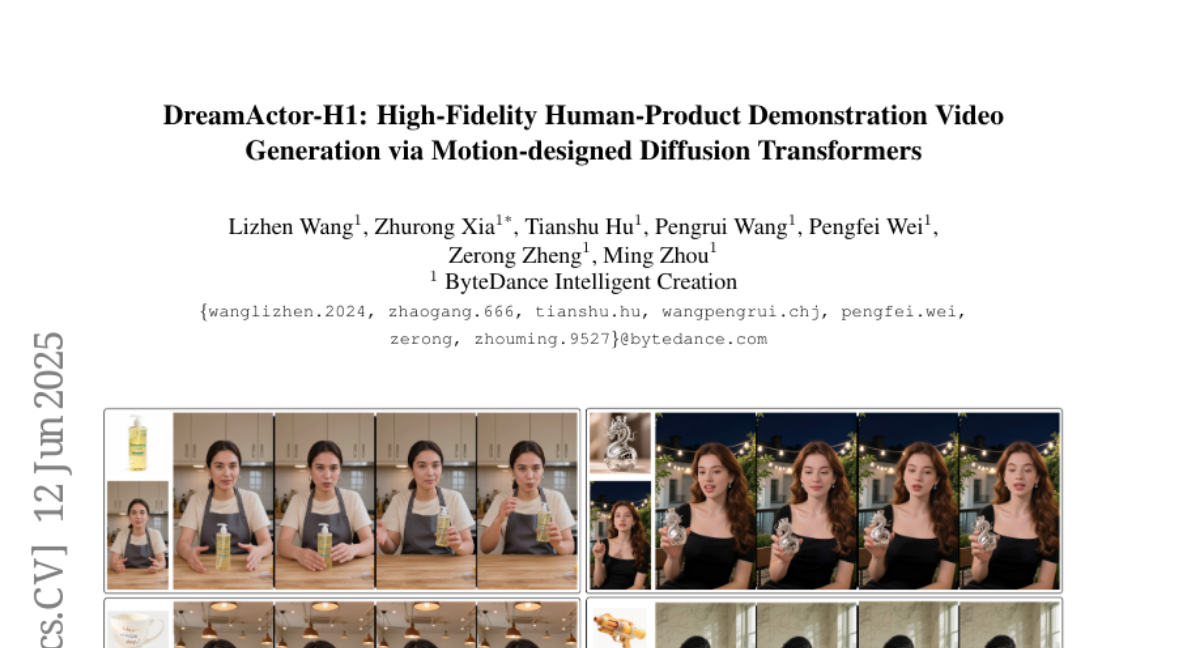

This paper talks about DreamActor-H1, a new AI system that uses a type of technology called diffusion transformers to create very realistic videos where a human shows how to use a product. The system makes sure that the person in the video looks consistent throughout and that the product stays in the right place relative to the person.

What's the problem?

The problem is that making videos where a human is demonstrating a product is very hard for AI because it has to keep the person's identity the same in every frame and also keep the correct spatial relationship between the person and the product, so the video looks natural and believable.

What's the solution?

The solution was to design a framework using diffusion transformers that focus on both the human and the product. It uses a method called masked cross-attention to pay attention to important parts selectively, and structured text encoding that helps the AI understand both the person and the product details to generate high-quality, consistent videos.

Why it matters?

This matters because creating high-quality demonstration videos automatically can save a lot of time and money in advertising, training, and online shopping. It helps businesses and creators showcase products in a realistic way without needing real actors or complicated filming.

Abstract

A Diffusion Transformer-based framework generates high-fidelity human-product demonstration videos by preserving identities and spatial relationships, using masked cross-attention and structured text encoding.