DreamActor-M1: Holistic, Expressive and Robust Human Image Animation with Hybrid Guidance

Yuxuan Luo, Zhengkun Rong, Lizhen Wang, Longhao Zhang, Tianshu Hu, Yongming Zhu

2025-04-03

Summary

This paper is about creating a better way to make AI-generated videos of people that look realistic and can be easily controlled.

What's the problem?

Current AI models struggle to create human animations that are expressive, adaptable to different scales, and consistent over long periods of time.

What's the solution?

The researchers developed DreamActor-M1, a system that uses a combination of different techniques to control facial expressions, body movements, and overall appearance, resulting in more realistic and controllable animations.

Why it matters?

This work matters because it can lead to more realistic and engaging AI-generated videos of people.

Abstract

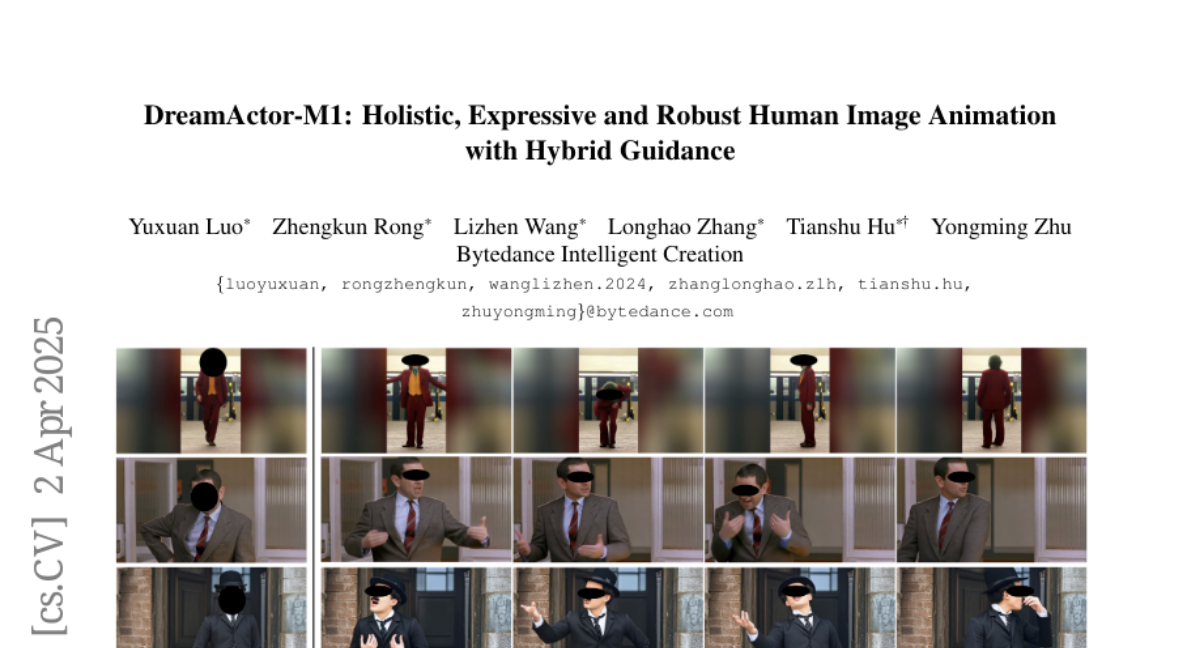

While recent image-based human animation methods achieve realistic body and facial motion synthesis, critical gaps remain in fine-grained holistic controllability, multi-scale adaptability, and long-term temporal coherence, which leads to their lower expressiveness and robustness. We propose a diffusion transformer (DiT) based framework, DreamActor-M1, with hybrid guidance to overcome these limitations. For motion guidance, our hybrid control signals that integrate implicit facial representations, 3D head spheres, and 3D body skeletons achieve robust control of facial expressions and body movements, while producing expressive and identity-preserving animations. For scale adaptation, to handle various body poses and image scales ranging from portraits to full-body views, we employ a progressive training strategy using data with varying resolutions and scales. For appearance guidance, we integrate motion patterns from sequential frames with complementary visual references, ensuring long-term temporal coherence for unseen regions during complex movements. Experiments demonstrate that our method outperforms the state-of-the-art works, delivering expressive results for portraits, upper-body, and full-body generation with robust long-term consistency. Project Page: https://grisoon.github.io/DreamActor-M1/.