DSO: Aligning 3D Generators with Simulation Feedback for Physical Soundness

Ruining Li, Chuanxia Zheng, Christian Rupprecht, Andrea Vedaldi

2025-04-01

Summary

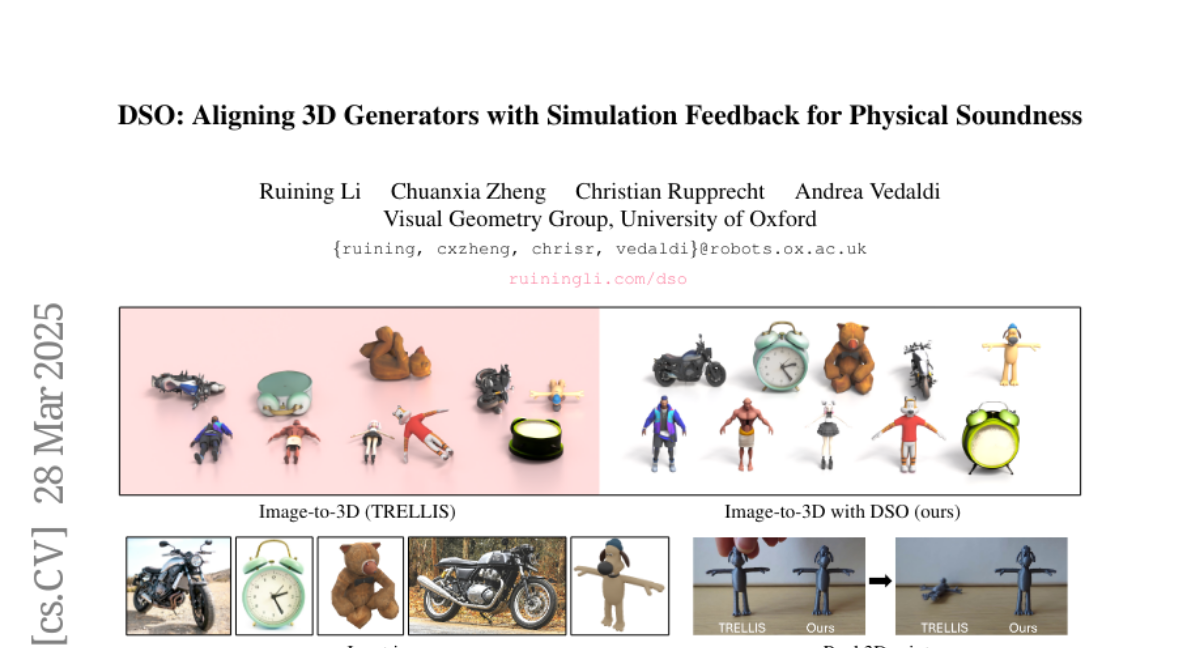

This paper is about making AI generate 3D objects that are not only visually appealing but also physically stable, like a vase that won't fall over.

What's the problem?

AI-generated 3D objects often look good but are unstable and would collapse in the real world.

What's the solution?

The researchers developed a system that uses a physics simulator to give feedback to the AI generator, encouraging it to create stable objects. It can even improve without any ground-truth 3D objects, by collecting simulation feedback on the generator's own outputs.

Why does it matter?

This work matters because it can lead to AI-generated 3D objects that are actually useful in real-world applications, like design and robotics.

Abstract

Most 3D object generators focus on aesthetic quality, often neglecting physical constraints necessary in applications. One such constraint is that the 3D object should be self-supporting, i.e., it should remain balanced under gravity. Prior approaches to generating stable 3D objects used differentiable physics simulators to optimize geometry at test time, which is slow, unstable, and prone to local optima. Inspired by the literature on aligning generative models to external feedback, we propose Direct Simulation Optimization (DSO), a framework that uses feedback from a (non-differentiable) simulator to directly increase the likelihood that the 3D generator outputs stable 3D objects. We construct a dataset of 3D objects labeled with a stability score obtained from the physics simulator. We can then fine-tune the 3D generator using the stability score as the alignment metric, via either direct preference optimization (DPO) or direct reward optimization (DRO), a novel objective that we introduce to align diffusion models without requiring pairwise preferences. Our experiments show that the fine-tuned feed-forward generator, using either the DPO or the DRO objective, is much faster and more likely to produce stable objects than test-time optimization. Notably, the DSO framework works even without any ground-truth 3D objects for training, allowing the 3D generator to self-improve by automatically collecting simulation feedback on its own outputs.
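
To make the DPO route concrete, here is a minimal sketch of how simulator-derived stability scores could be turned into pairwise preferences and scored with the generic DPO loss. It is an illustration under stated assumptions, not the paper's implementation: the function names, the `margin` parameter, the `beta` value, and the example scores are all hypothetical, and the loss is the textbook log-likelihood form of DPO. How the authors adapt it to their diffusion-based 3D generator, and the form of the DRO objective, are not reproduced here.

```python
import math
from itertools import combinations


def build_preference_pairs(samples, margin=0.0):
    """Turn scored samples into (preferred, rejected) pairs.

    `samples` is a list of (object_id, stability_score) tuples generated
    for the same conditioning input. Pairs whose scores differ by no more
    than `margin` are skipped because they carry no clear preference.
    (Hypothetical helper; the paper's pairing rule may differ.)
    """
    pairs = []
    for (id_a, s_a), (id_b, s_b) in combinations(samples, 2):
        if abs(s_a - s_b) <= margin:
            continue
        pairs.append((id_a, id_b) if s_a > s_b else (id_b, id_a))
    return pairs


def dpo_loss(logp_w, logp_l, ref_logp_w, ref_logp_l, beta=0.1):
    """Generic DPO loss for one (preferred, rejected) pair.

    loss = -log sigmoid(beta * ((logp_w - ref_logp_w) - (logp_l - ref_logp_l)))

    `logp_*` are log-likelihoods under the model being fine-tuned and
    `ref_logp_*` under the frozen reference model. `beta` is an assumed
    default, not a value taken from the paper.
    """
    z = beta * ((logp_w - ref_logp_w) - (logp_l - ref_logp_l))
    # Numerically stable evaluation of log(1 + exp(-z)).
    return max(-z, 0.0) + math.log1p(math.exp(-abs(z)))


# Made-up stability scores for three samples from the same input.
scored = [("a", 0.9), ("b", 0.2), ("c", 0.9)]
pairs = build_preference_pairs(scored)

# Loss when the fine-tuned model equals the reference model (no preference learned yet).
baseline = dpo_loss(0.0, 0.0, 0.0, 0.0)
# Loss after the model has shifted probability toward the preferred sample.
improved = dpo_loss(-1.0, -5.0, -3.0, -3.0, beta=1.0)
```

The loss decreases as the fine-tuned model assigns relatively more likelihood to the more stable sample than the reference model does, which is the mechanism by which simulator feedback can steer the generator without differentiating through the simulator.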