Efficient LLaMA-3.2-Vision by Trimming Cross-attended Visual Features

Jewon Lee, Ki-Ung Song, Seungmin Yang, Donguk Lim, Jaeyeon Kim, Wooksu Shin, Bo-Kyeong Kim, Yong Jae Lee, Tae-Ho Kim

2025-04-02

Summary

This paper is about making AI models that understand both images and text work faster by getting rid of unnecessary image details.

What's the problem?

AI models that look at both images and text can be slow because they have to process a lot of information from the images.

What's the solution?

The researchers found a way to remove some of the less important image details without hurting the model's performance, which makes it faster and uses less memory.

Why it matters?

This work matters because it makes these AI models more practical to use in real-world applications.

Abstract

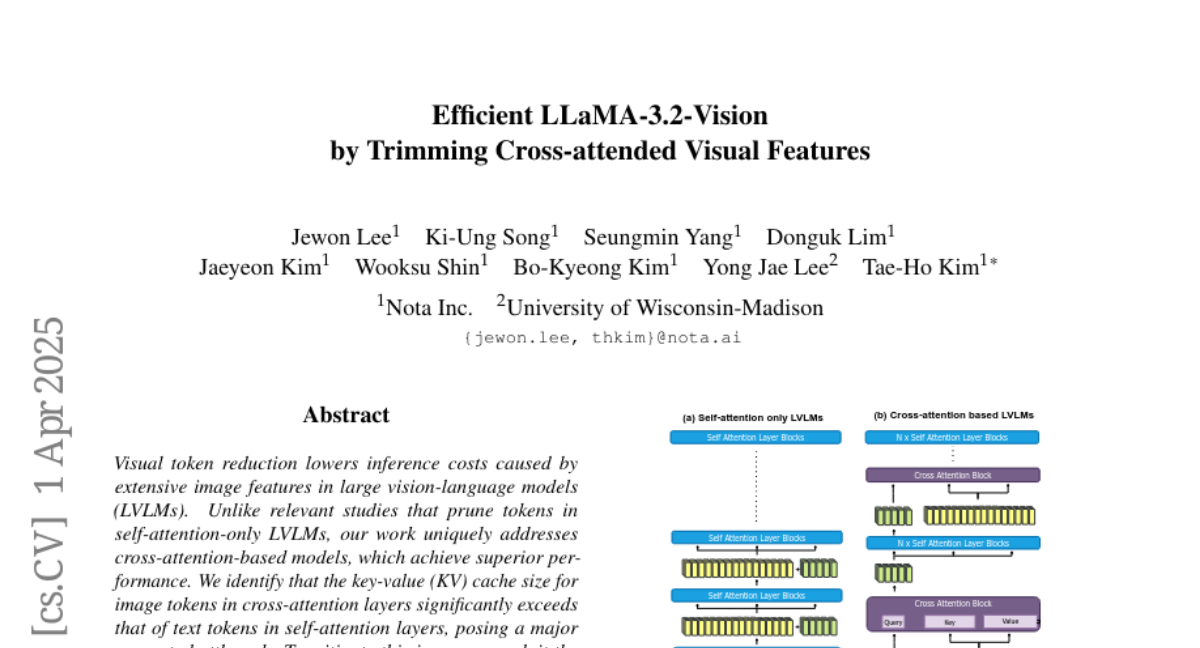

Visual token reduction lowers inference costs caused by extensive image features in large vision-language models (LVLMs). Unlike relevant studies that prune tokens in self-attention-only LVLMs, our work uniquely addresses cross-attention-based models, which achieve superior performance. We identify that the key-value (KV) cache size for image tokens in cross-attention layers significantly exceeds that of text tokens in self-attention layers, posing a major compute bottleneck. To mitigate this issue, we exploit the sparse nature in cross-attention maps to selectively prune redundant visual features. Our Trimmed Llama effectively reduces KV cache demands without requiring additional training. By benefiting from 50%-reduced visual features, our model can reduce inference latency and memory usage while achieving benchmark parity.