Ego-R1: Chain-of-Tool-Thought for Ultra-Long Egocentric Video Reasoning

Shulin Tian, Ruiqi Wang, Hongming Guo, Penghao Wu, Yuhao Dong, Xiuying Wang, Jingkang Yang, Hao Zhang, Hongyuan Zhu, Ziwei Liu

2025-06-17

Summary

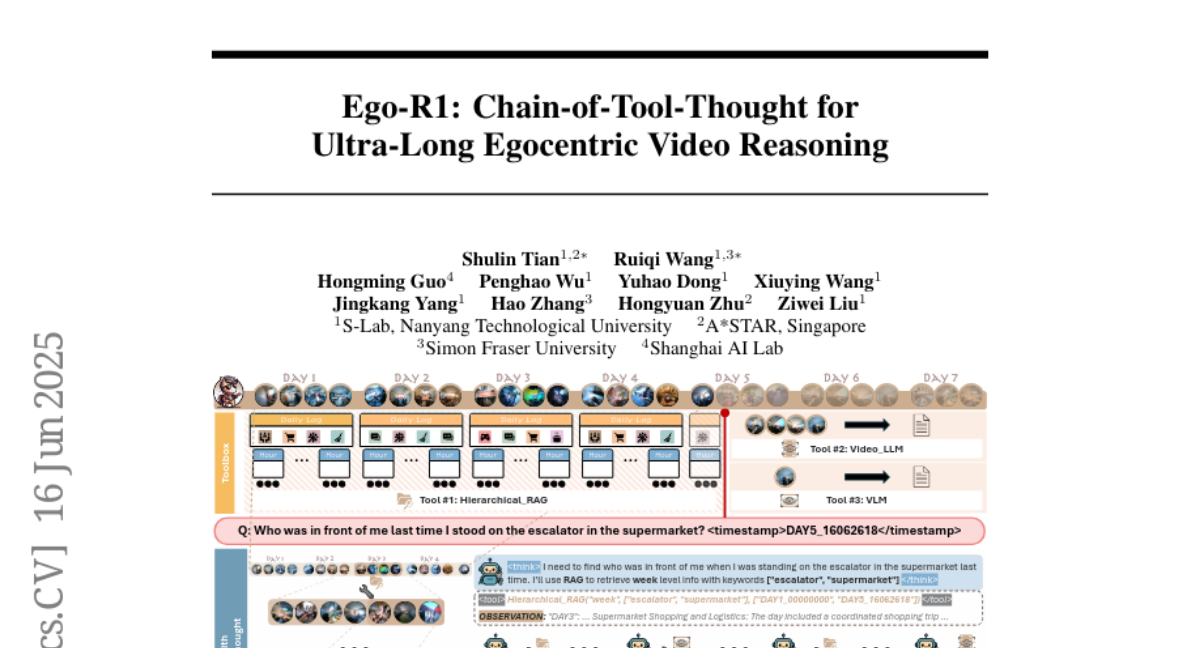

This paper introduces Ego-R1, an AI framework for understanding and reasoning about very long first-person (egocentric) videos, such as footage recorded by someone wearing a camera. It is trained with reinforcement learning and reasons through a process called chain-of-tool-thought: the AI breaks a video question into steps and calls a specialized tool at each step. This lets it handle videos spanning up to a week of recording, much longer than earlier methods cover.

What's the problem?

Most existing AI methods struggle with very long egocentric videos, that is, videos seen from the point of view of the person wearing the camera. Such footage contains a huge amount of information and requires reasoning over long periods, so it is hard for AI to keep track of important details and understand what happens across the whole recording.

What's the solution?

Ego-R1 combines reinforcement learning with a chain-of-tool-thought approach, in which the model reasons in a structured way by calling specialized tools during its thought process. This helps the AI break large reasoning tasks into smaller, manageable steps and pick a suitable tool for each one. Organized this way, Ego-R1 can cover very long time spans in egocentric videos and reason about them better than earlier methods.
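The step-by-step tool calling described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the tool names (`search_logs`, `inspect_clip`), the toy log, and the hand-scripted policy are hypothetical and are not taken from the paper. In Ego-R1 the policy would be a model trained with reinforcement learning rather than a fixed script.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tool signature: takes a query string, returns an observation string.
Tool = Callable[[str], str]
# Hypothetical action format: (kind, thought, tool_name, payload),
# where kind is "call" (use a tool) or "answer" (stop with a final answer).
Action = Tuple[str, str, str, str]


@dataclass
class Step:
    thought: str
    tool: str
    query: str
    observation: str


@dataclass
class Trace:
    question: str
    steps: List[Step] = field(default_factory=list)
    answer: Optional[str] = None


def run_chain_of_tool_thought(
    question: str,
    policy: Callable[[Trace], Action],
    tools: Dict[str, Tool],
    max_steps: int = 8,
) -> Trace:
    """Alternate between a thought, a tool call, and an observation until an answer."""
    trace = Trace(question)
    for _ in range(max_steps):
        kind, thought, name, payload = policy(trace)
        if kind == "answer":
            trace.answer = payload
            return trace
        # Unknown tools produce an observation instead of crashing the loop.
        observation = tools[name](payload) if name in tools else f"unknown tool: {name}"
        trace.steps.append(Step(thought, name, payload, observation))
    return trace  # step budget exhausted; answer stays None


# Toy stand-in for a week of footage (assumption: text descriptions keyed by time).
TOY_LOG = {
    "day3 18:00": "cooking pasta in the kitchen",
    "day5 09:30": "left keys on the hallway shelf",
}


def search_logs(query: str) -> str:
    """Coarse tool: return the first timestamp whose description mentions the query."""
    hits = [t for t, text in TOY_LOG.items() if query in text]
    return hits[0] if hits else "no match"


def inspect_clip(timestamp: str) -> str:
    """Fine-grained tool: return the description of one moment."""
    return TOY_LOG.get(timestamp, "no clip")


def scripted_policy(trace: Trace) -> Action:
    """Hand-written stand-in for a learned policy."""
    if not trace.steps:
        return ("call", "Find when the keys appear.", "search_logs", "keys")
    if len(trace.steps) == 1:
        return ("call", "Look closely at that moment.", "inspect_clip",
                trace.steps[0].observation)
    return ("answer", "The clip description names the location.", "",
            trace.steps[-1].observation)


if __name__ == "__main__":
    tools = {"search_logs": search_logs, "inspect_clip": inspect_clip}
    result = run_chain_of_tool_thought("Where did I leave my keys?", scripted_policy, tools)
    for step in result.steps:
        print(f"{step.thought} -> {step.tool}({step.query!r}) = {step.observation!r}")
    print("answer:", result.answer)
```

The loop keeps the full trace of thoughts, tool calls, and observations, so each new decision can depend on what earlier tools returned. That is the property that lets a large question be split into smaller steps.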

Why does it matter?

This matters because egocentric videos are becoming more common as wearable cameras spread, with applications in personal memory aids, assistive technology, and augmented reality. Accurate, efficient understanding of ultra-long first-person video helps AI provide better insights and support in these fields. Ego-R1 advances AI's ability to process long, complex videos, making it more useful for everyday tasks that depend on long-term video understanding.

Abstract

Ego-R1, a reinforcement learning-based framework, uses a structured tool-augmented chain-of-thought process to reason over ultra-long egocentric videos, achieving better performance than existing methods and extending time coverage to a week.