Emma-X: An Embodied Multimodal Action Model with Grounded Chain of Thought and Look-ahead Spatial Reasoning

Qi Sun, Pengfei Hong, Tej Deep Pala, Vernon Toh, U-Xuan Tan, Deepanway Ghosal, Soujanya Poria

2024-12-17

Summary

This paper talks about Emma-X, a new model designed to help robots understand and perform tasks better by combining visual and language information, allowing them to think ahead and plan their actions more effectively.

What's the problem?

Traditional methods for teaching robots to perform tasks often focus on specific jobs and struggle to adapt when faced with new environments or unfamiliar objects. While some models can understand scenes and plan actions, they have difficulty creating practical steps for robots to follow, especially when tasks require long-term planning and spatial reasoning.

What's the solution?

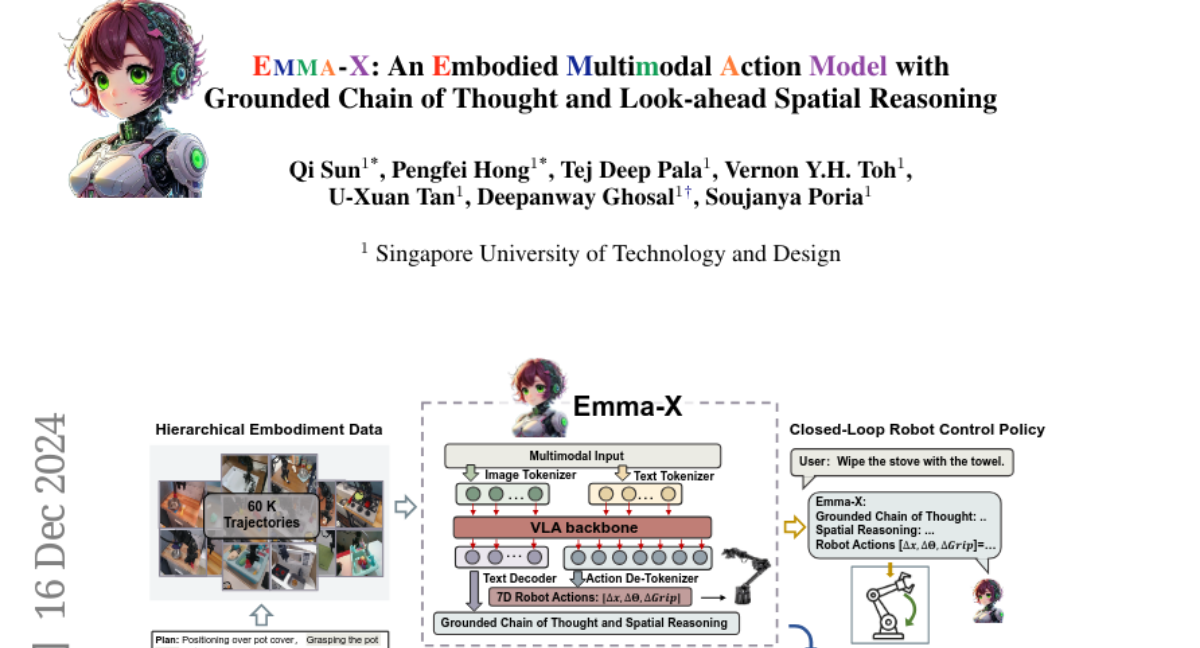

Emma-X addresses these challenges by using a combination of visual data and language instructions. It is trained on a large dataset of robot movements and uses a special technique called trajectory segmentation to improve how it understands tasks. This helps the model break down complex tasks into smaller steps, making it easier for the robot to follow instructions accurately. Emma-X also includes features that allow it to consider future actions while planning, enhancing its ability to handle real-world scenarios.

Why it matters?

This work is important because it improves how robots can learn and execute tasks in diverse settings. By enhancing their ability to reason about space and plan actions, Emma-X could lead to more capable robots that can assist in various applications, from household chores to complex industrial tasks, making them more useful in everyday life.

Abstract

Traditional reinforcement learning-based robotic control methods are often task-specific and fail to generalize across diverse environments or unseen objects and instructions. Visual Language Models (VLMs) demonstrate strong scene understanding and planning capabilities but lack the ability to generate actionable policies tailored to specific robotic embodiments. To address this, Visual-Language-Action (VLA) models have emerged, yet they face challenges in long-horizon spatial reasoning and grounded task planning. In this work, we propose the Embodied Multimodal Action Model with Grounded Chain of Thought and Look-ahead Spatial Reasoning, Emma-X. Emma-X leverages our constructed hierarchical embodiment dataset based on BridgeV2, containing 60,000 robot manipulation trajectories auto-annotated with grounded task reasoning and spatial guidance. Additionally, we introduce a trajectory segmentation strategy based on gripper states and motion trajectories, which can help mitigate hallucination in grounding subtask reasoning generation. Experimental results demonstrate that Emma-X achieves superior performance over competitive baselines, particularly in real-world robotic tasks requiring spatial reasoning.