Enabling Versatile Controls for Video Diffusion Models

Xu Zhang, Hao Zhou, Haoming Qin, Xiaobin Lu, Jiaxing Yan, Guanzhong Wang, Zeyu Chen, Yi Liu

2025-03-24

Summary

This paper is about giving people more control over the videos that AI creates.

What's the problem?

It's hard to tell AI exactly what to do when making a video, like 'make a video of a cat walking on a Canny edge' or 'make a video of a person dancing that uses keypoint control.'

What's the solution?

The researchers made a new system that lets people control different aspects of a video, like the edges, shapes, and poses of people in the video.

Why it matters?

This work matters because it makes it easier for people to create the videos they want with AI.

Abstract

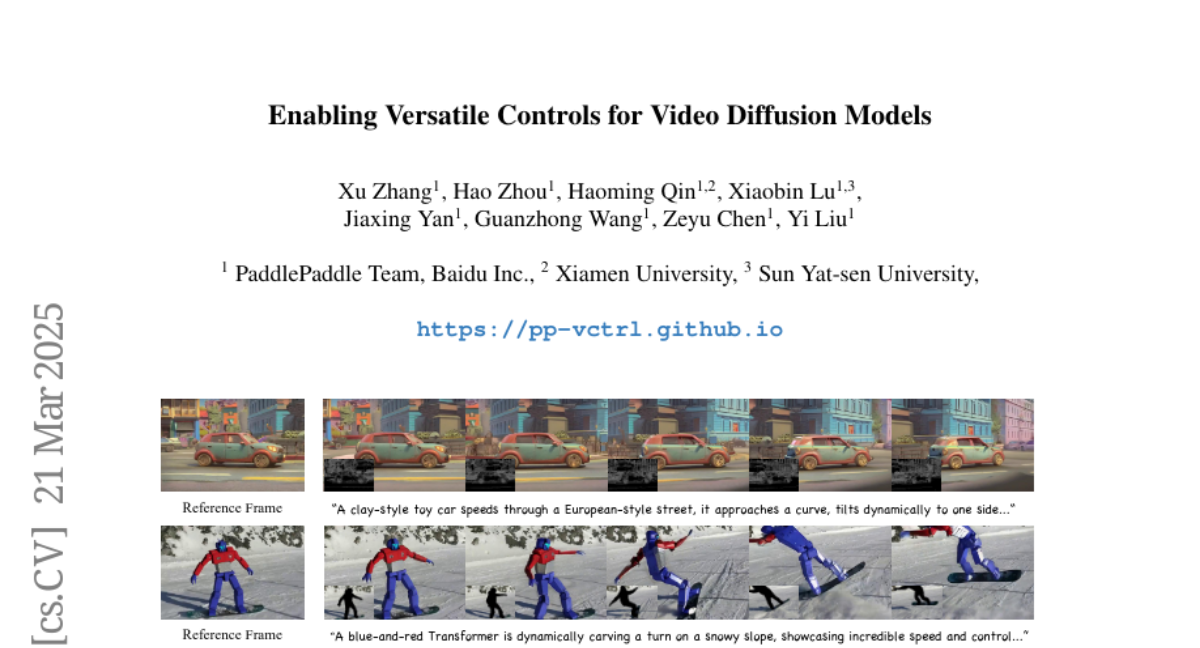

Despite substantial progress in text-to-video generation, achieving precise and flexible control over fine-grained spatiotemporal attributes remains a significant unresolved challenge in video generation research. To address these limitations, we introduce VCtrl (also termed PP-VCtrl), a novel framework designed to enable fine-grained control over pre-trained video diffusion models in a unified manner. VCtrl integrates diverse user-specified control signals-such as Canny edges, segmentation masks, and human keypoints-into pretrained video diffusion models via a generalizable conditional module capable of uniformly encoding multiple types of auxiliary signals without modifying the underlying generator. Additionally, we design a unified control signal encoding pipeline and a sparse residual connection mechanism to efficiently incorporate control representations. Comprehensive experiments and human evaluations demonstrate that VCtrl effectively enhances controllability and generation quality. The source code and pre-trained models are publicly available and implemented using the PaddlePaddle framework at http://github.com/PaddlePaddle/PaddleMIX/tree/develop/ppdiffusers/examples/ppvctrl.