Envisioning Beyond the Pixels: Benchmarking Reasoning-Informed Visual Editing

Xiangyu Zhao, Peiyuan Zhang, Kexian Tang, Hao Li, Zicheng Zhang, Guangtao Zhai, Junchi Yan, Hua Yang, Xue Yang, Haodong Duan

2025-04-04

Summary

This paper introduces RISEBench, a benchmark that tests how well AI models can edit images when following the instruction requires reasoning.

What's the problem?

AI models struggle to edit images when the instructions require reasoning about time, cause and effect, spatial relationships, or logic. They also have trouble preserving the image's appearance after an edit and supporting flexible input formats.

What's the solution?

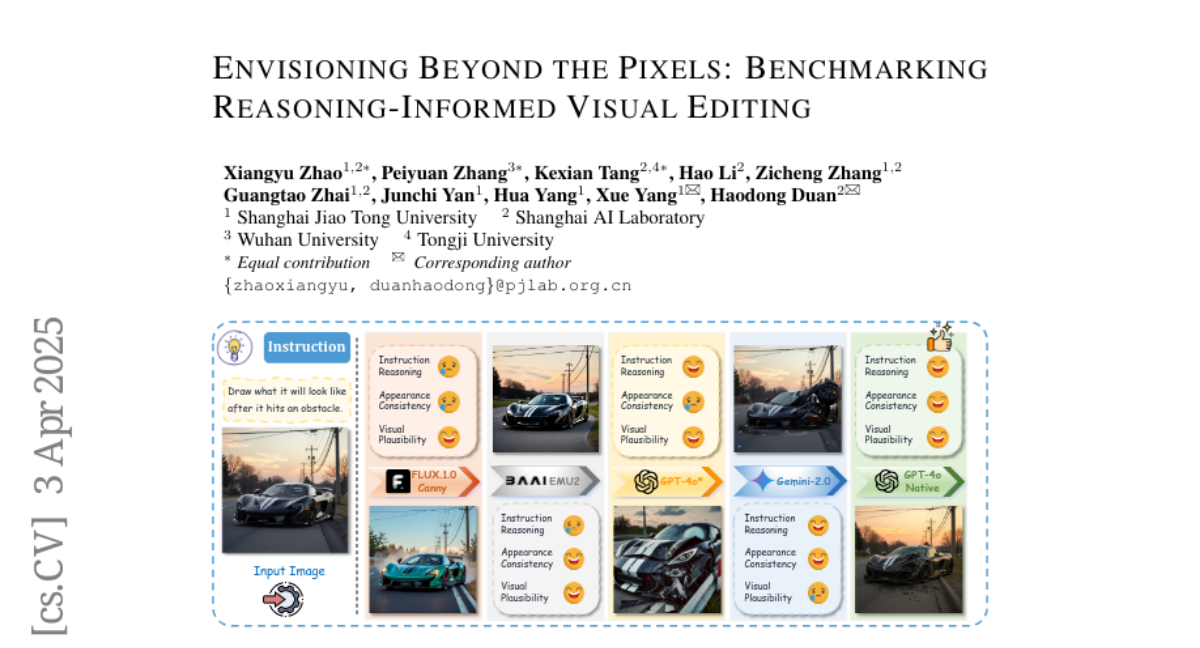

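The researchers created RISEBench, a set of test cases that measure how well AI can edit images across four types of reasoning: temporal, causal, spatial, and logical. They also developed an evaluation framework that scores three things: whether the model followed the reasoning in the instruction, whether it kept the image's appearance consistent, and whether the result looks plausible. Scoring is done by both human judges and an AI model acting as a judge.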

Why does it matter?

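This work matters because it shows where current models fall short: even the best-performing model tested, GPT-4o-Native, struggles with logical reasoning tasks. The benchmark gives researchers a way to measure these weaknesses and track progress on them.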

Abstract

Large Multi-modality Models (LMMs) have made significant progress in visual understanding and generation, but they still face challenges in General Visual Editing, particularly in following complex instructions, preserving appearance consistency, and supporting flexible input formats. To address this gap, we introduce RISEBench, the first benchmark for evaluating Reasoning-Informed viSual Editing (RISE). RISEBench focuses on four key reasoning types: Temporal, Causal, Spatial, and Logical Reasoning. We curate high-quality test cases for each category and propose an evaluation framework that assesses Instruction Reasoning, Appearance Consistency, and Visual Plausibility with both human judges and an LMM-as-a-judge approach. Our experiments reveal that while GPT-4o-Native significantly outperforms other open-source and proprietary models, even this state-of-the-art system struggles with logical reasoning tasks, highlighting an area that remains underexplored. As an initial effort, RISEBench aims to provide foundational insights into reasoning-aware visual editing and to catalyze future research. Though still in its early stages, we are committed to continuously expanding and refining the benchmark to support more comprehensive, reliable, and scalable evaluations of next-generation multimodal systems. Our code and data will be released at https://github.com/PhoenixZ810/RISEBench.
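
The three evaluation dimensions and four reasoning categories named in the abstract suggest a simple per-category score table. The following is a minimal sketch of how such judged results might be aggregated, not the authors' implementation: the `JudgedEdit` record, its field names, the `summarize` helper, and the 0-to-1 score range are all hypothetical, since the scoring scale is not specified here.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean

# The four reasoning categories named in the abstract.
CATEGORIES = ("temporal", "causal", "spatial", "logical")

# The three evaluation dimensions named in the abstract.
DIMENSIONS = (
    "instruction_reasoning",
    "appearance_consistency",
    "visual_plausibility",
)


@dataclass(frozen=True)
class JudgedEdit:
    """One edited image scored by a judge (human or LMM).

    Hypothetical record: scores are assumed to lie in [0, 1].
    """

    category: str
    instruction_reasoning: float
    appearance_consistency: float
    visual_plausibility: float


def summarize(records):
    """Return the mean score per dimension for each reasoning category."""
    by_category = defaultdict(list)
    for record in records:
        if record.category not in CATEGORIES:
            raise ValueError(f"unknown category: {record.category!r}")
        by_category[record.category].append(record)

    return {
        category: {
            dim: mean(getattr(item, dim) for item in items)
            for dim in DIMENSIONS
        }
        for category, items in by_category.items()
    }


if __name__ == "__main__":
    # Made-up scores, for illustration only.
    demo = [
        JudgedEdit("temporal", 1.0, 1.0, 0.5),
        JudgedEdit("temporal", 0.0, 1.0, 0.5),
        JudgedEdit("logical", 0.0, 0.5, 1.0),
    ]
    for category, scores in summarize(demo).items():
        print(category, scores)
```

Keeping the three dimensions separate per category, rather than collapsing them into one number, makes it possible to see cases where a model preserves appearance well but fails the reasoning step.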