ETCH: Generalizing Body Fitting to Clothed Humans via Equivariant Tightness

Boqian Li, Haiwen Feng, Zeyu Cai, Michael J. Black, Yuliang Xiu

2025-03-17

Summary

This paper introduces ETCH (Equivariant Tightness Fitting for Clothed Humans), a pipeline for fitting a body to a 3D point cloud of a clothed person. It estimates how each point on the clothing surface maps to the body underneath, then uses that mapping to locate a sparse set of body markers.

What's the problem?

Fitting a body to a 3D clothed human point cloud is a common but difficult task. Traditional optimization-based approaches rely on multi-stage pipelines that are sensitive to pose initialization, while recent learning-based methods often generalize poorly across diverse poses and garment types.

What's the solution?

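ETCH estimates a cloth-to-body surface mapping through locally approximate SE(3) equivariance, encoding tightness as displacement vectors from the cloth surface to the underlying body. Pose-invariant body features then regress sparse body markers, which reduces clothed human fitting to an inner-body marker fitting task.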

Why it matters?

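On CAPE and 4D-Dress, ETCH outperforms state-of-the-art methods, both tightness-agnostic and tightness-aware, improving body fitting accuracy on loose clothing by 16.7% to 69.5% and shape accuracy by 49.9% on average. In one-shot (out-of-distribution) settings, the equivariant tightness design reduces directional errors by 67.2% to 89.8%, and qualitative results indicate that the method generalizes across challenging poses, unseen shapes, loose clothing, and non-rigid dynamics.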

Abstract

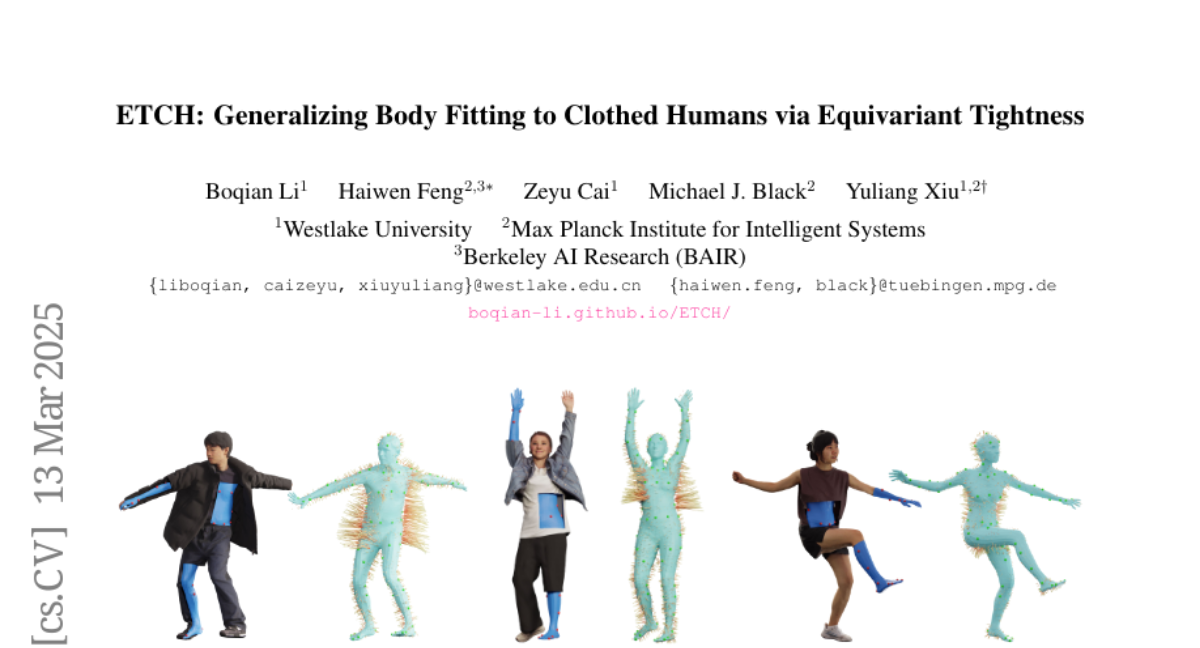

Fitting a body to a 3D clothed human point cloud is a common yet challenging task. Traditional optimization-based approaches use multi-stage pipelines that are sensitive to pose initialization, while recent learning-based methods often struggle with generalization across diverse poses and garment types. We propose Equivariant Tightness Fitting for Clothed Humans, or ETCH, a novel pipeline that estimates cloth-to-body surface mapping through locally approximate SE(3) equivariance, encoding tightness as displacement vectors from the cloth surface to the underlying body. Following this mapping, pose-invariant body features regress sparse body markers, simplifying clothed human fitting into an inner-body marker fitting task. Extensive experiments on CAPE and 4D-Dress show that ETCH significantly outperforms state-of-the-art methods, both tightness-agnostic and tightness-aware, improving body fitting accuracy on loose clothing by 16.7% to 69.5% and shape accuracy by 49.9% on average. Our equivariant tightness design can even reduce directional errors by 67.2% to 89.8% in one-shot (or out-of-distribution) settings. Qualitative results demonstrate strong generalization of ETCH, regardless of challenging poses, unseen shapes, loose clothing, and non-rigid dynamics. We will release the code and models soon for research purposes at https://boqian-li.github.io/ETCH/.
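
The abstract's idea of tightness as a displacement vector from the cloth surface to the body can be illustrated with a small NumPy sketch. This is not the authors' implementation: the split of each vector into a unit direction and a scalar magnitude, the per-point marker labels and confidences, and every function name below (`cloth_to_body`, `aggregate_markers`, `rotation_z`) are assumptions made for illustration, and random arrays stand in for network predictions. The sketch shows only why such a mapping is compatible with SE(3) equivariance: if the predicted directions rotate with the input and the magnitudes stay fixed, the inner-body points and the aggregated markers move rigidly with the scan.

```python
import numpy as np


def cloth_to_body(points, directions, magnitudes):
    """Map cloth points to assumed inner-body points via tightness vectors."""
    # Normalize directions so the magnitude alone carries the distance.
    unit = directions / np.linalg.norm(directions, axis=1, keepdims=True)
    # Each cloth point slides along its direction by its tightness magnitude.
    return points + magnitudes[:, None] * unit


def aggregate_markers(inner_points, labels, confidences, num_markers):
    """Confidence-weighted mean of the inner points assigned to each marker."""
    markers = np.full((num_markers, 3), np.nan)  # NaN marks an empty marker
    for k in range(num_markers):
        mask = labels == k
        weight = confidences[mask].sum()
        if weight > 0:
            weighted = confidences[mask][:, None] * inner_points[mask]
            markers[k] = weighted.sum(axis=0) / weight
    return markers


def rotation_z(angle):
    """Rotation matrix about the z axis (one simple element of SO(3))."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


# Hypothetical stand-ins for per-point network predictions.
rng = np.random.default_rng(0)
num_points, num_markers = 200, 4
points = rng.normal(size=(num_points, 3))
directions = rng.normal(size=(num_points, 3))
magnitudes = rng.uniform(0.0, 0.1, size=num_points)
labels = np.arange(num_points) % num_markers
confidences = rng.uniform(0.1, 1.0, size=num_points)

# A rigid motion (R, t) applied to the whole scan.
R, t = rotation_z(0.7), np.array([0.3, -1.2, 2.0])

inner = cloth_to_body(points, directions, magnitudes)
# Equivariant directions rotate with the input; magnitudes are invariant.
inner_moved = cloth_to_body(points @ R.T + t, directions @ R.T, magnitudes)

markers = aggregate_markers(inner, labels, confidences, num_markers)
markers_moved = aggregate_markers(inner_moved, labels, confidences, num_markers)

# Both checks compare "transform then map" with "map then transform".
print(np.allclose(inner_moved, inner @ R.T + t))
print(np.allclose(markers_moved, markers @ R.T + t))
```

In this toy setting the markers inherit the rigid motion because a normalized weighted mean commutes with rotation and translation. The abstract describes the equivariance as locally approximate, so a learned model would satisfy these identities only approximately and per body region rather than exactly as here.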