Explaining Sources of Uncertainty in Automated Fact-Checking

Jingyi Sun, Greta Warren, Irina Shklovski, Isabelle Augenstein

2025-05-28

Summary

This paper introduces CLUE, a method that explains, in plain language, why an AI model is unsure when it checks whether a claim is true or false.

What's the problem?

When AI models are used for fact-checking, they often cannot say clearly why they are uncertain about an answer, which makes their results hard for people to understand or trust.

What's the solution?

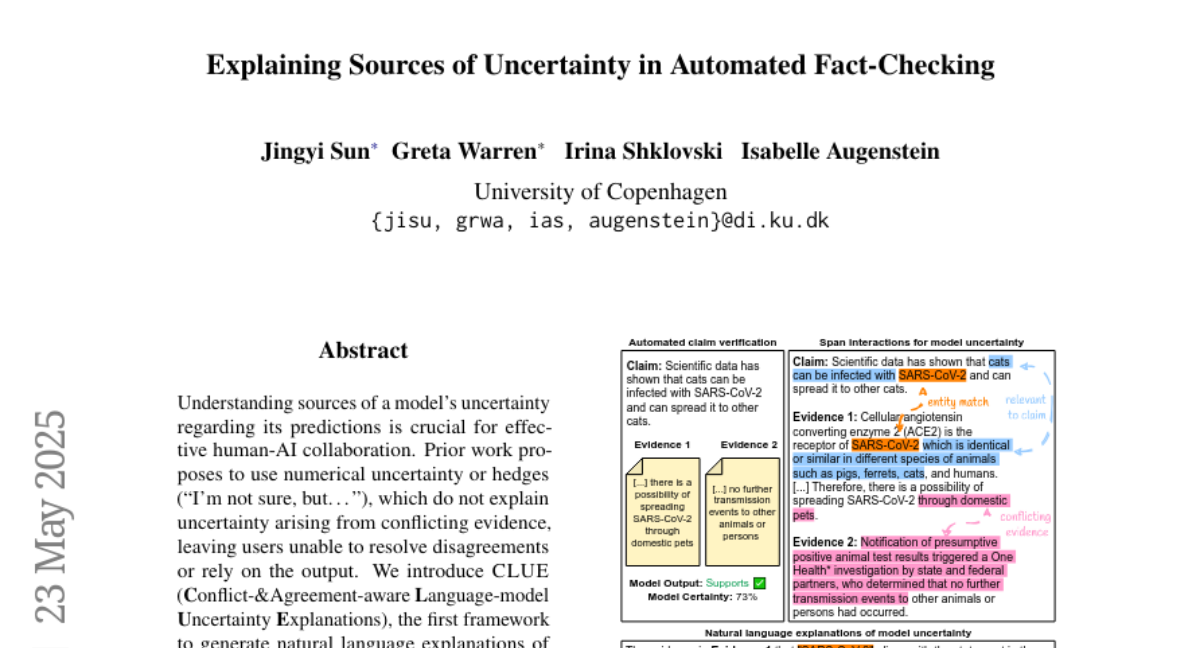

To address this, the researchers developed CLUE. It identifies spans of text that agree or conflict with one another and then explains, in plain language, how those agreements and conflicts make the model unsure. This makes the model's reasoning more transparent and easier to follow.

Why does it matter?

This matters because it helps people understand and trust automated fact-checking, which makes it more useful for news verification, research, and fighting misinformation.

Abstract

CLUE generates natural language explanations for a language model's uncertainty by identifying and explaining conflicts and agreements in text spans, enhancing the clarity and helpfulness of explanations in tasks like fact-checking.
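To make the idea of "explaining uncertainty through conflicts and agreements between text spans" more concrete, here is a minimal Python sketch. It is not CLUE's implementation. It assumes that span pairs, a relation label ("agree" or "conflict"), and a score for how much each pair contributes to the model's uncertainty have already been computed; the names `SpanInteraction`, `explain_uncertainty`, `relation`, and `weight` are hypothetical. CLUE itself generates the explanation text, and the fixed string template below merely stands in for that step.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SpanInteraction:
    """Hypothetical record linking one text span to another (not CLUE's data format)."""
    claim_span: str
    evidence_span: str
    relation: str  # assumed label: "agree" or "conflict"
    weight: float  # assumed score: how much this pair contributes to the uncertainty


def explain_uncertainty(interactions: List[SpanInteraction], top_k: int = 2) -> str:
    """Turn the highest-weighted span interactions into a short plain-language explanation.

    A template stands in for the natural-language generation step.
    """
    if not interactions:
        return "No span interactions were found to explain the model's uncertainty."
    # Keep only the pairs assumed to matter most for the uncertainty.
    ranked = sorted(interactions, key=lambda it: it.weight, reverse=True)[:top_k]
    sentences = []
    for it in ranked:
        if it.relation == "conflict":
            sentences.append(
                f'The evidence "{it.evidence_span}" conflicts with the claim "{it.claim_span}".'
            )
        elif it.relation == "agree":
            sentences.append(
                f'The evidence "{it.evidence_span}" agrees with the claim "{it.claim_span}".'
            )
        else:
            raise ValueError(f"unknown relation: {it.relation!r}")
    return "The model is uncertain for these reasons. " + " ".join(sentences)


if __name__ == "__main__":
    # Toy, made-up spans used only to show the shape of the output.
    pairs = [
        SpanInteraction("founded in 1998", "established in 2001", "conflict", 0.8),
        SpanInteraction("based in Berlin", "headquartered in Berlin", "agree", 0.3),
        SpanInteraction("has 50 employees", "a small team", "agree", 0.1),
    ]
    print(explain_uncertainty(pairs))
```

Ranking by the assumed contribution score keeps the explanation short, surfacing only the span pairs that most plausibly drive the uncertainty rather than listing every overlap between the texts.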