Exploring the Effect of Reinforcement Learning on Video Understanding: Insights from SEED-Bench-R1

Yi Chen, Yuying Ge, Rui Wang, Yixiao Ge, Lu Qiu, Ying Shan, Xihui Liu

2025-04-02

Summary

This paper investigates whether reinforcement learning (RL) can improve AI models' ability to understand videos, and where it falls short.

What's the problem?

AI models struggle with complex videos that require both perception (seeing) and logical reasoning (thinking), and it has been unclear how much RL-based post-training helps with such tasks.

What's the solution?

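The researchers created a benchmark, SEED-Bench-R1, and used it to compare RL with supervised fine-tuning (SFT). They found that RL improves how well AI models perceive videos and generalizes better to unseen scenarios, but it often produces reasoning chains that are less logically coherent.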

Why does it matter?

This work matters because it helps us understand how to build better AI models that can understand videos, which is useful for tasks like video analysis and robotics.

Abstract

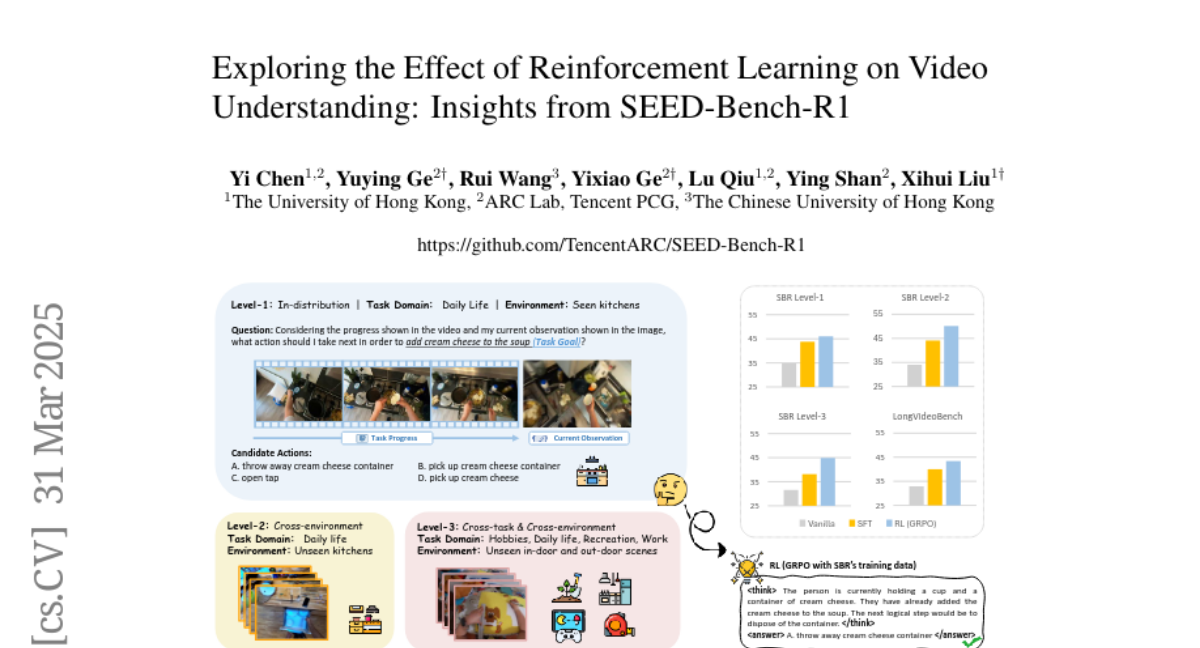

Recent advancements in Chain of Thought (CoT) generation have significantly improved the reasoning capabilities of Large Language Models (LLMs), with reinforcement learning (RL) emerging as an effective post-training approach. Multimodal Large Language Models (MLLMs) inherit this reasoning potential but remain underexplored in tasks requiring both perception and logical reasoning. To address this, we introduce SEED-Bench-R1, a benchmark designed to systematically evaluate post-training methods for MLLMs in video understanding. It includes intricate real-world videos and complex everyday planning tasks in the format of multiple-choice questions, requiring sophisticated perception and reasoning. SEED-Bench-R1 assesses generalization through a three-level hierarchy: in-distribution, cross-environment, and cross-environment-task scenarios, equipped with a large-scale training dataset with easily verifiable ground-truth answers. Using Qwen2-VL-Instruct-7B as a base model, we compare RL with supervised fine-tuning (SFT), demonstrating RL's data efficiency and superior performance on both in-distribution and out-of-distribution tasks, even outperforming SFT on general video understanding benchmarks like LongVideoBench. Our detailed analysis reveals that RL enhances visual perception but often produces less logically coherent reasoning chains. We identify key limitations such as inconsistent reasoning and overlooked visual cues, and suggest future improvements in base model reasoning, reward modeling, and RL robustness against noisy signals.
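Because the benchmark uses multiple-choice questions with easily verifiable ground-truth answers, a model response can be scored by a simple rule rather than a learned judge, and accuracy can be reported separately for each of the three generalization levels. The Python sketch below illustrates that idea only. The `<answer>…</answer>` output format, the A-D option letters, and the function names `extract_choice`, `outcome_reward`, and `accuracy_by_level` are assumptions made for illustration; the abstract does not specify the paper's actual reward function or evaluation code.

```python
import re
from collections import defaultdict
from typing import Dict, Iterable, Optional, Tuple

# ASSUMPTION: the model wraps its final option letter in <answer>...</answer>.
# The abstract does not state the output format; this is only illustrative.
ANSWER_PATTERN = re.compile(r"<answer>\s*([A-D])\s*</answer>")


def extract_choice(response: str) -> Optional[str]:
    """Return the option letter from the last <answer> tag, or None if absent."""
    matches = ANSWER_PATTERN.findall(response)
    return matches[-1] if matches else None


def outcome_reward(response: str, truth: str) -> float:
    """Rule-based reward: 1.0 if the extracted choice matches the ground truth."""
    return 1.0 if extract_choice(response) == truth else 0.0


def accuracy_by_level(
    records: Iterable[Tuple[str, str, str]]
) -> Dict[str, float]:
    """Average the reward per generalization level.

    Each record is (level, model_response, ground_truth_letter), where level
    is a label such as "in-distribution" or "cross-environment".
    """
    scores = defaultdict(list)
    for level, response, truth in records:
        scores[level].append(outcome_reward(response, truth))
    return {level: sum(vals) / len(vals) for level, vals in scores.items()}


if __name__ == "__main__":
    # Made-up responses, not benchmark data.
    demo = [
        ("in-distribution", "<think>...</think><answer>B</answer>", "B"),
        ("in-distribution", "<answer>C</answer>", "B"),
        ("cross-environment", "The answer is B.", "B"),  # no tag, so no reward
    ]
    print(accuracy_by_level(demo))
```

Note that a reward of this kind checks only the final answer, so it gives no signal about whether the preceding reasoning chain is coherent, which is in keeping with the abstract's observation that RL can improve perception while producing less logically consistent reasoning.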