Exploring the Latent Capacity of LLMs for One-Step Text Generation

Gleb Mezentsev, Ivan Oseledets

2025-05-28

Summary

This paper shows that large language models (LLMs) can generate long pieces of text in a single step, instead of producing them one token at a time as they usually do.

What's the problem?

Most current language models generate text autoregressively: they add one token at a time, and each new token requires another forward pass through the model. This makes generation slow and inefficient, especially for longer texts.

What's the solution?

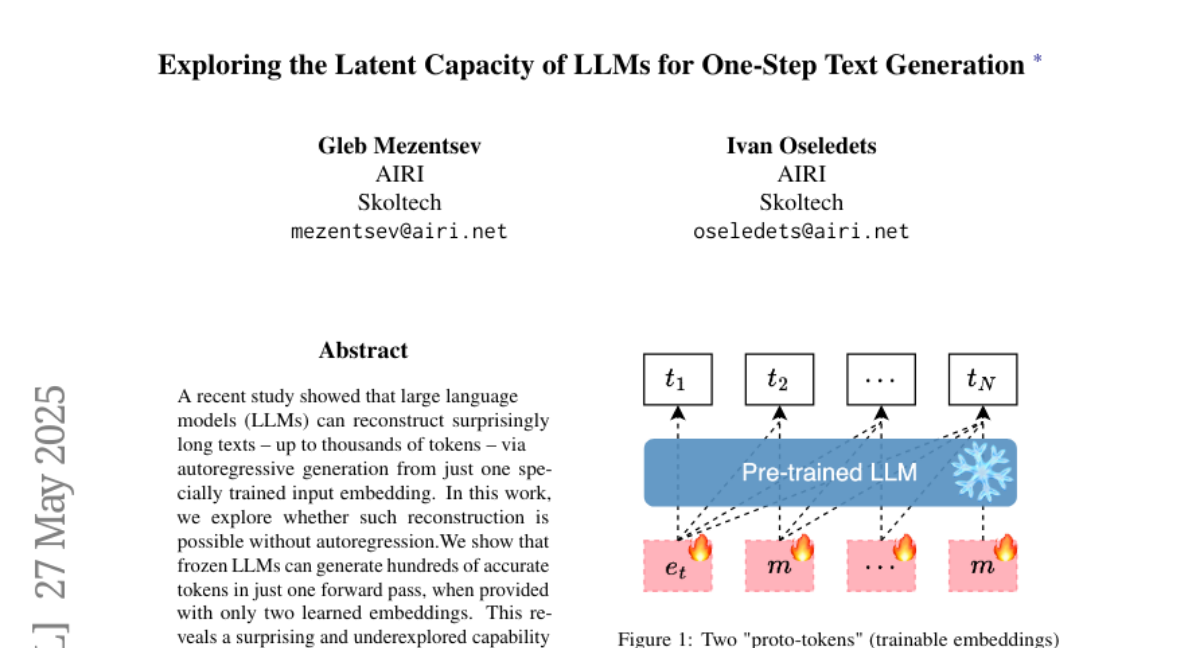

The researchers found that, when given suitable learned input representations (embeddings), these models can produce entire segments of text in a single forward pass, skipping the usual token-by-token decoding loop.
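To make the contrast concrete, here is a minimal sketch of the difference in the number of forward passes. It is a toy illustration under stated assumptions, not the authors' method: `ToyModel`, `autoregressive_generate`, and `one_step_generate` are hypothetical names, the "model" is a simple deterministic function rather than an LLM, and integers stand in for the learned embeddings, which in practice would be continuous vectors optimized so that the output matches a target text.

```python
class ToyModel:
    """Hypothetical stand-in for a frozen LLM. It only counts forward passes."""

    def __init__(self, vocab_size=50):
        self.vocab_size = vocab_size
        self.calls = 0  # number of forward passes performed so far

    def forward(self, inputs):
        # One forward pass: return one predicted token id per input position.
        # Position i depends only on inputs[: i + 1], mimicking causal attention.
        self.calls += 1
        outputs = []
        state = 0
        for x in inputs:
            state = (state * 31 + x + 7) % self.vocab_size
            outputs.append(state)
        return outputs


def autoregressive_generate(model, prompt, n_tokens):
    """Standard decoding: one forward pass per generated token."""
    sequence = list(prompt)
    for _ in range(n_tokens):
        next_token = model.forward(sequence)[-1]  # keep only the last prediction
        sequence.append(next_token)
    return sequence[len(prompt):]


def one_step_generate(model, learned_inputs):
    """One-step decoding: a single forward pass, reading a token at every position."""
    return model.forward(learned_inputs)


if __name__ == "__main__":
    n = 8

    ar_model = ToyModel()
    ar_tokens = autoregressive_generate(ar_model, prompt=[1, 2, 3], n_tokens=n)
    print(len(ar_tokens), "tokens in", ar_model.calls, "forward passes")

    os_model = ToyModel()
    # Placeholder integers; real inputs would be learned embedding vectors.
    os_tokens = one_step_generate(os_model, learned_inputs=[4] + [9] * (n - 1))
    print(len(os_tokens), "tokens in", os_model.calls, "forward pass")
```

The sketch leaves out how the input embeddings are learned. It only shows why reading an output at every position of one forward pass replaces a loop of N passes.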

Why does it matter?

This matters because it could make AI text generation much faster and more efficient, which would help chatbots, writing assistants, and any other application that needs to produce large amounts of text quickly.

Abstract

LLMs can generate long text segments in a single forward pass using learned embeddings, revealing a capability for multi-token generation without iterative decoding.