FedNano: Toward Lightweight Federated Tuning for Pretrained Multimodal Large Language Models

Yao Zhang, Hewei Gao, Haokun Chen, Weiguo Li, Yunpu Ma, Volker Tresp

2025-06-19

Summary

This paper introduces FedNano, a federated learning framework for adapting multimodal large language models to different users. It keeps the large model on a central server and places small modules called NanoEdge on client devices, where they personalize the model locally.

What's the problem?

The problem is that multimodal large language models are too large and computationally demanding to customize separately for many users, especially when user data must stay private and client devices have limited computing power.

What's the solution?

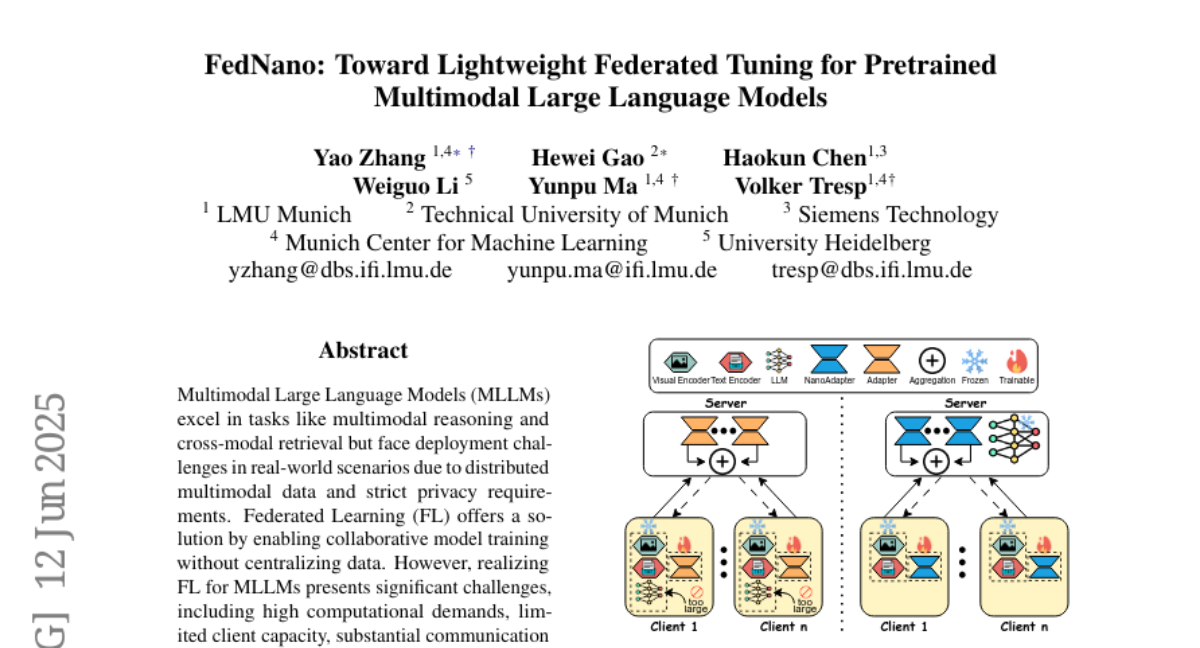

The researchers created a federated learning framework where the large model stays on powerful central servers, and small NanoEdge modules on client devices learn user-specific features. This way, the models adapt locally without sending private data to the server, addressing privacy and scalability challenges.
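The split described above can be illustrated with a minimal sketch. It is not the paper's implementation: the two-parameter "adapter", the toy regression data, and the sample-weighted averaging (FedAvg-style) are all assumptions chosen to keep the example small, and the names `ClientUpdate`, `local_train`, and `aggregate` are hypothetical. The point it shows is that each client trains only a small set of weights on its own data and sends those weights, never the data, to the server.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ClientUpdate:
    """What a client sends to the server: adapter weights and a sample count, no raw data."""
    adapter_weights: List[float]
    num_samples: int


def local_train(weights: List[float], data: List[Tuple[float, float]],
                lr: float = 0.05, epochs: int = 20) -> ClientUpdate:
    """Fit a toy adapter y ~ w * x + b on one client's private data with SGD.

    Stand-in for client-side adaptation; a real adapter would be far larger.
    """
    w, b = weights
    for _ in range(epochs):
        for x, y in data:
            err = (w * x + b) - y
            w -= lr * err * x
            b -= lr * err
    return ClientUpdate([w, b], len(data))


def aggregate(updates: List[ClientUpdate]) -> List[float]:
    """Sample-weighted average of client adapter weights (FedAvg-style, an assumption)."""
    total = sum(u.num_samples for u in updates)
    dim = len(updates[0].adapter_weights)
    return [
        sum(u.adapter_weights[i] * u.num_samples for u in updates) / total
        for i in range(dim)
    ]


if __name__ == "__main__":
    global_adapter = [0.0, 0.0]
    # Each inner list is one client's private dataset; it is only read inside local_train.
    client_data = [
        [(1.0, 2.0), (2.0, 4.0)],
        [(1.0, 3.0), (2.0, 5.0), (3.0, 7.0)],
    ]
    for _ in range(5):  # five communication rounds
        updates = [local_train(global_adapter, d) for d in client_data]
        global_adapter = aggregate(updates)
    print(global_adapter)
```

In this sketch the server only ever sees `ClientUpdate` objects, so the communication cost per round scales with the adapter size rather than with the size of the central model or the local datasets.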

Why does it matter?

This matters because it allows large AI models to be personalized for many users without collecting their private data, which can improve performance for each user while keeping the on-device workload small enough for resource-limited devices.

Abstract

FedNano is a federated learning framework that centralizes large language models on servers and uses NanoEdge modules for client-specific adaptation, addressing scalability and privacy issues.