Fine-Tune an SLM or Prompt an LLM? The Case of Generating Low-Code Workflows

Orlando Marquez Ayala, Patrice Bechard, Emily Chen, Maggie Baird, Jingfei Chen

2025-06-02

Summary

This paper examines whether it is better to fine-tune a small language model (SLM) for a specific task or to prompt a large language model (LLM) with instructions, using the generation of low-code workflows as the test case.

What's the problem?

People want to use AI to generate structured outputs, such as step-by-step workflows for computer tasks, but it is not clear whether prompting a big, general-purpose model or fine-tuning a smaller, specialized one gives better results.

What's the solution?

The researchers compared both approaches and found that a small language model fine-tuned for this kind of task produces higher-quality results than a prompted large language model. It also uses fewer tokens, which makes it cheaper and more efficient.

Why does it matter?

This is important because it shows that smaller, focused AI models can sometimes do a better job than larger, more expensive ones, especially for specific tasks, which can save time and money for businesses and developers.

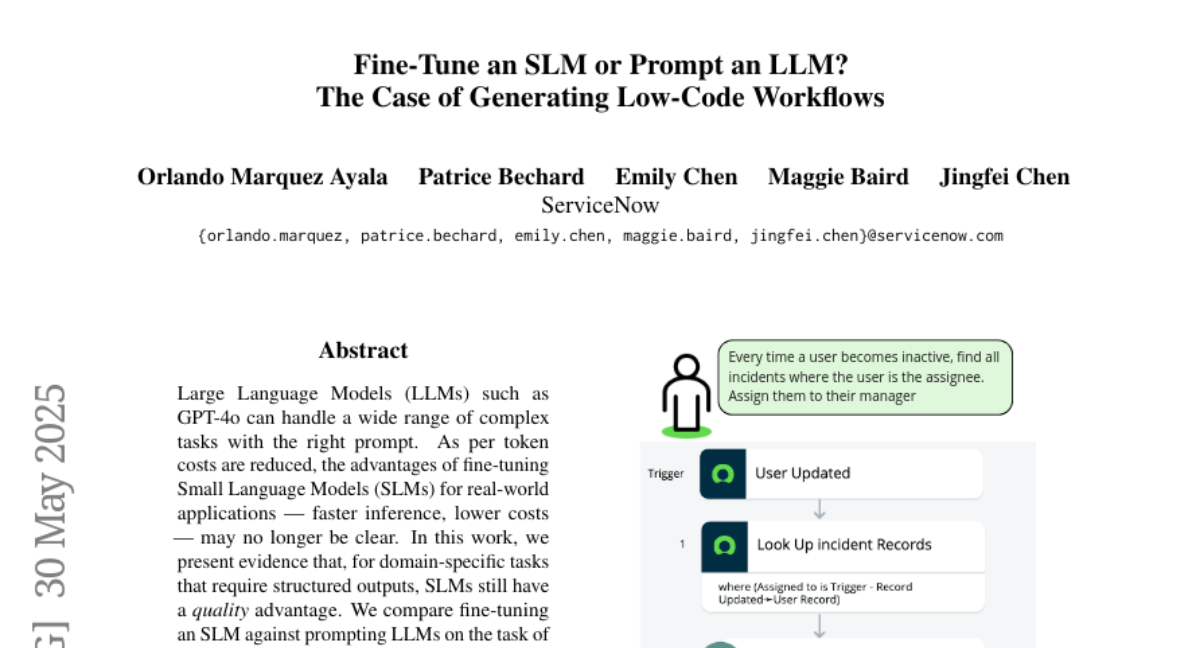

Abstract

Fine-tuning Small Language Models yields higher quality on structured output tasks than prompting Large Language Models, even as falling per-token costs make prompting cheaper.