FitDiT: Advancing the Authentic Garment Details for High-fidelity Virtual Try-on

Boyuan Jiang, Xiaobin Hu, Donghao Luo, Qingdong He, Chengming Xu, Jinlong Peng, Jiangning Zhang, Chengjie Wang, Yunsheng Wu, Yanwei Fu

2024-11-19

Summary

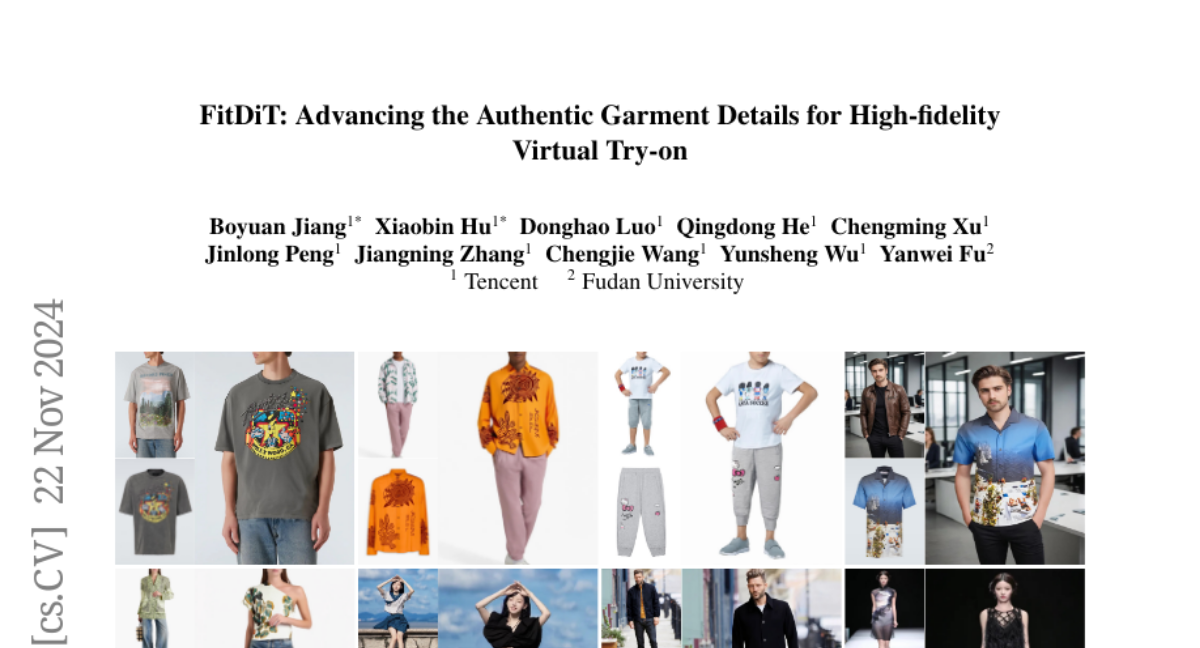

This paper introduces FitDiT, a virtual try-on method built on Diffusion Transformers (DiT) that aims to preserve fine garment details and produce a correct fit in high-resolution images.

What's the problem?

While virtual try-on technology has improved, it still struggles with accurately displaying garment textures and ensuring that clothes fit well on different body types. Many existing methods fail to maintain the realistic appearance of fabrics and can produce ill-fitting results, especially when trying on clothes from different categories.

What's the solution?

FitDiT builds on Diffusion Transformers (DiT) and adds three components. A garment texture extractor captures rich details such as stripes, patterns, and text. A frequency-domain loss helps the model retain high-frequency garment details. A dilated-relaxed mask strategy adapts to the correct length of the garment, so the generated clothing does not simply fill the whole masked area and end up looking oversized or undersized, especially in cross-category try-on. The authors report that FitDiT outperforms the compared baselines in both qualitative and quantitative evaluations.
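To make the frequency-domain idea concrete, here is a minimal NumPy sketch of a loss that compares two images in Fourier space. It is an illustration under stated assumptions, not the paper's formulation: the function name `frequency_distance_loss` is hypothetical, and the choice of a mean absolute distance between the complex spectra is an assumption made for this example.

```python
import numpy as np


def frequency_distance_loss(pred, target):
    """Mean absolute distance between the 2-D Fourier spectra of two images.

    Hypothetical helper for illustration only; the paper's exact loss is
    not specified in this summary. Inputs have shape (H, W) or (C, H, W).
    """
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    if pred.shape != target.shape:
        raise ValueError("pred and target must have the same shape")
    # fft2 transforms the last two axes; norm="ortho" keeps the scale
    # independent of the image size.
    diff = np.fft.fft2(pred, norm="ortho") - np.fft.fft2(target, norm="ortho")
    # An absolute (L1-style) distance is used here because, with an
    # orthonormal FFT, a squared distance would equal plain pixel-space MSE.
    return float(np.mean(np.abs(diff)))


rng = np.random.default_rng(0)
target = rng.random((3, 32, 32))
# Averaging each pixel with its horizontal neighbour removes fine detail.
blurred = (target + np.roll(target, 1, axis=-1)) / 2

print(frequency_distance_loss(target, target))       # 0.0
print(frequency_distance_loss(blurred, target) > 0)  # True
```

In a training setup, a term like this would typically be added to the usual diffusion objective, so that errors in fine patterns such as stripes or printed text are penalized directly in the frequency domain.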

Why does it matter?

This research is significant because it advances the field of virtual try-on technology, making it more realistic and user-friendly. By improving how clothes are displayed and ensuring they fit better, FitDiT can enhance online shopping experiences, helping customers make more informed decisions about their purchases.

Abstract

Although image-based virtual try-on has made considerable progress, emerging approaches still encounter challenges in producing high-fidelity and robust fitting images across diverse scenarios. These methods often struggle with issues such as texture-aware maintenance and size-aware fitting, which hinder their overall effectiveness. To address these limitations, we propose a novel garment perception enhancement technique, termed FitDiT, designed for high-fidelity virtual try-on using Diffusion Transformers (DiT), which allocate more parameters and attention to high-resolution features. First, to further improve texture-aware maintenance, we introduce a garment texture extractor that incorporates garment priors evolution to fine-tune garment features, helping it better capture rich details such as stripes, patterns, and text. Additionally, we introduce frequency-domain learning by customizing a frequency distance loss to enhance high-frequency garment details. To tackle the size-aware fitting issue, we employ a dilated-relaxed mask strategy that adapts to the correct length of garments, preventing the generation of garments that fill the entire mask area during cross-category try-on. Equipped with the above design, FitDiT surpasses all baselines in both qualitative and quantitative evaluations. It excels in producing well-fitting garments with photorealistic and intricate details, while also achieving a competitive inference time of 4.57 seconds for a single 1024x768 image after DiT structure slimming, outperforming existing methods.
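The abstract names the dilated-relaxed mask strategy without describing how the mask is built. The sketch below shows one plausible reading, purely as an assumption: the garment region is first dilated, then relaxed to its bounding rectangle, so that the mask outline no longer reveals the exact shape or length of the original garment. The function names `dilate`, `relax_to_box`, and `dilated_relaxed_mask`, and the `radius` parameter, are hypothetical and do not come from the paper.

```python
import numpy as np


def dilate(mask, radius):
    """Binary dilation with a square (2*radius + 1) structuring element."""
    mask = np.asarray(mask, dtype=bool)
    h, w = mask.shape
    padded = np.pad(mask, radius, mode="constant", constant_values=False)
    out = np.zeros_like(mask)
    # OR together every shifted copy of the mask within the square window.
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            out |= padded[dy:dy + h, dx:dx + w]
    return out


def relax_to_box(mask):
    """Replace a mask by its axis-aligned bounding rectangle."""
    mask = np.asarray(mask, dtype=bool)
    out = np.zeros_like(mask)
    ys, xs = np.nonzero(mask)
    if ys.size:  # leave an empty mask empty
        out[ys.min():ys.max() + 1, xs.min():xs.max() + 1] = True
    return out


def dilated_relaxed_mask(garment_region, radius=2):
    """Assumed construction: dilate first, then relax to the bounding box."""
    return relax_to_box(dilate(garment_region, radius))


region = np.zeros((10, 10), dtype=bool)
region[4:6, 4:6] = True               # a 2x2 garment region
mask = dilated_relaxed_mask(region, radius=2)
print(int(mask.sum()))                # 36 (a 6x6 rectangle)
```

A loose mask like this only defines the editable area. Teaching the model to generate a garment of the correct length inside it, rather than filling the whole area, is a matter of training that this sketch does not cover.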