Florenz: Scaling Laws for Systematic Generalization in Vision-Language Models

Julian Spravil, Sebastian Houben, Sven Behnke

2025-03-19

Summary

This paper studies how AI models that understand both images and language can handle tasks in languages they were not trained on for that task, and how this ability changes with model size and the amount of training data seen.

What's the problem?

Current multilingual vision-language models usually build on large pre-trained multilingual language models. These trade task performance for language coverage (the "curse of multilinguality"), struggle with ambiguous words, and lag behind recent advances.

What's the solution?

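The researchers created Florenz, a family of vision-language models with 0.4B to 11.2B parameters built from two monolingual pre-trained components, Florence-2 and Gemma-2. They trained it on synthetic data in which translation covers every language but image captioning covers only some, then measured how well it captions images in languages for which it saw only translation data.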

Why it matters?

The results show that a model can learn a task in a language without task-specific training data for that language, and that this indirect learning follows a scaling law as model size and training budget grow. This reduces the need to collect captioning data for every language.

Abstract

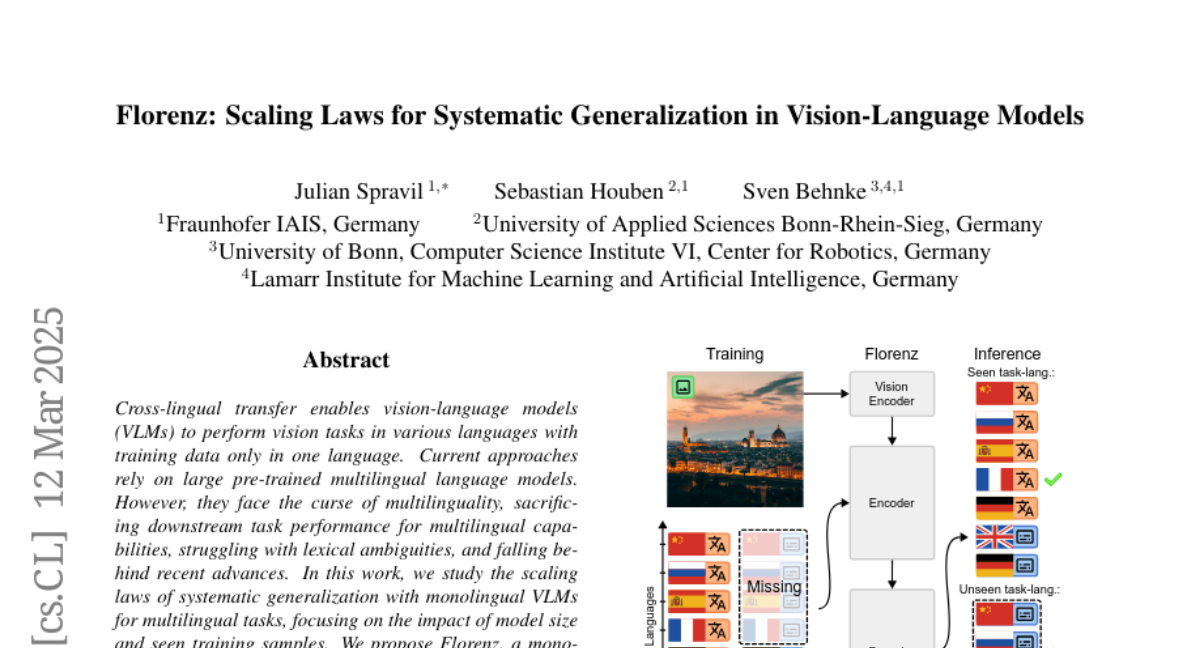

Cross-lingual transfer enables vision-language models (VLMs) to perform vision tasks in various languages with training data only in one language. Current approaches rely on large pre-trained multilingual language models. However, they face the curse of multilinguality, sacrificing downstream task performance for multilingual capabilities, struggling with lexical ambiguities, and falling behind recent advances. In this work, we study the scaling laws of systematic generalization with monolingual VLMs for multilingual tasks, focusing on the impact of model size and seen training samples. We propose Florenz, a monolingual encoder-decoder VLM with 0.4B to 11.2B parameters combining the pre-trained VLM Florence-2 and the large language model Gemma-2. Florenz is trained with varying compute budgets on a synthetic dataset that features intentionally incomplete language coverage for image captioning, thereby testing generalization from the fully covered translation task. We show that not only does indirectly learning unseen task-language pairs adhere to a scaling law, but also that with our data generation pipeline and the proposed Florenz model family, image captioning abilities can emerge in a specific language even when only data for the translation task is available. Fine-tuning on a mix of downstream datasets yields competitive performance and demonstrates promising scaling trends in multimodal machine translation (Multi30K, CoMMuTE), lexical disambiguation (CoMMuTE), and image captioning (Multi30K, XM3600, COCO Karpathy).
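The "intentionally incomplete language coverage" described in the abstract can be pictured as a split over task-language pairs. The following is a minimal sketch of that idea, not the authors' data pipeline: the language codes, the set of languages with captioning data, and the function name `split_task_language_pairs` are all illustrative assumptions.

```python
from itertools import product

# Hypothetical language and task sets; the abstract does not list the
# languages actually used, so these are placeholders.
LANGUAGES = ["en", "de", "fr", "es", "zh"]
TASKS = ["translation", "captioning"]

# Assumption: captioning data exists only for this subset of languages.
CAPTIONING_SEEN = {"en", "de"}


def split_task_language_pairs(languages, tasks, captioning_seen):
    """Split all (task, language) pairs into seen and unseen sets.

    Translation is covered for every language; captioning is covered only
    for the languages in `captioning_seen`. The unseen pairs are the ones a
    generalization test would evaluate.
    """
    seen, unseen = [], []
    for task, lang in product(tasks, languages):
        if task == "translation" or lang in captioning_seen:
            seen.append((task, lang))
        else:
            unseen.append((task, lang))
    return seen, unseen


seen_pairs, unseen_pairs = split_task_language_pairs(
    LANGUAGES, TASKS, CAPTIONING_SEEN
)
print("train on:", seen_pairs)
print("evaluate generalization on:", unseen_pairs)
```

Under these assumptions, a model would be trained only on the seen pairs and evaluated on captioning in the remaining languages, which is the kind of unseen task-language pair the abstract refers to.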